Introduction

Zhipu AI has released GLM-4.6, the newest model in the General Language Model (GLM) series. Unlike many proprietary frontier systems, the GLM family remains open-weight and is licensed under permissive terms such as MIT and Apache, making it one of the few frontier-scale models that organizations can self-host.

GLM-4.6 builds on the reasoning and coding strengths of GLM-4.5 and introduces several major upgrades:

The context window expands from 128k to 200k tokens, allowing the model to analyze entire books, codebases, or multiple documents in a single pass.

The model retains a Mixture-of-Experts architecture with 355 billion total parameters and roughly 32 billion active per token, but with improved reasoning quality, coding accuracy, and tool-call reliability.

New thinking modes improve multi-step reasoning and complex planning.

The model supports native tool calls and decides when to call external functions or services.

All weights and code are openly available, allowing for self-hosting, fine-tuning, and enterprise customization.

These upgrades make GLM-4.6 a strong open alternative for developers who need high-performance coding assistance, long-context analysis, and agent workflows.

Model architecture and technical details

Mixture-of-Experts core

GLM-4.6 is built on a Mixture-of-Experts (MoE) Transformer architecture. The full model contains 355 billion parameters, but because expert routing is sparse, only about 32 billion are active on each forward pass. A gating network selects the appropriate experts for each token, reducing computational overhead while retaining the benefits of a large parameter pool.
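The sparse routing described above can be illustrated with a small top-k gating sketch. It is not GLM-4.6's actual router: the expert count, the value of `top_k`, and the softmax-then-renormalize scheme are assumptions chosen only to show how a gating network activates a few experts per token.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(gate_logits, top_k=2):
    """Pick the top_k experts for one token and renormalize their weights.

    gate_logits: one score per expert, as a gating network might produce.
    Returns (expert_index, weight) pairs whose weights sum to 1.
    """
    probs = softmax(gate_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return [(i, probs[i] / mass) for i in chosen]


# Toy setup: 8 experts with 2 active per token (illustrative values only).
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(8)]
print(route_token(logits, top_k=2))

# Share of parameters active per token, using the figures quoted above.
print(f"{32e9 / 355e9:.1%} of parameters active per token")
```

Only the selected experts run for a given token, which is why compute scales with the roughly 32 billion active parameters rather than the full 355 billion.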

Key architectural features inherited from GLM-4.5 and refined in version 4.6 include:

Grouped-query attention. Improves long-range interactions by using multiple attention heads and partial RoPE for efficient scaling.

QK-Norm. Stabilizes attention logits by normalizing the interaction between queries and keys.

Muon optimizer. Allows larger batch sizes and faster convergence.

Multi-token prediction (MTP) head. Predicts multiple tokens per step, which improves the model's performance in thinking mode.
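To make the QK-Norm item above concrete, here is a minimal sketch of why normalizing queries and keys keeps attention logits in a stable range. It uses a plain RMS normalization without learned gains, which is an assumption for illustration; the exact normalization used in GLM-4.6 is not described in this article.

```python
import math


def rms_normalize(vec, eps=1e-6):
    """Scale a vector to unit root-mean-square (no learned gain in this sketch)."""
    rms = math.sqrt(sum(x * x for x in vec) / len(vec) + eps)
    return [x / rms for x in vec]


def attention_logit(query, key, qk_norm=True):
    """Scaled dot-product logit for one query/key pair."""
    if qk_norm:
        query, key = rms_normalize(query), rms_normalize(key)
    dot = sum(q * k for q, k in zip(query, key))
    return dot / math.sqrt(len(query))


# A query/key pair with unusually large activations.
q = [40.0, -35.0, 50.0, 45.0]
k = [38.0, -30.0, 55.0, 42.0]

print(attention_logit(q, k, qk_norm=False))  # grows with activation magnitude
print(attention_logit(q, k, qk_norm=True))   # bounded by sqrt(head_dim)
```

With normalization, rescaling the query or key no longer changes the logit, so a few large activations cannot saturate the softmax.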

Hybrid reasoning modes

GLM-4.6 supports two reasoning modes.

Standard mode provides fast responses for everyday interactions.

Thinking mode slows down decoding, uses the MTP head for multi-token planning, and generates an internal chain of thought. This mode improves performance on logic problems, long coding tasks, and multi-step agent workflows.

Extended context window

One of the most significant upgrades is the expanded context window. Moving from 128,000 tokens to 200,000 tokens allows GLM-4.6 to handle large codebases, full legal documents, long transcripts, or multi-chapter content without chunking. This is especially valuable for engineering tasks, research analysis, and long-form summarization.
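Before sending a large input, it can help to check whether it plausibly fits in the window. The sketch below is a rough budget check, not a tokenizer: the four-characters-per-token ratio and the `reserved_for_output` budget are assumptions, and real token counts depend on the model's tokenizer and the language of the text.

```python
CONTEXT_WINDOW = 200_000   # tokens, per the figure quoted above
CHARS_PER_TOKEN = 4        # rough heuristic for English text; an assumption


def estimate_tokens(text):
    """Very rough token estimate; a real tokenizer will give different counts."""
    return len(text) // CHARS_PER_TOKEN


def fits_in_context(documents, reserved_for_output=8_000):
    """Return (fits, total_tokens) for sending all documents in one request.

    reserved_for_output is a hypothetical budget held back for the reply.
    """
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserved_for_output <= CONTEXT_WINDOW, total


docs = ["x" * 300_000, "y" * 400_000]   # stand-ins for two long files
fits, total = fits_in_context(docs)
print(fits, total)
```

If the check fails, the input still needs to be split or trimmed, even with the larger window.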

Training data and fine-tuning

Although Zhipu AI does not publish the full training dataset, GLM-4.6 builds on the foundation of GLM-4.5, which was pre-trained on trillions of diverse tokens and heavily fine-tuned on code, reasoning, and alignment tasks. Reinforcement learning improves coding accuracy, reasoning quality, and tool-use reliability. Given its improved planning capabilities, GLM-4.6 appears to include additional data for tool calls and agent workflows.

Tool calls and agent capabilities

GLM-4.6 is designed to serve as a control system for autonomous agents. It supports structured function calls and decides when to call tools based on context. Its internal reasoning improves argument validation, error rejection, and multi-tool planning. In coding-assistant evaluations, GLM-4.6 achieves a high tool-invocation success rate, approaching the performance of top proprietary models.
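On the application side, structured function calling usually means declaring tools as JSON schemas and then validating and executing whatever call the model emits. The sketch below assumes the OpenAI-style tool format; the `get_weather` tool, its parameters, and the `dispatch` helper are hypothetical names used only for illustration.

```python
import json

# Tool definition in the JSON-schema style used by OpenAI-compatible APIs.
# The tool name and parameters are hypothetical.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def get_weather(city):
    """Stand-in implementation; a real tool would call a weather service."""
    return {"city": city, "forecast": "sunny"}


REGISTRY = {"get_weather": (get_weather, WEATHER_TOOL)}


def dispatch(tool_call):
    """Validate and run one tool call shaped like an OpenAI-style message entry."""
    name = tool_call["function"]["name"]
    if name not in REGISTRY:
        return {"error": f"unknown tool: {name}"}
    func, spec = REGISTRY[name]
    try:
        args = json.loads(tool_call["function"]["arguments"])
    except json.JSONDecodeError:
        return {"error": "arguments are not valid JSON"}
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}
    missing = [p for p in spec["function"]["parameters"]["required"] if p not in args]
    if missing:
        return {"error": f"missing arguments: {missing}"}
    return func(**args)


# Example of what a model-issued call might look like.
call = {"function": {"name": "get_weather", "arguments": '{"city": "Paris"}'}}
print(dispatch(call))
```

Returning errors as data rather than raising lets the application pass them back to the model, which can then retry with corrected arguments.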

Efficiency and quantization

Although GLM-4.6 is large, its MoE architecture keeps the number of active parameters manageable. Public weights are available in BF16 and FP32, and community quantizations in 4- to 8-bit formats allow the model to run on more affordable GPUs. It is compatible with popular inference frameworks such as vLLM, SGLang, and LMDeploy, giving teams flexible deployment options.
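A quick back-of-the-envelope calculation shows why quantization matters at this scale. The sketch below estimates memory for the weights alone from the 355 billion parameter figure; it deliberately ignores the KV cache, activations, and quantization metadata, so actual requirements will be higher.

```python
TOTAL_PARAMS = 355e9   # total parameter count quoted above


def weight_memory_gb(bits_per_param, params=TOTAL_PARAMS):
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes).

    Ignores the KV cache, activations, and quantization metadata, so real
    requirements will be higher.
    """
    return params * bits_per_param / 8 / 1e9


for label, bits in [("FP32", 32), ("BF16", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label:>5}: ~{weight_memory_gb(bits):,.0f} GB")
```

Note that sparse routing reduces compute per token, not storage: all experts typically still have to be resident in memory, which is why lower-precision formats are what bring the model within reach of smaller GPU clusters.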

Benchmark performance

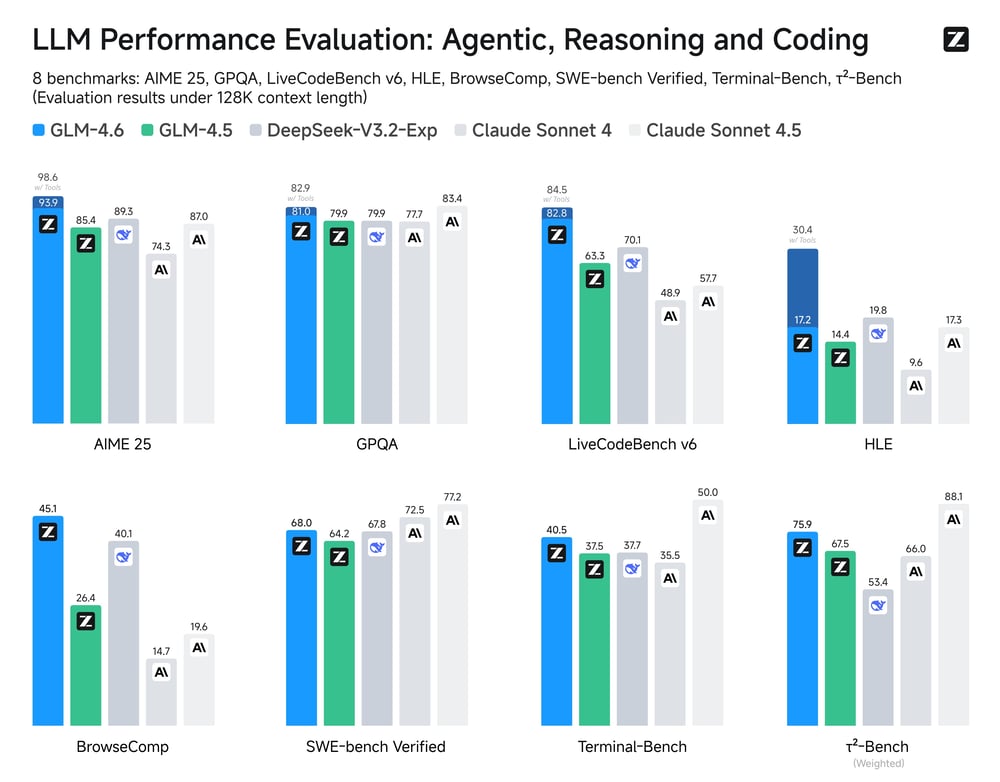

Zhipu AI evaluated GLM-4.6 on a variety of benchmarks covering reasoning, coding, and agent tasks. It shows consistent improvement over GLM-4.5 in most categories and competitive performance compared with high-end proprietary models such as Claude Sonnet 4.

In real-world coding evaluations, GLM-4.6 achieved results roughly comparable to proprietary models while using fewer tokens per task. It also shows gains in tool-augmented reasoning and multi-turn coding workflows, making it one of the most capable open models available today.

License and openness

GLM-4.6 is released under permissive licenses such as MIT and Apache, allowing unrestricted commercial use, self-hosting, and fine-tuning. Developers can download both the base and instruct versions to integrate into their own infrastructure. This openness contrasts with proprietary models like Claude and GPT, which are only available through paid APIs.

Access GLM-4.6 via API

GLM-4.6 is available on the Clarifai platform and can be accessed via API using OpenAI-compatible endpoints.

Step 1: Create a Clarifai account and get a Personal Access Token (PAT)

Sign up to generate a personal access token. You can also test GLM-4.6 by selecting the model and trying coding, reasoning, or agent prompts in the Clarifai Playground.

Step 2: Set up your environment
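One common way to set up the environment is to keep the PAT out of source code and read it from an environment variable. The following is a minimal sketch under stated assumptions: the variable name `CLARIFAI_PAT` is a convention chosen for this example, and the base URL is assumed to be Clarifai's OpenAI-compatible endpoint, so confirm both against the Clarifai documentation.

```python
import os

# Assumed values: the environment variable name is a convention chosen for
# this sketch, and the base URL is assumed to be Clarifai's OpenAI-compatible
# endpoint. Check the Clarifai documentation before relying on either.
PAT_ENV_VAR = "CLARIFAI_PAT"
BASE_URL = "https://api.clarifai.com/v2/ext/openai/v1"


def load_settings(environ=os.environ):
    """Read the access token from the environment and fail early if absent."""
    token = environ.get(PAT_ENV_VAR, "").strip()
    if not token:
        raise RuntimeError(f"Set {PAT_ENV_VAR} to your Clarifai personal access token")
    return {"api_key": token, "base_url": BASE_URL}


# Demonstration with an explicit mapping instead of the real environment.
settings = load_settings({"CLARIFAI_PAT": "example-token"})
print("Requests will go to", settings["base_url"])
```

In real use, call `load_settings()` with no arguments after exporting the variable in your shell.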

Step 3: Call GLM-4.6 via API
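Because the endpoint is OpenAI-compatible, a call is a standard chat-completions request. The sketch below uses only the Python standard library and rests on assumptions: the base URL is assumed to be Clarifai's OpenAI-compatible endpoint, `MODEL_ID` is a placeholder to be replaced with the identifier shown on the model's Clarifai page, and the PAT is assumed to be accepted as a bearer token.

```python
import json
import urllib.request

# Assumptions for this sketch: the base URL is Clarifai's OpenAI-compatible
# endpoint and MODEL_ID is a placeholder; copy the real identifier from the
# model's page on Clarifai.
BASE_URL = "https://api.clarifai.com/v2/ext/openai/v1"
MODEL_ID = "<GLM-4.6 model identifier from Clarifai>"


def build_chat_request(prompt, api_key, model=MODEL_ID, max_tokens=512):
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt, api_key):
    """Send the request and return the first choice's text (needs network access)."""
    with urllib.request.urlopen(build_chat_request(prompt, api_key), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Write a function that reverses a string.", "YOUR_PAT")
print(request.full_url)
```

Any OpenAI-compatible client library should be usable in the same way by pointing its base URL at the endpoint and passing the PAT as the API key.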

Step 4: Use TypeScript or JavaScript

You can also access GLM-4.6 via the API from other environments such as Node.js and cURL. Check out all the examples here.

Use cases for GLM-4.6

Advanced coding assistance

GLM-4.6 significantly improves code generation accuracy and efficiency. It generates high-quality code while using fewer tokens than GLM-4.5. In human evaluations, its coding ability approaches that of proprietary frontier models. This makes it suitable for full-stack development assistants, automated code reviews, bug-fixing agents, and repository-level analysis.

Agent workflows and tool orchestration

GLM-4.6 is built for tool-augmented reasoning. It can plan multi-step tasks, call external APIs, inspect results, and maintain state across interactions. This enables autonomous coding agents, research assistants, and complex workflow automation systems that rely on structured tool calls.
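The plan, call, inspect, and maintain-state cycle described above is typically implemented as a loop around the model. The sketch below shows the shape of such a loop with a scripted stand-in in place of GLM-4.6; the decision format (`{"tool": ...}` or `{"final": ...}`) and all function names are hypothetical and chosen for brevity.

```python
def run_agent(model_step, tools, task, max_steps=5):
    """Drive a plan/act/observe loop until the model returns a final answer.

    model_step: callable taking the message history and returning either
        {"tool": name, "args": {...}} or {"final": text}.
    tools: mapping from tool name to a Python callable.
    """
    history = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        decision = model_step(history)
        if "final" in decision:
            return decision["final"], history
        result = tools[decision["tool"]](**decision["args"])
        # Feed the observation back so the next step can build on it.
        history.append({"role": "tool", "name": decision["tool"], "content": str(result)})
    return None, history


def scripted_model(history):
    """Stand-in for GLM-4.6: calls one tool, then answers from its result."""
    observations = [m for m in history if m["role"] == "tool"]
    if not observations:
        return {"tool": "add", "args": {"a": 2, "b": 3}}
    return {"final": f"The sum is {observations[-1]['content']}."}


answer, trace = run_agent(scripted_model, {"add": lambda a, b: a + b}, "What is 2 + 3?")
print(answer)
```

The `max_steps` cap is a simple guard against a model that never produces a final answer; the history list is the state carried across steps.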

Long-context document analysis

With a 200,000-token window, the model can read and reason about entire books, legal documents, technical manuals, or hours of data. It supports compliance reviews, multi-document consolidation, long-text summarization, and codebase understanding.

Bilingual development and creative writing

The model is trained on both Chinese and English and performs well on bilingual tasks. It is useful for translation, localization, bilingual code documentation, and creative writing tasks that require a natural style and voice.

Enterprise-grade deployment and customization

Thanks to its open license and flexible MoE architecture, organizations can self-host GLM-4.6 on private clusters, fine-tune it on their own data, and integrate it with internal tools. Community quantizations also allow lighter-weight deployment on limited hardware. Clarifai provides a cloud-hosted alternative for teams that want API access without managing the infrastructure.

Conclusion

GLM-4.6 is a major milestone in open AI development. It combines a large-scale MoE architecture, a 200k-token context window, a hybrid reasoning mode, and native tool calls to deliver performance comparable to proprietary frontier models. It improves on GLM-4.5 across coding, reasoning, and tool-augmented tasks while remaining fully open and self-hostable.

Whether you are building autonomous coding agents, analyzing large document sets, or orchestrating complex multi-tool workflows, GLM-4.6 provides a flexible, high-performance foundation with no vendor lock-in.