In this article, you will learn how to identify, understand, and mitigate race conditions in multi-agent orchestration systems.

Topics covered include:

- What race conditions look like in a multi-agent environment.
- Architectural patterns for preventing shared-state races.
- Practical techniques such as idempotency, locking, and concurrency testing.

Let's get straight to the point.

Handling race conditions in multi-agent orchestration

Image by editor

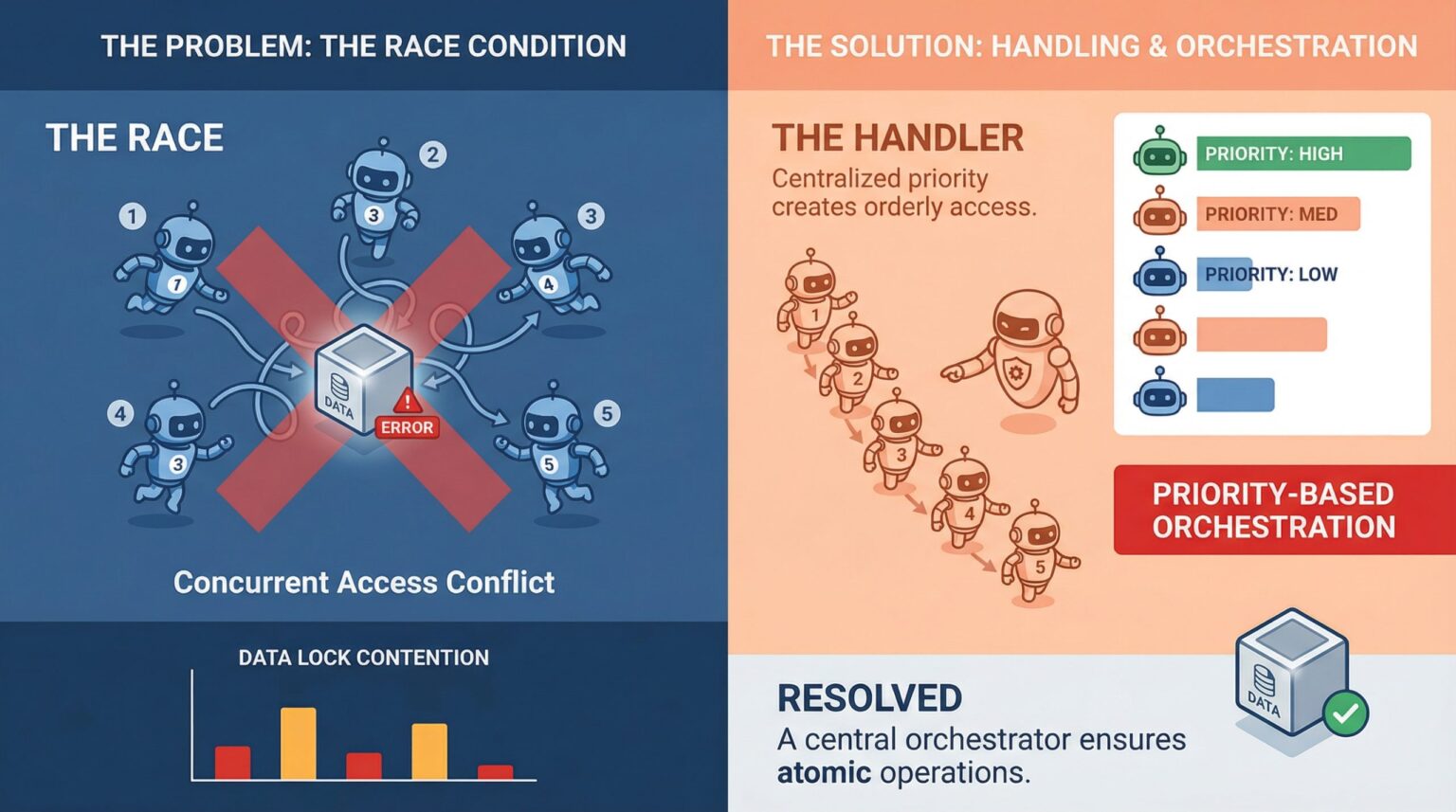

If you've ever seen two agents confidently write to the same resource at the same time and produce something completely meaningless, you already know what a race condition looks like. It is one of those bugs that doesn't show up in unit tests, works perfectly in staging, and then explodes in production during peak traffic hours.

In multi-agent systems where parallel execution is a requirement, race conditions are not edge cases. They are expected guests. Dealing with them is less about bolting on defenses and more about building systems that expect disruption by default.

What race conditions actually look like in multi-agent systems

A race condition occurs when two or more agents try to read, modify, or write shared state at the same time, and the final result depends on who gets there first. In a single-agent pipeline, this is manageable. A system with five agents running concurrently is a very different problem.

The challenge is that a race condition is not always an obvious crash. Sometimes it is silent. Agent A reads a document, agent B updates it 0.5 seconds later, and agent A writes back its stale version without an error being raised anywhere. The system looks fine. The data is compromised.

What makes this worse, especially in machine learning pipelines, is that agents often operate on mutable shared objects such as shared memory stores, vector databases, tool output caches, and simple task queues. Any of these can become a contention point when multiple agents start pulling on it at the same time.

Why multi-agent pipelines are especially vulnerable

Traditional concurrent programming has had tools for dealing with race conditions for decades, including threads, mutexes, semaphores, and atomic operations. Multi-agent large language model (LLM) systems are newer and are often built on top of asynchronous frameworks, message brokers, and orchestration layers that don't always offer fine-grained control over execution order.

There is also the issue of non-determinism. LLM agents don't always take the same amount of time to complete a task. One agent may finish in 200 ms while another takes 2 seconds, and the orchestrator must handle that gracefully. Otherwise, agents start stepping on each other, and you end up with corrupted state or write conflicts that the system silently accepts.

The agents' communication pattern matters as well. If agents share state through central objects or shared database rows rather than passing messages, heavy write contention is almost guaranteed. This is as much a design pattern issue as a concurrency issue, and fixing it usually starts at the architectural level before any code is touched.

Locking, queuing, and event-driven design

The most direct way to handle contention for shared resources is to use locks. Optimistic locking works well when conflicts are rare. Each agent reads a version tag along with the data, and if the version has changed by the time the write is attempted, the write fails and is retried. Pessimistic locking is more aggressive and reserves the resource before reading it. Both approaches have trade-offs, and which one is better depends on how often the agents actually collide.

Queuing is another reliable approach, especially for task assignment. Instead of multiple agents polling a shared task list directly, tasks are pushed into a queue and agents consume them one at a time. Systems like Redis Streams, RabbitMQ, or basic Postgres advisory locks can handle this well. The queue becomes a serialization point and takes contention out of the equation for certain access patterns.
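As a rough in-process illustration of that idea, the sketch below uses the standard library's `asyncio.Queue` in place of an external broker. The names `worker`, `run_queue_demo`, and the agent labels are made up for this example. Each `get()` hands a task to exactly one worker, so no two agents can claim the same task.

```python
import asyncio


async def worker(name, queue, claimed):
    """Consume tasks one at a time; each get() delivers a task to one worker only."""
    while True:
        task = await queue.get()
        try:
            await asyncio.sleep(0)          # stand-in for real agent work
            claimed.append((name, task))
        finally:
            queue.task_done()               # tell the queue this task is finished


async def run_queue_demo(num_tasks=20, num_workers=4):
    queue = asyncio.Queue()
    for task_id in range(num_tasks):
        queue.put_nowait(task_id)

    claimed = []
    workers = [
        asyncio.create_task(worker(f"agent-{n}", queue, claimed))
        for n in range(num_workers)
    ]
    await queue.join()                      # wait until every task is processed
    for w in workers:
        w.cancel()                          # workers loop forever, so stop them
    await asyncio.gather(*workers, return_exceptions=True)
    return claimed


claimed = asyncio.run(run_queue_demo())
print(len(claimed), "tasks processed, none claimed twice")
```

A distributed deployment would swap the in-memory queue for a broker, but the contract the agents rely on stays the same: one task, one consumer.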

Event-driven architectures go a step further. Agents react to events rather than reading from shared state. Agent A completes its work and publishes an event. Agent B listens for that event and reacts to it. This loosens the coupling and naturally narrows the window in which two agents can change the same thing at the same time.
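A minimal sketch of this pattern, assuming a toy in-process bus: `EventBus`, the `draft.completed` topic, and both agent functions are hypothetical names used only for illustration. A real system would typically publish through a message broker.

```python
import asyncio


class EventBus:
    """Hypothetical in-process event bus, for illustration only."""

    def __init__(self):
        self._subscribers = {}

    def subscribe(self, topic):
        # Each subscriber gets its own inbox, so nothing is shared between them.
        inbox = asyncio.Queue()
        self._subscribers.setdefault(topic, []).append(inbox)
        return inbox

    async def publish(self, topic, payload):
        for inbox in self._subscribers.get(topic, []):
            await inbox.put(payload)


async def agent_a(bus):
    summary = "draft v1"                               # Agent A owns this work
    await bus.publish("draft.completed", {"summary": summary})


async def agent_b(inbox, results):
    event = await inbox.get()                          # react to the event...
    results.append(event["summary"].upper())           # ...using only its payload


async def run_event_demo():
    bus = EventBus()
    inbox = bus.subscribe("draft.completed")
    results = []
    await asyncio.gather(agent_b(inbox, results), agent_a(bus))
    return results


event_results = asyncio.run(run_event_demo())
print(event_results)
```

Agent B never touches Agent A's state directly. It works from the payload carried by the event, which is what shrinks the overlap window.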

Idempotency is your best friend

Even with strong locking and queuing in place, things can still go wrong. The network drops, a timeout fires, and the agent retries the failed operation. If those retries are not idempotent, you get duplicate writes, double-processed tasks, or compounding errors that are hard to debug after the fact.

Idempotency means that performing the same operation multiple times produces the same result as performing it once. For agents, this often means attaching a unique operation ID to every write. If the operation has already been applied, the system recognizes the ID and skips the duplicate. It is a small design choice, but it has a big effect on reliability.
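A minimal sketch of operation-ID deduplication follows. `IdempotentLedger` and its `apply` method are hypothetical names, and the in-memory set stands in for whatever durable store (for example, a table with a unique constraint on the operation ID) a real system would use.

```python
import threading
import uuid


class IdempotentLedger:
    """Hypothetical store that applies each operation ID at most once."""

    def __init__(self):
        self.balance = 0
        self._applied = set()
        self._lock = threading.Lock()   # the check-and-record step must be atomic

    def apply(self, operation_id, amount):
        with self._lock:
            if operation_id in self._applied:
                return False            # duplicate retry: skip it
            self.balance += amount
            self._applied.add(operation_id)
            return True


ledger = IdempotentLedger()
op_id = str(uuid.uuid4())               # generated once, reused on every retry

first_attempt = ledger.apply(op_id, 10)
retry_attempt = ledger.apply(op_id, 10) # e.g. after a timeout on the first call
print(first_attempt, retry_attempt, ledger.balance)
```

The important detail is that the ID is created by the caller before the first attempt, so a retry carries the same ID rather than a fresh one.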

It is worth building idempotency in at the agent level from the start. Retrofitting it later is painful. Any agent that writes to a database, updates records, or triggers downstream workflows should implement some form of deduplication logic. This makes the whole system more resilient to real-world execution failures.

Test for race conditions before they test you

The hard part of race conditions is reproducing them. They are timing-dependent, which means they often appear only under load or in specific execution sequences that are difficult to recreate in a controlled test environment.

One useful approach is stress testing with intentional concurrency. Start multiple agents simultaneously against a shared resource and see what breaks. Tools like Locust, pytest-asyncio with concurrent tasks, or even a simple ThreadPoolExecutor can help you simulate the kind of overlapping executions that reveal race bugs in staging rather than in production.
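Here is a small sketch of such a stress harness built on `ThreadPoolExecutor`. The classes `UnsafeCounter` and `LockedCounter` and the function `stress` are illustrative names. The `time.sleep(0)` call is there only to invite a thread switch between the read and the write so the race is easier to hit; how many updates the unsafe version loses will vary from run to run.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class UnsafeCounter:
    def __init__(self):
        self.value = 0

    def increment(self):
        current = self.value          # read
        time.sleep(0)                 # invite a thread switch mid-update
        self.value = current + 1      # write (may overwrite another agent's update)


class LockedCounter:
    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        with self._lock:              # read-modify-write happens as one unit
            current = self.value
            time.sleep(0)
            self.value = current + 1


def stress(counter, workers=8, increments_per_worker=200):
    def agent():
        for _ in range(increments_per_worker):
            counter.increment()

    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(agent) for _ in range(workers)]
        for future in futures:
            future.result()           # re-raise any exception from a worker
    return counter.value


expected_total = 8 * 200
unsafe_total = stress(UnsafeCounter())
locked_total = stress(LockedCounter())
print(f"expected={expected_total} unsafe={unsafe_total} locked={locked_total}")
```

In a test suite, the assertion would target the invariant (`locked_total == expected_total`) rather than the exact number of lost updates in the unsafe case.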

Property-based testing is underused in this context. If you can define invariants that must always hold regardless of execution order, you can run randomized tests that try to violate them. It won't catch everything, but it will surface many subtle consistency issues that a deterministic test would miss entirely.
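The sketch below illustrates the idea with only the standard library's `random` module rather than a dedicated property-based testing library. It models each agent as two steps (read, then write), shuffles the steps into random interleavings, and checks the invariant "the final counter equals the number of agents". The functions `run_schedule` and `find_violation` are hypothetical helpers written for this example.

```python
import random


def run_schedule(schedule):
    """Simulate naive read-then-write increments in the given step order.

    Each agent ID appears twice in the schedule: its first appearance is the
    read step, its second appearance is the write step.
    """
    counter = 0
    local_copy = {}
    for agent in schedule:
        if agent not in local_copy:
            local_copy[agent] = counter          # read
        else:
            counter = local_copy[agent] + 1      # write back a possibly stale value
    return counter


def find_violation(num_agents=3, trials=200, seed=0):
    """Search random interleavings for one that breaks the invariant."""
    rng = random.Random(seed)
    for _ in range(trials):
        schedule = [agent for agent in range(num_agents) for _ in range(2)]
        rng.shuffle(schedule)
        if run_schedule(schedule) != num_agents:
            return schedule                      # counterexample found
    return None


counterexample = find_violation()
print("interleaving that loses an update:", counterexample)
```

A purpose-built library adds input shrinking and better reporting, but the core loop is the same: generate orderings, run them, and check the invariant.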

A concrete race condition example

An example helps make this concrete. Consider a simple shared counter that multiple agents update. It could represent something real, such as how many times a document has been processed or how many tasks have been completed.

Below is a minimal version of the problem in pseudocode.

    # shared state
    counter = 0

    # agent task
    def increment_counter():
        global counter
        value = counter      # step 1: read
        value = value + 1    # step 2: modify
        counter = value      # step 3: write

Now imagine two agents doing this at the same time.

    Agent A reads counter = 0
    Agent B reads counter = 0
    Agent A writes counter = 1
    Agent B writes counter = 1

You expected the final value to be 2, but it is 1. There are no errors or warnings, just incorrect state. This is a race condition in its simplest form.
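This interleaving can be reproduced deterministically with `asyncio`, as in the sketch below. It assumes each agent yields control between its read and its write, which is what happens whenever an agent awaits an LLM call or other I/O in the middle of an update. The `state` dictionary and `run_two_agents` are illustrative names.

```python
import asyncio


async def increment_counter(state):
    value = state["counter"]       # step 1: read
    await asyncio.sleep(0)         # yield to the scheduler, as an I/O call would
    value = value + 1              # step 2: modify
    state["counter"] = value       # step 3: write


async def run_two_agents():
    state = {"counter": 0}
    # Both agents read 0 before either one writes.
    await asyncio.gather(increment_counter(state), increment_counter(state))
    return state["counter"]


final_value = asyncio.run(run_two_agents())
print(final_value)  # 1, not 2
```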

There are several ways to mitigate this, depending on your system design.

Option 1: Lock the critical section

The most direct fix is to allow only one agent at a time to modify the shared resource. In pseudocode:

    lock.acquire()
    value = counter
    value = value + 1
    counter = value
    lock.release()

This guarantees correctness, but at the cost of reduced parallelism. If many agents contend for the same lock, throughput can drop quickly.
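A runnable version of the same idea, sketched with `asyncio.Lock`. The `state` dictionary and `run_locked` are illustrative names. Using `async with` instead of explicit acquire and release calls means the lock is released even if the body raises.

```python
import asyncio


async def increment_counter(state, lock):
    async with lock:                   # only one agent inside at a time
        value = state["counter"]
        await asyncio.sleep(0)         # other agents may run, but cannot enter
        state["counter"] = value + 1


async def run_locked(num_agents=5):
    state = {"counter": 0}
    lock = asyncio.Lock()
    await asyncio.gather(
        *(increment_counter(state, lock) for _ in range(num_agents))
    )
    return state["counter"]


locked_result = asyncio.run(run_locked())
print(locked_result)  # 5
```

Note that an `asyncio.Lock` only protects coroutines in a single process. Agents running in separate processes or on separate machines need a lock that lives in shared infrastructure.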

Option 2: Atomic operations

Atomic updates are a cleaner solution if your infrastructure supports them. Instead of splitting the operation into separate read, modify, and write steps, you delegate the whole operation to the underlying system.

    counter = atomic_increment(counter)

Databases, key-value stores, and some in-memory systems provide this out of the box. It removes the race entirely by making the update indivisible.
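As one possible illustration, the sketch below pushes the increment into a single SQL `UPDATE` statement using the standard library's `sqlite3` module. The `counters` table, the `processed` row, and this `atomic_increment` helper are assumptions made for the example. The point is that the application never reads the value and writes it back in separate steps.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE counters (name TEXT PRIMARY KEY, value INTEGER NOT NULL)"
)
conn.execute("INSERT INTO counters (name, value) VALUES (?, ?)", ("processed", 0))
conn.commit()


def atomic_increment(conn, name):
    # The read-modify-write happens inside the database as one statement.
    with conn:  # commits on success, rolls back on exception
        conn.execute(
            "UPDATE counters SET value = value + 1 WHERE name = ?", (name,)
        )


for _ in range(2):                     # two "agents" incrementing in turn
    atomic_increment(conn, "processed")

atomic_value = conn.execute(
    "SELECT value FROM counters WHERE name = ?", ("processed",)
).fetchone()[0]
print(atomic_value)  # 2
```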

Option 3: Idempotent writes with versioning

Another approach is to use versioning to detect and reject conflicting updates.

    # read with version
    value, version = read_counter()

    # attempt write
    success = write_counter(value + 1, expected_version=version)

    if not success:
        retry()

This is effectively optimistic locking. If another agent updates the counter first, the write fails and is retried against the new state.
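A self-contained sketch of that version check follows. `VersionedCounter` and `increment_with_retry` are hypothetical names, and the in-memory store stands in for a database row with a version column. The internal lock guards only the compare-and-set step itself, not the whole read-modify-write cycle.

```python
import threading


class VersionedCounter:
    """Hypothetical in-memory store with a version-checked write."""

    def __init__(self):
        self._value = 0
        self._version = 0
        self._lock = threading.Lock()   # makes the compare-and-set indivisible

    def read(self):
        with self._lock:
            return self._value, self._version

    def write(self, new_value, expected_version):
        with self._lock:
            if self._version != expected_version:
                return False            # someone else wrote first: reject
            self._value = new_value
            self._version += 1
            return True


def increment_with_retry(store, max_attempts=10):
    for _ in range(max_attempts):
        value, version = store.read()   # always re-read before retrying
        if store.write(value + 1, expected_version=version):
            return True
    return False                        # give up after repeated conflicts


store = VersionedCounter()
value, version = store.read()                          # agent A and B both read
agent_b_ok = store.write(value + 1, expected_version=version)
agent_a_ok = store.write(value + 1, expected_version=version)  # stale version
agent_a_retry_ok = increment_with_retry(store)         # re-reads, then succeeds
print(agent_b_ok, agent_a_ok, agent_a_retry_ok, store.read())
```

Bounding the number of attempts is a design choice: under heavy contention an unbounded retry loop can spin indefinitely, so it is usually better to fail and surface the conflict.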

In real multi-agent systems, the "counter" is rarely this simple. It could be a document, a memory store, or a workflow state object. But the pattern is the same. Splitting a read and a write into separate steps creates a window in which another agent can interfere.

Closing that window through locks, atomic operations, or conflict detection is the core of handling real race conditions.

Final thoughts

Race conditions in multi-agent systems are manageable, but they require intentional design. The systems that handle them well aren't just lucky with their timing. They assume that concurrency will cause problems and plan accordingly.

Idempotent operations, event-driven communication, sensible locking, and proper queue management are not over-engineering. They are the baseline for pipelines where agents are expected to work in parallel without interfering with one another. Once you get these fundamentals right, the rest becomes much more predictable.