For years, the way large language models handle inference has been effectively locked inside a box. The high-bandwidth RDMA networks that power modern LLM serving confine both prefill and decode to the same datacenter and, in some cases, the same rack. A team of researchers from Moonshot AI and Tsinghua University argues that this constraint is about to be lifted, and that the right architecture can already take advantage of the change.

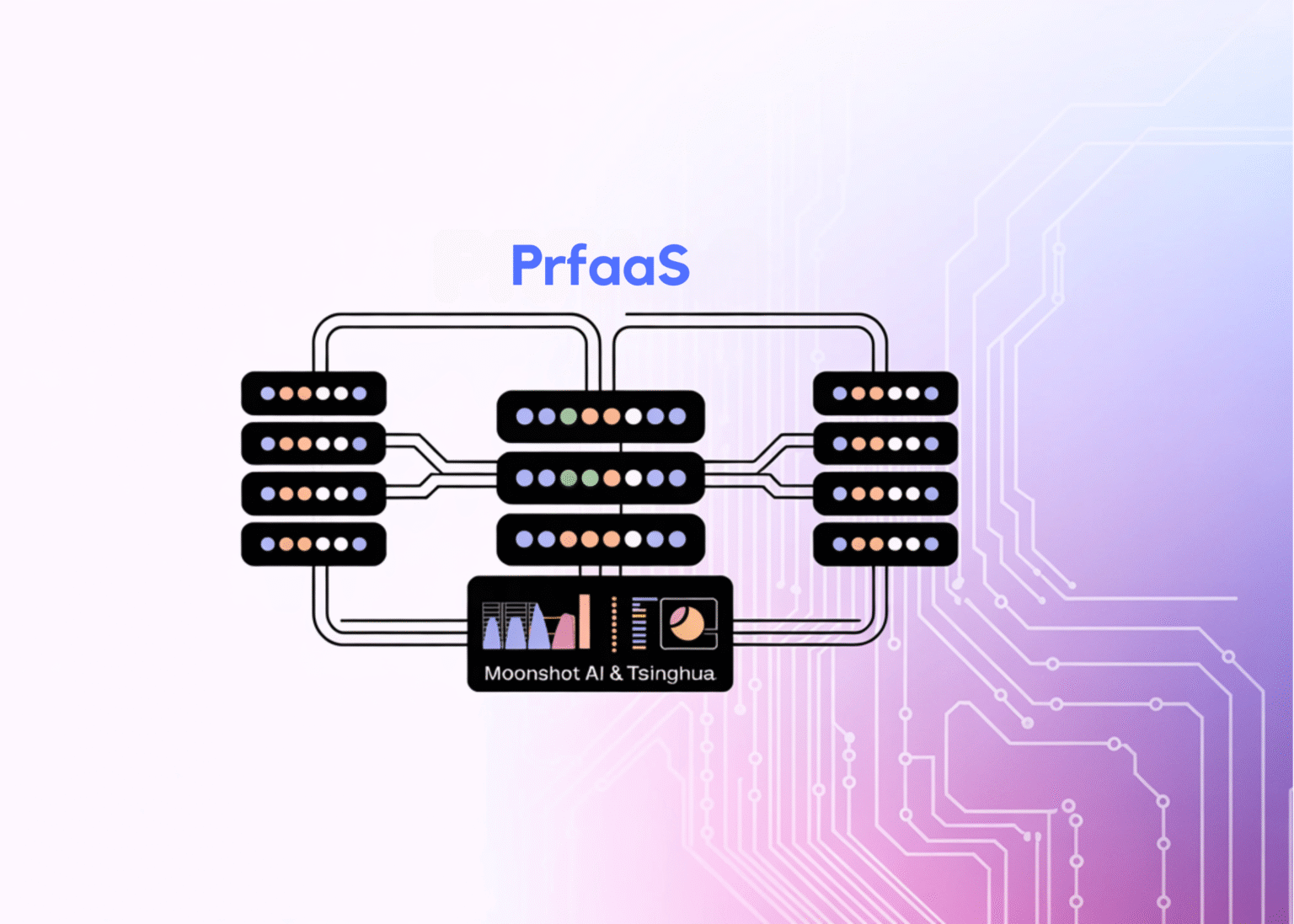

The research team introduces Prefill-as-a-Service (PrfaaS), a cross-datacenter serving architecture that selectively offloads long-context prefill to standalone, compute-dense prefill clusters and forwards the resulting KVCache over commodity Ethernet to local PD clusters for decoding. In a case study using an internal 1T-parameter hybrid model, PrfaaS achieved serving throughput 54% higher than a homogeneous PD baseline and 32% higher than a naive heterogeneous setup, while consuming only a fraction of the available inter-datacenter bandwidth. The researchers note that the throughput improvement is about 15% when compared at equal hardware cost, which reflects that part of the full 54% gain comes from pairing compute-dense H200 GPUs for prefill with H20 GPUs for decode.

Why the current architecture hit a wall

To understand what PrfaaS solves, it helps to understand why LLM serving is split into two phases in the first place. Prefill is the compute-intensive step in which the model processes all input tokens and generates a KVCache. Decode is the step in which the model generates output tokens one at a time, which is memory-bandwidth-intensive. Prefill-decode (PD) disaggregation places these two phases on different hardware, improving utilization and allowing each phase to be optimized independently.

The problem is that separating prefill from decode creates a transport problem. Once prefill runs on one set of machines and decode on another, the KVCache produced by prefill must be transferred to the decode side before output generation can begin. In a conventional dense-attention model (one that uses Grouped Query Attention, GQA), this KVCache is huge. The research team benchmarked a representative dense model with GQA, MiniMax-M2.5, which produces roughly 60 Gbps of KVCache for a 32K-token request on a single 8×H200 instance. Moving that much data without stalling compute requires RDMA-class interconnects, so conventional PD disaggregation is tightly bound to a single datacenter-scale network fabric. Placing prefill and decode in separate clusters, let alone separate datacenters, has simply not been feasible.

Hybrid attention changes the math

What makes PrfaaS timely is an architectural shift happening at the model level. A growing class of models, including Kimi Linear, MiMo-V2-Flash, Qwen3.5-397B, and Ring-2.5-1T, uses hybrid attention stacks that interleave a small number of full-attention layers with a larger number of linear-complexity or bounded-state layers such as Kimi Delta Attention (KDA), Multi-head Latent Attention (MLA), and Sliding Window Attention (SWA). In these architectures, only the full-attention layers generate a KVCache that scales with sequence length. The linear-complexity layers maintain fixed-size recurrent states whose footprint is negligible at long context.

The KV throughput numbers (defined as KVCache size divided by prefill latency) speak for themselves. At 32K tokens, MiMo-V2-Flash generates KVCache at 4.66 Gbps versus 59.93 Gbps for MiniMax-M2.5, a 13x reduction. Qwen3.5-397B reaches 8.25 Gbps, about a quarter of Qwen3-235B's 33.35 Gbps. For Ring-2.5-1T specifically, the paper decomposes the savings: MLA contributes roughly 4.5x compression over GQA, and the 7:1 hybrid ratio contributes a further roughly 8x reduction, for an overall KV memory saving of roughly 36x. For the internal 1T model used in the case study, KV throughput at 32K tokens is just 3.19 Gbps, a level that modern inter-datacenter Ethernet links can realistically sustain.
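
The arithmetic behind these comparisons is simple enough to check by hand. The sketch below is illustrative only: the function and dictionary names are made up for this example, the Gbps values are the ones quoted above, and the multiplicative combination of the two Ring-2.5-1T factors is the decomposition the article attributes to the paper.

```python
def kv_throughput_gbps(kv_cache_bytes: float, prefill_latency_s: float) -> float:
    """KV throughput = KVCache size / prefill latency, in gigabits per second."""
    return kv_cache_bytes * 8 / prefill_latency_s / 1e9


# KV throughput at 32K tokens, as quoted in the article (Gbps).
KV_GBPS_AT_32K = {
    "MiniMax-M2.5": 59.93,   # dense, GQA
    "Qwen3-235B": 33.35,     # dense
    "Qwen3.5-397B": 8.25,    # hybrid
    "MiMo-V2-Flash": 4.66,   # hybrid
    "internal-1T": 3.19,     # hybrid, case-study model
}


def reduction_factor(dense: str, hybrid: str) -> float:
    """How many times less KV traffic the hybrid model generates."""
    return KV_GBPS_AT_32K[dense] / KV_GBPS_AT_32K[hybrid]


# Ring-2.5-1T: the two quoted factors multiply.
MLA_COMPRESSION = 4.5      # MLA vs. GQA
HYBRID_RATIO_FACTOR = 8.0  # from the 7:1 hybrid layer ratio
ring_total_savings = MLA_COMPRESSION * HYBRID_RATIO_FACTOR

if __name__ == "__main__":
    print(f"MiniMax-M2.5 vs MiMo-V2-Flash: {reduction_factor('MiniMax-M2.5', 'MiMo-V2-Flash'):.1f}x")
    print(f"Qwen3-235B vs Qwen3.5-397B:   {reduction_factor('Qwen3-235B', 'Qwen3.5-397B'):.1f}x")
    print(f"Ring-2.5-1T combined savings: {ring_total_savings:.0f}x")
```

The first ratio comes out just under 13 and the second just over 4, which is where the "13x" and "a quarter" figures come from.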

But the research team is careful to draw a distinction that matters for AI developers building real-world systems: a smaller KVCache is necessary, but not sufficient, to make cross-datacenter PD disaggregation practical. Real-world workloads are bursty, request lengths are skewed, prefix caches are unevenly distributed across nodes, and inter-cluster bandwidth fluctuates. A naive design that routes every prefill to a remote cluster still ends up with congestion and unstable queues.

What PrfaaS actually does

The PrfaaS-PD architecture is built on three subsystems: compute, network, and storage. The compute subsystem divides clusters into two types: local PD clusters that handle end-to-end inference for short requests, and PrfaaS clusters with high-compute-throughput accelerators dedicated to long-context prefill. The network subsystem uses intra-cluster RDMA for fast local transfers and commodity Ethernet for inter-cluster KVCache transfers. The storage subsystem builds a distributed hybrid prefix cache pool that manages linear-attention recurrent states (request-level, fixed-size, exact match only) and full-attention KVCache blocks (block-level, growing linearly with input length, supporting partial prefix matching) as separate groups backed by a unified block pool.
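
To make the two matching rules concrete, here is a toy, single-process sketch of such a cache. It is not the paper's implementation: the class name, the block size, and the use of token-prefix tuples as keys are all assumptions chosen for readability, and a real pool would be distributed and would store actual tensors.

```python
BLOCK_TOKENS = 4  # hypothetical block size, kept tiny for illustration


class HybridPrefixCache:
    """Toy cache with two groups: block-level KV and request-level recurrent state."""

    def __init__(self) -> None:
        self.kv_blocks: set[tuple[int, ...]] = set()              # full-attention KV blocks
        self.recurrent_states: dict[tuple[int, ...], str] = {}    # linear-attention states

    def insert(self, tokens: list[int]) -> None:
        # One KV entry per complete block, keyed by the prefix ending at that block.
        for end in range(BLOCK_TOKENS, len(tokens) + 1, BLOCK_TOKENS):
            self.kv_blocks.add(tuple(tokens[:end]))
        # One fixed-size recurrent state for the whole request (placeholder value).
        self.recurrent_states[tuple(tokens)] = "recurrent-state"

    def match_kv_prefix(self, tokens: list[int]) -> int:
        """Partial prefix match: number of leading tokens covered by cached blocks."""
        matched = 0
        for end in range(BLOCK_TOKENS, len(tokens) + 1, BLOCK_TOKENS):
            if tuple(tokens[:end]) not in self.kv_blocks:
                break
            matched = end
        return matched

    def has_recurrent_state(self, tokens: list[int]) -> bool:
        """Exact match only: the state is valid for this exact token sequence."""
        return tuple(tokens) in self.recurrent_states


cache = HybridPrefixCache()
cache.insert(list(range(10)))
shared_prefix_request = list(range(8)) + [99, 98]
```

In this sketch, `shared_prefix_request` reuses two KV blocks from the earlier request but finds no recurrent state, which illustrates why the two kinds of cached data are tracked separately.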

The primary routing mechanism is length-based threshold routing. Let l denote a request's incremental prefill length after subtracting any cached prefix, and let t denote the routing threshold. If l > t, the request goes to the PrfaaS cluster and its KVCache is sent over Ethernet to a decode node. If l ≤ t, it stays on the local PD path. In the case study, the optimal threshold is t = 19.4K tokens, which routes roughly 50% of all requests (the longer ones) to the PrfaaS cluster.
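
The routing rule above can be sketched in a few lines. The `Request` type, field names, and route labels are hypothetical; only the comparison l > t and the 19.4K-token threshold come from the article.

```python
from dataclasses import dataclass

THRESHOLD_TOKENS = 19_400  # t: optimal threshold reported for the case study


@dataclass
class Request:
    prompt_tokens: int              # total input length
    cached_prefix_tokens: int = 0   # tokens already covered by a prefix cache hit


def incremental_prefill_length(req: Request) -> int:
    """l: tokens that still need prefill after subtracting the cached prefix."""
    return max(0, req.prompt_tokens - req.cached_prefix_tokens)


def route(req: Request, threshold: int = THRESHOLD_TOKENS) -> str:
    """Send long incremental prefills to PrfaaS; keep the rest on the local PD path."""
    if incremental_prefill_length(req) > threshold:
        return "prfaas"
    return "local_pd"
```

Note that a long prompt with a large cache hit can still stay local: `route(Request(32_000, cached_prefix_tokens=16_000))` returns `"local_pd"` because only 16K tokens remain to be prefilled.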

In practice, low KV throughput alone is not enough to make the Ethernet path reliable. The research team specifies three concrete transport mechanisms: layer-wise prefill pipelining to overlap KVCache generation with transmission, multi-connection TCP transport to make full use of the available bandwidth, and congestion monitoring integrated with the scheduler to detect loss and retransmission signals early and prevent congestion from building up.
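
The benefit of layer-wise pipelining can be seen with a simple timing model. The sketch below is an idealized illustration, not the paper's transport code: it assumes a single link that sends one layer's KVCache at a time, and the per-layer compute and transfer times are made-up numbers.

```python
def serial_time(compute_s: list[float], transfer_s: list[float]) -> float:
    """No overlap: finish all prefill compute, then ship the whole KVCache."""
    return sum(compute_s) + sum(transfer_s)


def pipelined_time(compute_s: list[float], transfer_s: list[float]) -> float:
    """Layer-wise overlap: each layer's KVCache is sent as soon as it is computed."""
    compute_done = 0.0  # time at which the current layer finishes computing
    link_free = 0.0     # time at which the link finishes its last transfer
    for layer_compute, layer_transfer in zip(compute_s, transfer_s):
        compute_done += layer_compute
        # A transfer starts once the layer is computed and the link is idle.
        link_free = max(link_free, compute_done) + layer_transfer
    return link_free


# Hypothetical per-layer timings in seconds, for illustration only.
compute = [1.0, 1.0, 1.0, 1.0]
transfer = [0.5, 0.5, 0.5, 0.5]
```

With these numbers the serial schedule takes 6.0 s and the pipelined one 4.5 s: as long as each transfer is shorter than the next layer's compute, only the final layer's transfer remains exposed.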

On top of this, the research team introduces a dual-timescale scheduler. On short timescales, it monitors PrfaaS egress utilization and queue depth and adjusts routing as the link approaches its bandwidth limit. It also handles cache-affine routing: when bandwidth is scarce, each cluster's prefix cache is evaluated independently; when bandwidth is plentiful, the scheduler considers the best cached prefix across all clusters and performs an inter-cluster cache transfer if that reduces redundant computation. On longer timescales, as traffic patterns shift, the scheduler rebalances the number of prefill and decode nodes in the local PD cluster to keep the system near its throughput-optimal operating point.
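
The article does not spell out the short-timescale control rule, so the following is only one plausible shape for it, with every parameter invented for illustration: raise the routing threshold (sending fewer requests to PrfaaS) when the link or queue is under pressure, and relax it back toward a baseline when there is headroom.

```python
def adjust_threshold(
    threshold: float,
    egress_utilization: float,   # fraction of inter-cluster bandwidth in use, 0..1
    queue_depth: int,            # requests waiting at the PrfaaS cluster
    *,
    high_utilization: float = 0.8,  # hypothetical pressure watermark
    low_utilization: float = 0.5,   # hypothetical headroom watermark
    max_queue_depth: int = 32,      # hypothetical queue limit
    step: float = 1.1,              # hypothetical multiplicative step
    baseline: float = 19_400,       # case-study threshold used as the floor here
) -> float:
    """Return the routing threshold to use for the next short-timescale interval."""
    under_pressure = (
        egress_utilization >= high_utilization or queue_depth >= max_queue_depth
    )
    if under_pressure:
        # Route fewer requests remotely by raising the length threshold.
        return threshold * step
    if egress_utilization <= low_utilization:
        # Headroom available: drift back toward the baseline threshold.
        return max(baseline, threshold / step)
    return threshold
```

A hysteresis band between the two watermarks keeps the threshold from oscillating on every measurement; whether the actual system uses a rule like this is not stated in the source.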

The numbers

In the case study, a PrfaaS cluster of 32 H200 GPUs is paired with a local PD cluster of 64 H20 GPUs, connected by a VPC network that provides roughly 100 Gbps of inter-cluster bandwidth. The aggregate egress load of the PrfaaS cluster in the optimal configuration is roughly 13 Gbps, only 13% of the available Ethernet capacity. The paper notes that the PrfaaS cluster therefore remains compute-bound, with ample bandwidth headroom. The study also extrapolates to larger deployments: even at the scale of a 10,000-GPU datacenter, the total egress bandwidth required for KVCache transfers is only about 1.8 Tbps, well within the capacity of modern inter-datacenter links.

Compared with the homogeneous baseline, mean time to first token (TTFT) dropped by 50% and P90 TTFT dropped by 64%. A naive heterogeneous configuration, with all prefill on H200 and all decode on H20 but no routing or scheduling logic, achieves only 1.16x the throughput of the homogeneous baseline, compared with 1.54x for the full PrfaaS-PD system. The gap between 1.16x and 1.54x isolates the contribution of the scheduling layer and shows that it accounts for most of the actual gain.
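
A quick calculation shows how these multipliers relate to the percentages quoted earlier. This is a back-of-the-envelope sketch: the names are made up, and attributing the gain additively between hardware and scheduling is a simplifying assumption rather than something the paper is quoted as doing.

```python
# Throughput relative to the homogeneous PD baseline, as quoted in the article.
HOMOGENEOUS = 1.00
NAIVE_HETEROGENEOUS = 1.16
PRFAAS_PD = 1.54


def relative_gain(new: float, old: float) -> float:
    """Fractional throughput improvement of `new` over `old`."""
    return new / old - 1.0


gain_over_homogeneous = relative_gain(PRFAAS_PD, HOMOGENEOUS)
gain_over_naive = relative_gain(PRFAAS_PD, NAIVE_HETEROGENEOUS)

# Additive attribution (an assumption): share of the total gain that appears
# only once routing and scheduling are added on top of heterogeneous hardware.
scheduling_share = (PRFAAS_PD - NAIVE_HETEROGENEOUS) / (PRFAAS_PD - HOMOGENEOUS)

if __name__ == "__main__":
    print(f"vs homogeneous:   +{gain_over_homogeneous:.0%}")
    print(f"vs naive hetero:  +{gain_over_naive:.1%}")
    print(f"scheduling share: {scheduling_share:.0%}")
```

The gain over the naive heterogeneous setup lands between 32% and 33%, consistent with the 32% figure given the rounding of the multipliers, and roughly 70% of the total gain is attributable to the scheduling layer under this additive view.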

The researchers position PrfaaS as a design that is viable today for hybrid-architecture models rather than a future concept, and argue that the case for cross-datacenter PD disaggregation will only strengthen as context windows grow, KVCache compression techniques mature, and phase-specialized hardware, such as NVIDIA's Rubin CPX for prefill and LPU-style chips for decode, becomes more widely available.
