How powerful is AI? Powerful enough that AI giant Anthropic announced earlier this month that it will make its newest AI model, Claude Mythos Preview, available only to a limited number of companies, at least for now, due to security concerns.

According to Anthropic, Claude Mythos Preview is designed for general use, but during testing the company found it to be highly effective at identifying security vulnerabilities in all types of software, potentially posing serious security risks.

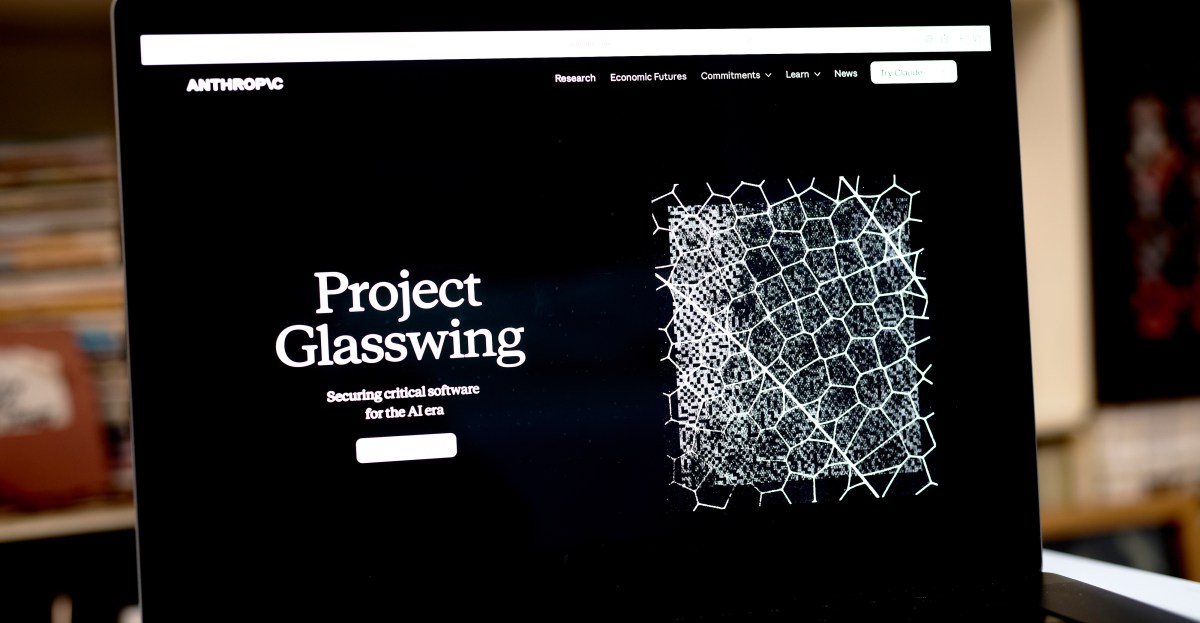

So far, Anthropic has shared its Mythos Preview model with a handful of large technology companies and banks through a program called Project Glasswing. The program is intended to give them an opportunity to patch existing security vulnerabilities and pre-empt potential hacking attempts that the model can identify.

To better understand what Claude Mythos Preview represents and the potential threat it poses to online security, Today, Explained co-host Sean Rameswaram spoke with Hayden Field, senior AI reporter at The Verge.

Below are excerpts of their conversation, edited for length and clarity. Listen to the full episode on Apple Podcasts, Pandora, Spotify, and wherever you get your podcasts.

Mythos is [Anthropic’s] new AI model, designed to be a general-purpose AI model, just like any other AI model. But what they realized while working on it was that it had special skills they didn't really anticipate. It was very good at cybersecurity. It discovered high-stakes vulnerabilities in virtually every operating system.

If you use it as a hacker, it's pretty bad. When you create a blueprint, a list of all the big gaps, security problems, and vulnerabilities in all these very high-profile systems, you end up creating a list of everything that can be done to bring these systems down or exploit their data.

They realized it was better not to release this to the public because it could fall into the wrong hands. Instead, they've selectively released it to a small number of organizations that are responsible for critical infrastructure so that they can fill in the gaps in their systems.

You've probably heard of many of the companies that are currently implementing and using Claude Mythos. In addition to Nvidia, JPMorgan Chase, and Google, there appear to be dozens of companies that build or maintain critical software infrastructure. How does it actually work?

They built it as a general-purpose model, so it probably works like any other model in terms of using it and prompting it to flag all the vulnerabilities in your system.

Maybe you're looking at a specific niche part of the browser that you think might be vulnerable in Google Chrome. Basically, you tell the model to flag all these very high-profile security gaps to you, and then you take that and fix them yourself.

Hackers would actually use this in the same way. If it falls into the wrong hands, they'd say, “Yeah, tell me all the vulnerabilities here.” And they'd be trying to exploit it and use it for something heinous. So basically, it comes down to who's driving this system and what their motivations are.

It's as simple as saying, “Hey, Claude, tell me how this banking system is going to be vulnerable.” And Claude thinks about it a little bit, and it spits out a bunch of answers.

And do we know that the Googles and Nvidias of the world are actually using this technology?

Yes. One of the reasons Anthropic released this was because they wanted people to accurately report how Mythos worked and what they'd done to close any vulnerabilities or gaps in their systems. It's about information sharing.

They're letting these companies use it to test how well it works in filling all of these security gaps, and then they have to report back to Anthropic on how it worked.

How does Anthropic choose who to share this technology with?

I actually asked them that. They're basically looking for cyber defenders and companies that a lot of people rely on, where they think that if those companies get hacked in any way, shape, or form, it'll be a big problem downstream.

JPMorgan Chase is a good example. Anthropic also offers this technology to governments.

Do Anthropic’s competitors have similar tools? Are they perhaps developing similar tools?

OpenAI appears to be developing similar tools. Anthropic itself has said it doesn't think it will stay in the lead for too long. They believe labs around the world could release this technology within the next three, six, or 12 months.

It sounds like it will be released within the next year. That's why they wanted to release Mythos now. That way, when similar types of technology become available to the public, perhaps in a few months, businesses and banks can get ahead of any potential hacks.

If this is so dangerous and there are so many potential risks, is anyone talking about not releasing a tool like this, shutting it down, and keeping it internal?

That's a really great question. I'm really glad you asked, because not many people ask whether AI systems should actually be released or used for specific purposes. Currently, we're seeing a lot of one-size-fits-all, throw-everything-at-it kind of integration. And in many cases, AI isn’t the answer to problems.

But on this, people tend to agree that it's what's needed right now. AI is already out there, helping cyber attackers actually enhance their attacks. And we've seen that trend intensify over the past year. Basically, people seem to agree that we need AI to fight AI cyberattacks.

This is like a medieval castle: war is coming, so you add extra stones to build the castle walls higher. That's how I feel when I talk to experts about this. They know it's coming. The message is: “Try to strengthen your defenses now so that you can be best prepared.”