As more companies quickly begin using gen AI, it is important to avoid a big mistake that can undermine its effectiveness: skipping proper onboarding. Companies spend time and money training new employees to succeed, but when they deploy large language model (LLM) helpers, many treat them like simple tools that need no introduction.

This is more than a waste of resources. It is risky. Research shows that AI moved quickly from testing to real-world use in 2024-2025, with nearly one-third of companies reporting sharp increases in usage and acceptance over the previous year.

Stochastic systems require governance, not wishful thinking

Unlike traditional software, generative AI is probabilistic and adaptive. It learns from interactions, can drift as data and usage change, and operates in the gray area between automation and agency. Treating it like static software ignores reality. Without monitoring and updates, models degrade and produce incorrect outputs, a phenomenon commonly known as model drift. Gen AI also has no built-in organizational intelligence. A model trained on internet data may write Shakespearean sonnets, but it won't know your escalation paths or compliance constraints unless you teach it. Regulators and standards bodies have begun to push for guidance precisely because these systems behave dynamically and can hallucinate, mislead, or leak data if left unchecked.

The real cost of skipping onboarding

When LLMs hallucinate, misread tone, expose sensitive information, or amplify bias, the cost is tangible.

Misinformation and liability: A Canadian tribunal held Air Canada liable after a chatbot on its website gave a passenger incorrect policy information. The ruling made clear that companies remain responsible for what their AI agents say.

Embarrassing hallucinations: In 2025, a syndicated “summer reading list” published by the Chicago Sun-Times and Philadelphia Inquirer recommended books that did not exist. The writer had used AI without proper verification, prompting retractions and a termination.

Bias at scale: The first AI discrimination settlement by the Equal Employment Opportunity Commission (EEOC) involved a hiring algorithm that automatically rejected older applicants, highlighting how unmonitored systems can amplify bias and create legal risk.

Data leakage: Samsung temporarily banned public gen AI tools on corporate devices after an employee pasted confidential code into ChatGPT. It is a mistake that better policies and training can prevent.

The message is simple. Un-onboarded AI and ungoverned usage expose you to legal, security, and reputational risk.

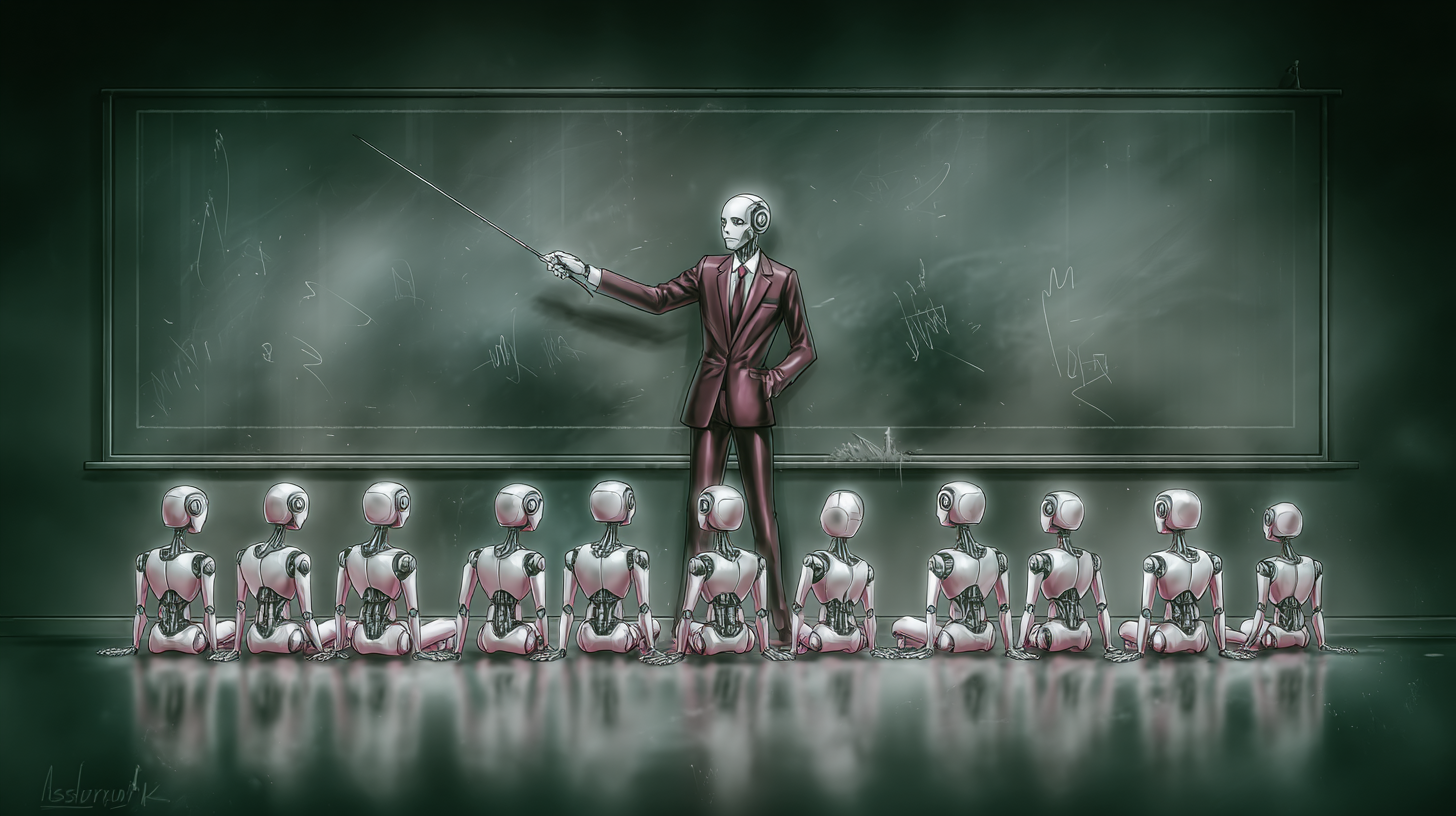

Treat AI agents like new hires

Companies need to onboard AI agents as deliberately as they onboard people, with job descriptions, training curricula, feedback loops, and performance reviews. This is a cross-functional effort that spans data science, security, compliance, design, human resources, and the end users who work with the system every day.

Role definition. Spell out scope, inputs/outputs, escalation paths, and acceptable failure modes. For example, a legal copilot can summarize contracts and surface risky clauses, but it should avoid final legal judgments and must escalate edge cases.

Contextual training. Fine-tuning has its place, but for many teams retrieval-augmented generation (RAG) and tool adapters are safer, cheaper, and more auditable. RAG keeps a model grounded in your latest vetted knowledge (documents, policies, knowledge bases), which reduces hallucinations and improves traceability. Emerging Model Context Protocol (MCP) integrations make it easier to connect copilots to enterprise systems in a controlled way, bridging models with tools and data while preserving separation of concerns. Salesforce’s Einstein Trust Layer shows how vendors are formalizing secure grounding, masking, and audit controls for enterprise AI.

Simulation before production. Don't let your AI's first “training” happen on real customers. Build a high-fidelity sandbox, stress-test tone, reasoning, and edge cases, and evaluate with human graders. Morgan Stanley built an evaluation regimen for its GPT-4 assistant, with advisors and prompt engineers grading responses and refining prompts before broad rollout. The result: once quality thresholds were met, adoption among advisor teams exceeded 98%. Vendors are also moving toward simulation, with Salesforce recently highlighting digital-twin testing to rehearse agents safely against realistic scenarios.

Cross-functional mentorship. Treat early usage as a two-way learning loop. Domain experts and front-line users give feedback on tone, accuracy, and usefulness. Security and compliance teams enforce boundaries and red lines. Designers shape frictionless UIs that encourage proper usage.
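The role definition and simulation steps above can be made concrete in a few lines. The following is a minimal sketch, not a reference implementation: `RoleSpec`, `route`, `passes_sandbox`, the task names, the contact address, and the 0.95 threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleSpec:
    """Hypothetical 'job description' for an AI agent."""
    name: str
    allowed_tasks: frozenset[str]
    escalate_tasks: frozenset[str]
    escalation_contact: str

    def route(self, task: str) -> str:
        """Return 'handle', 'escalate', or 'refuse' for a requested task."""
        if task in self.escalate_tasks:
            return "escalate"
        if task in self.allowed_tasks:
            return "handle"
        # Anything outside the written scope is refused by default.
        return "refuse"


def passes_sandbox(grades: list[bool], threshold: float = 0.95) -> bool:
    """Promotion gate: share of sandbox answers that human graders accepted."""
    if not grades:
        return False  # no evidence, no promotion
    return sum(grades) / len(grades) >= threshold


# Example role modeled on the legal copilot described above (values are assumptions).
legal_copilot = RoleSpec(
    name="legal-copilot",
    allowed_tasks=frozenset({"summarize_contract", "flag_risky_clause"}),
    escalate_tasks=frozenset({"final_legal_judgment"}),
    escalation_contact="legal-review@example.com",
)
```

Refusing by default keeps the written scope authoritative: a task the job description never mentions is treated as out of bounds rather than silently attempted.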

Feedback loops and performance reviews, forever

Onboarding doesn't end at go-live. The most meaningful learning begins after deployment.

Monitoring and observability. Log outputs, track KPIs (accuracy, satisfaction, escalation rate), and watch for degradation. Cloud providers now offer observability and evaluation tools that help teams detect drift and regressions in production, especially in RAG systems whose knowledge changes over time.

User feedback channels. Provide in-product flagging and structured review queues so that people can coach the model. Then close the loop by feeding those signals into prompts, RAG sources, or fine-tuning sets.

Regular audits. Schedule alignment checks, factual audits, and safety evaluations. For example, Microsoft’s Responsible AI playbook for enterprises emphasizes governance and staged rollouts with executive visibility and clear guardrails.

Model succession planning. As laws, products, and models evolve, plan for upgrades and retirements the same way you plan for people transitions. Run overlap tests and port your institutional knowledge (prompts, evaluation sets, retrieval sources).
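The monitoring step above boils down to comparing recent KPI values with a known-good baseline. Here is a minimal sketch of that idea using only the Python standard library; `KpiMonitor`, its window size, and its tolerance are hypothetical choices, and a production setup would more likely rely on the observability tooling mentioned above.

```python
from collections import deque
from statistics import mean


class KpiMonitor:
    """Rolling check of one KPI (for example, graded accuracy) against a baseline."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline
        self.window = window
        self.tolerance = tolerance
        # Only the most recent `window` scores are kept.
        self.scores: deque[float] = deque(maxlen=window)

    def record(self, score: float) -> None:
        """Store one new KPI observation."""
        self.scores.append(score)

    def degraded(self) -> bool:
        """True when the rolling mean falls below baseline minus tolerance."""
        if len(self.scores) < self.window:
            return False  # wait for a full window before raising an alert
        return mean(self.scores) < self.baseline - self.tolerance
```

A team might call `record` once per graded interaction and check `degraded` during weekly triage; waiting for a full window avoids alerts triggered by a handful of early samples.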

Why is this urgent now?

Gen AI is no longer an “innovation shelf” project. It is embedded in CRMs, support desks, analytics pipelines, and executive workflows. Banks like Morgan Stanley and Bank of America are focusing AI on internal copilot use cases to improve employee efficiency while limiting customer-facing risk, an approach that depends on structured onboarding and careful scoping. Meanwhile, security leaders say that although generative AI is everywhere, one-third of adopters do not have basic risk mitigations in place, a gap that invites shadow AI and data exposure.

AI-native employees expect more: transparency, traceability, and the ability to shape the tools they use. Organizations that deliver this through training, clear UX affordances, and responsive product teams see faster adoption and fewer workarounds. When users trust a copilot, they use it. When they don't, they bypass it.

As onboarding matures, expect to see more org charts with AI enablement managers and PromptOps specialists who curate prompts, manage retrieval sources, run evaluation suites, and coordinate cross-functional updates. Microsoft’s internal Copilot rollout demonstrates this operational discipline: centers of excellence, governance templates, and executive-ready deployment playbooks. These practitioners are the “teachers” who keep AI aligned with fast-changing business goals.

A practical onboarding checklist

If you're looking to deploy (or rescue) an enterprise copilot, start here.

Write the job description. Scope, inputs/outputs, tone, red lines, escalation rules.

Ground the model. Implement RAG (and/or MCP-style adapters) to connect to authoritative, access-controlled sources. Prefer dynamic grounding over extensive fine-tuning where possible.

Build the simulator. Create scripted and seeded scenarios. Measure accuracy, coverage, tone, and safety. Require human sign-off to graduate from each stage.

Ship with guardrails. DLP, data masking, content filters, and audit trails (see vendor trust layers and responsible AI standards).

Instrument feedback. In-product flagging, analytics, and dashboards. Schedule weekly triage.

Review and retrain. Monthly alignment checks, quarterly factual audits, and planned model upgrades, with side-by-side A/B comparisons to prevent regressions.
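The side-by-side comparison in the last checklist item can be sketched as a small regression check. This is an illustration under stated assumptions: `find_regressions` is a hypothetical helper, the two "models" are plain lookup tables standing in for real LLM calls, and exact string matching is a deliberate simplification of what human or automated graders would do.

```python
from typing import Callable

EvalCase = tuple[str, str]  # (prompt, expected answer)


def find_regressions(
    cases: list[EvalCase],
    current: Callable[[str], str],
    candidate: Callable[[str], str],
) -> list[str]:
    """Return prompts the current model answers correctly but the candidate does not."""
    regressions = []
    for prompt, expected in cases:
        if current(prompt) == expected and candidate(prompt) != expected:
            regressions.append(prompt)
    return regressions


# Stand-in "models": lookup tables instead of real LLM calls (illustrative data).
current_answers = {"refund window?": "30 days", "support hours?": "9-5"}
candidate_answers = {"refund window?": "30 days", "support hours?": "24/7"}

cases = [("refund window?", "30 days"), ("support hours?", "9-5")]
regressions = find_regressions(
    cases,
    lambda prompt: current_answers.get(prompt, ""),
    lambda prompt: candidate_answers.get(prompt, ""),
)
```

Here `regressions` contains only the support-hours prompt, the one case where the candidate loses an answer the current model gets right. A non-empty list is a reason to hold the upgrade until the eval set passes.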

In a future where every employee has an AI teammate, organizations that take onboarding seriously will move faster, more safely, and with greater purpose. Gen AI needs more than data and compute. It needs guidance, goals, and a growth plan. Treating AI systems as teachable, improvable, and accountable team members turns hype into habitual value.

Dhyey Mavani accelerates generative AI at LinkedIn.