Over the past few years, prompt engineering has become the secret handshake of the AI world. The right phrasing can make your model sound poetic, funny, and insightful; pick the wrong one and you end up with something flat and robotic. But a new Stanford-led paper argues that much of this “technique” compensates for something deeper: hidden biases in the way these systems were trained.

Their argument is simple. Models have never been boring. They've been trained to act that way.

And the proposed solution, called verbalized sampling, may do more than change how you prompt your model. It could reshape how we think about alignment and creativity in AI.

Central challenge: Alignment makes AI predictable

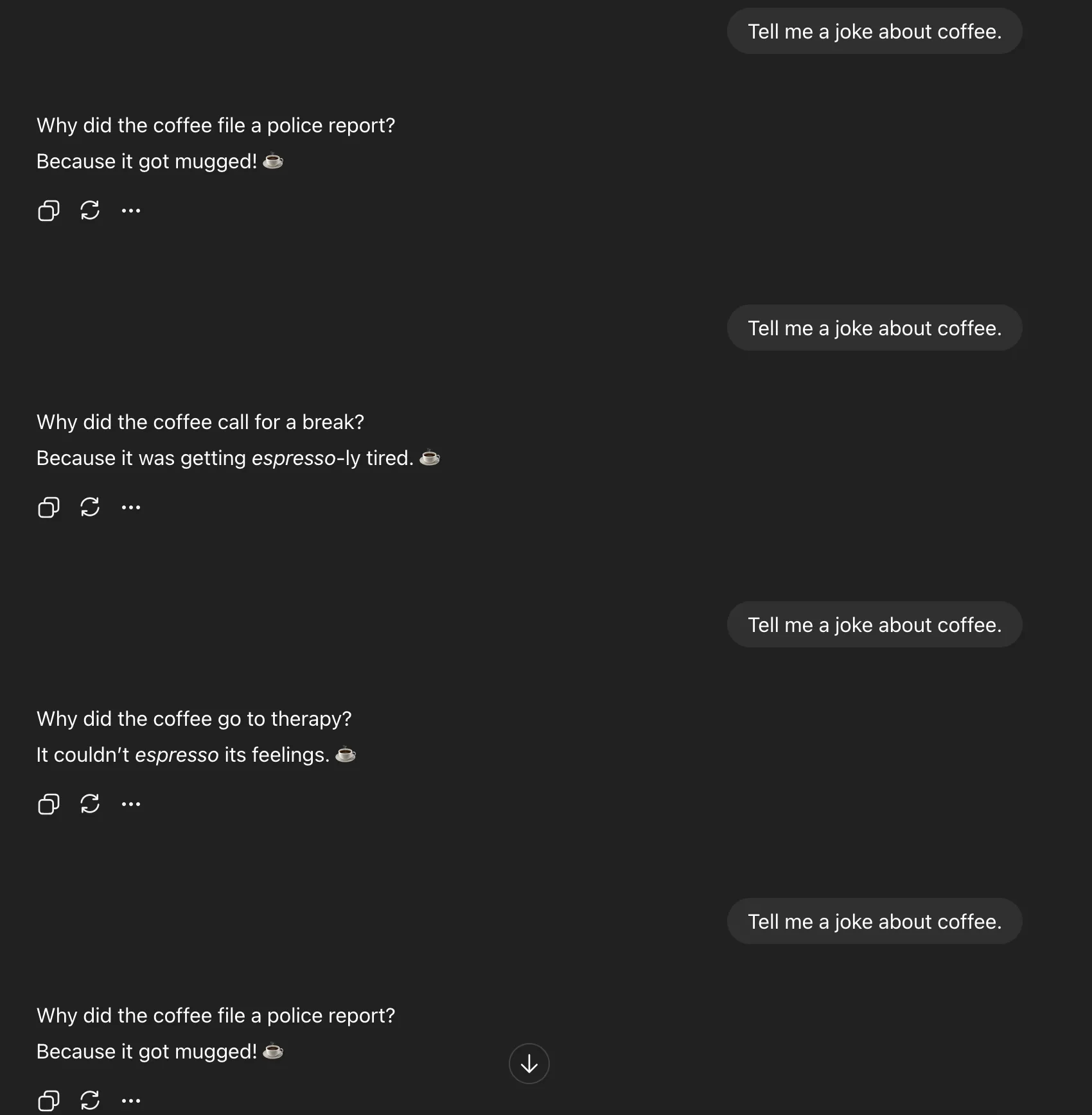

To understand this breakthrough, start with a simple experiment. Ask an AI model “Tell me a joke about coffee” and run it five times. You'll almost always get the same response.

This isn't laziness. It's mode collapse: alignment training narrows the model's output distribution. Instead of exploring the full range of valid responses it could generate, the model gravitates toward the safest, most typical one.

A team at Stanford University traced this to typicality bias in the human feedback data used during reinforcement learning. When judging a model's responses, annotators consistently prefer text that sounds familiar. Over time, a reward model trained on those preferences learns to reward the familiar over the novel.

Mathematically, this bias adds a “typicality weight” (α) to the reward function, amplifying whatever already looks statistically most typical. That is why most tuned models sound the same: creativity is slowly squeezed out.

Twist: Creativity was never lost

The key point is that diversity hasn't disappeared. It's buried.

Asking for a single response forces the model to pick the most likely completion. But when you ask it to verbalize several answers along with their probabilities, the internal distribution, the range of ideas it actually “knows,” suddenly opens up.

This is the idea behind verbalized sampling (VS).

Instead of:

Tell me a joke about coffee

You ask:

Generate 5 coffee jokes with their probabilities

This small change frees up the diversity that alignment training compressed. No retraining, no temperature changes, no hacking of sampling parameters. We're simply asking the model to show its uncertainty rather than hide it.
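To make the change concrete, here is a minimal Python sketch that wraps a direct request in a verbalized-sampling instruction. The function name `build_vs_prompt`, the exact wording, and the `<probability> | <response>` line format are hypothetical choices for illustration, not the template used in the paper.

```python
def build_vs_prompt(query: str, k: int = 5) -> str:
    """Wrap a direct request in a verbalized-sampling instruction.

    Illustrative wording only; this is not the paper's exact template.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    return (
        f"{query}\n\n"
        f"Generate {k} different responses to the request above. "
        "For each one, estimate the probability that you would give it, "
        "and write one candidate per line as: <probability> | <response>"
    )


# Direct prompt vs. its verbalized-sampling counterpart.
direct_prompt = "Tell me a joke about coffee"
vs_prompt = build_vs_prompt(direct_prompt, k=5)
print(vs_prompt)
```

The pipe-separated line format is an assumption chosen so the reply is easy to parse; any structured format (JSON, tagged fields) would work as long as the parser on the other side matches it.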

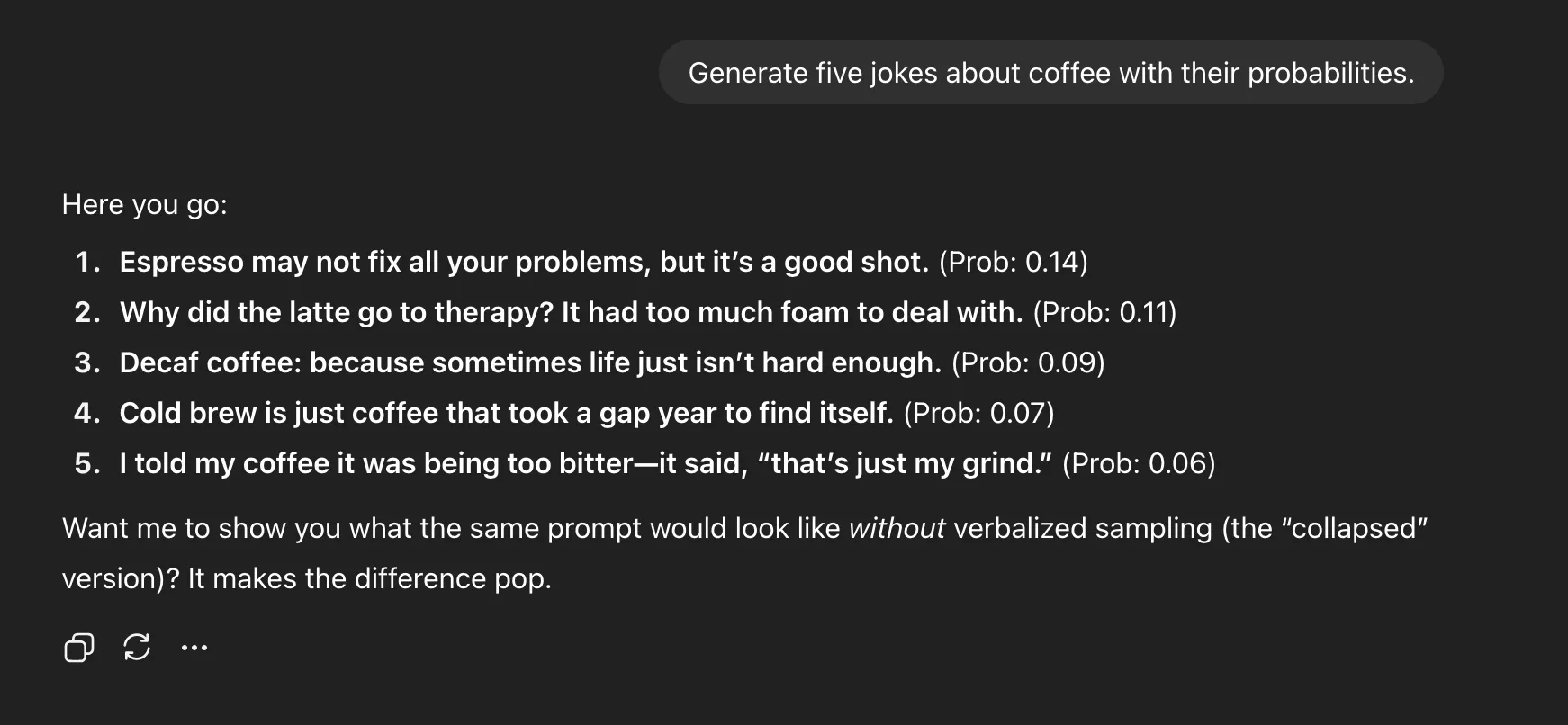

Coffee prompts: Practical proof

To demonstrate, the researchers ran the same coffee-joke request using both a direct prompt and verbalized sampling.

Direct prompt

Verbalized sampling

Why it works

During generation, a language model samples tokens from a probability distribution, but normally only its top choice is surfaced. When you ask it to output multiple candidates with probabilities, the model has to reason explicitly about its own uncertainty.

This “self-verbalization” exposes the model's underlying diversity. Rather than collapsing into a single high-probability mode, multiple plausible modes appear.
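On the receiving end, a client has to turn the verbalized list back into something it can use. The sketch below assumes the hypothetical `<probability> | <response>` line format, parses the candidates, renormalizes the stated probabilities (a model's numbers need not sum exactly to 1), and draws one response weighted by them. The helper names are illustrative, and the reply text is a placeholder, not real model output.

```python
import random
import re
from typing import List, Tuple

# Hypothetical line format "<probability> | <response>"; real model output
# may need a more forgiving parser (or a structured format such as JSON).
LINE = re.compile(r"^\s*([0-9]*\.?[0-9]+)\s*\|\s*(.+?)\s*$")


def parse_candidates(text: str) -> List[Tuple[str, float]]:
    """Extract (response, probability) pairs, skipping lines that don't match."""
    pairs = []
    for line in text.splitlines():
        m = LINE.match(line)
        if m:
            pairs.append((m.group(2), float(m.group(1))))
    return pairs


def normalize(pairs: List[Tuple[str, float]]) -> List[Tuple[str, float]]:
    """Rescale verbalized probabilities so they sum to 1."""
    total = sum(p for _, p in pairs)
    if total <= 0:
        raise ValueError("no positive probabilities to normalize")
    return [(r, p / total) for r, p in pairs]


def sample_one(pairs: List[Tuple[str, float]], rng: random.Random) -> str:
    """Draw one response, weighted by its renormalized verbalized probability."""
    normed = normalize(pairs)
    responses = [r for r, _ in normed]
    weights = [p for _, p in normed]
    return rng.choices(responses, weights=weights, k=1)[0]


# Placeholder text standing in for a model's verbalized-sampling reply.
reply = """0.40 | joke A
0.35 | joke B
0.25 | joke C
(a line the parser should ignore)"""

candidates = parse_candidates(reply)
print(sorted(candidates, key=lambda c: -c[1]))  # inspect what the model "thinks works"
print(sample_one(candidates, random.Random(0)))
```

Keeping the full ranked list, rather than only the sampled answer, is what gives the interpretability benefit: you can see every mode the model offered and how much weight it claimed for each.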

In practice, this means that “Tell me a joke” produces a single robbery pun, while “Generate 5 jokes with probabilities” produces coffee puns, therapy jokes, cold-brew one-liners, and more. It isn't just diversity, it's interpretability. You can see what the model thinks works.

Data and gains

The results were consistent across several benchmarks: creative writing, dialogue simulation, and open-ended QA.

- 1.6–2.1x increase in diversity on creative writing tasks
- 66.8% recovery of pre-alignment diversity
- No loss in factual accuracy or safety (>97% refusal rate)

Larger models benefit even more. GPT-4-class systems showed roughly twice the diversity gain of smaller models, suggesting that large models hold deep latent creativity waiting to be tapped.

The bias behind everything

To confirm that typicality bias really does cause mode collapse, the researchers analyzed nearly 7,000 response pairs from the HelpSteer dataset. Human annotators preferred the “typical” answer about 17–19% more often, even when both answers were equally correct.

They modeled this as follows.

r(x, y) = r_true(x, y) + α log π_ref(y | x)

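Here r_true is the reward for genuine quality, π_ref is the reference (pre-alignment) model, and α is the typicality bias weight. Because the log π_ref term rewards responses the base model already finds likely, a larger α sharpens the model's distribution and pushes it toward the center, making responses safe, predictable, and, over time, repetitive.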
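A short derivation shows why a larger α sharpens the distribution. Assuming the standard KL-regularized RLHF objective, which maximizes expected reward minus β·KL(π || π_ref), the optimal policy is π*(y | x) ∝ π_ref(y | x) · exp(r(x, y)/β). Substituting the biased reward gives π*(y | x) ∝ π_ref(y | x)^(1 + α/β) · exp(r_true(x, y)/β). When responses are equally correct, r_true is constant and cancels in the normalization, leaving π_ref raised to a power above 1, which concentrates mass on the most typical response. The toy sketch below checks this numerically; β, the four-response π_ref, and the helper names are assumptions for illustration, not values from the paper.

```python
import math
from typing import List, Optional


def sharpen(pi_ref: List[float], alpha: float, beta: float = 1.0,
            r_true: Optional[List[float]] = None) -> List[float]:
    """Optimal KL-regularized policy under the biased reward
    r = r_true + alpha * log(pi_ref).

    Closed form: pi* is proportional to pi_ref**(1 + alpha/beta) * exp(r_true/beta).
    """
    if r_true is None:
        r_true = [0.0] * len(pi_ref)  # equally correct responses: r_true cancels out
    gamma = 1.0 + alpha / beta       # gamma > 1 whenever alpha > 0
    unnorm = [p ** gamma * math.exp(r / beta) for p, r in zip(pi_ref, r_true)]
    z = sum(unnorm)
    return [u / z for u in unnorm]


def entropy(p: List[float]) -> float:
    """Shannon entropy in nats; lower means a more peaked, less diverse policy."""
    return -sum(q * math.log(q) for q in p if q > 0)


pi_ref = [0.4, 0.3, 0.2, 0.1]  # toy reference distribution over four responses
for alpha in (0.0, 0.5, 1.0, 2.0):
    pi = sharpen(pi_ref, alpha)
    print(f"alpha={alpha}: P(most typical)={pi[0]:.3f}, entropy={entropy(pi):.3f}")
```

With alpha=0 the policy equals pi_ref; as alpha grows, the probability of the most typical response rises and entropy falls, which is the mode collapse the formula predicts.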

What does that mean for prompt engineering?

So, is prompt engineering over? Not quite. But it's evolving.

Verbalized sampling doesn't remove the need for thoughtful prompts, but it changes what a clever prompt looks like. The new game isn't about tricking models into being creative. It's about designing meta-prompts that expose the full probability space.

VS can also act as a “creativity dial”: set a probability threshold to control how wild or safe the responses are. Lower it for more surprise; raise it for more stability.
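One way to read the “creativity dial” is as a cap on the probability of each candidate. The minimal sketch below shows two hypothetical versions: requesting the cap in the prompt, and filtering parsed candidates on the client side. The `max_prob` parameter, the function names, and the prompt wording are assumptions, and because verbalized probabilities are the model's own self-reports, the cap is only as meaningful as those estimates.

```python
from typing import List, Tuple


def build_dial_prompt(query: str, k: int = 5, max_prob: float = 1.0) -> str:
    """Verbalized-sampling prompt with a hypothetical 'creativity dial'.

    A lower max_prob asks for less typical, more surprising candidates;
    max_prob=1.0 leaves the verbalized distribution unrestricted.
    """
    if not 0.0 < max_prob <= 1.0:
        raise ValueError("max_prob must be in (0, 1]")
    prompt = (
        f"{query}\n\n"
        f"Generate {k} different responses, each with your estimated probability."
    )
    if max_prob < 1.0:
        prompt += f" Only include responses whose probability is below {max_prob}."
    return prompt


def apply_dial(pairs: List[Tuple[str, float]], max_prob: float) -> List[Tuple[str, float]]:
    """Client-side version of the same dial: keep candidates below max_prob."""
    return [(r, p) for r, p in pairs if p < max_prob]


print(build_dial_prompt("Tell me a joke about coffee", max_prob=0.1))
```

Setting max_prob=0.1 asks only for long-tail candidates; leaving it at 1.0 returns the unrestricted verbalized list.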

The real meaning

The biggest change here isn't about jokes or stories. It's about rethinking alignment itself.

For years, we've accepted that alignment makes models safer but blander. This study suggests otherwise: alignment made them overly polite and rigid, and a different prompt can restore their creativity without compromising the model.

The implications reach far beyond creative writing, from more realistic social simulations to richer synthetic data for model training. It points toward a new kind of AI system: one that can reflect on its own uncertainty and offer several plausible answers instead of pretending there is only one.

Caveats

Not everyone buys the hype. Critics point out that the probabilities some models report can be an illusion rather than a reflection of true likelihood. Others argue that VS doesn't correct the underlying human bias; it only works around it.

And while results look good in controlled testing, real-world deployment involves trade-offs in cost, latency, and interpretability. “If it worked perfectly, OpenAI would already be doing it,” one researcher remarked dryly on X.

Still, I can't help but admire its elegance. No retraining, no new data, just one revised instruction:

Generate 5 responses with their probabilities.

Conclusion

The lesson of Stanford's research matters more than any single technique. The models we built were never short on imagination. They were over-aligned, trained to suppress the very diversity that made them powerful.

Verbalized sampling doesn't rewrite them. It simply hands back the key.

If pre-training built a vast internal library, alignment locked most of its doors. VS is a way of asking the model to show you all five versions of the truth.

Prompt engineering isn't over. It's finally becoming a science.

FAQ

A. Verbalized sampling is a prompting technique that asks an AI model to generate multiple responses along with their probabilities, revealing its internal diversity without retraining or tweaking sampling parameters.

A. Human feedback data carries a typicality bias, so the model learns to prefer safe, familiar responses, which leads to mode collapse and a loss of creative diversity.

A. No, it redefines it. The new skill lies in writing meta-prompts that expose the distribution and control creativity, rather than tweaking one-shot phrasing.
