Introduction

Tencent’s Hunyuan team has released Hunyuan-MT-7B (a translation model) and Hunyuan-MT-Chimera-7B (an ensemble model). Both models are designed specifically for multilingual machine translation and were released in conjunction with Tencent’s participation in the WMT2025 General Machine Translation shared task.

Model overview

Hunyuan-MT-7B

A 7B-parameter translation model. It supports mutual translation across 33 languages, including Chinese ethnic minority languages such as Tibetan, Mongolian, Uyghur, and Kazakh. It is optimized for both high-resource and low-resource translation tasks and achieves state-of-the-art results among models of comparable size.

Hunyuan-MT-Chimera-7B

An integrated weak-to-strong fusion model. It combines multiple translation outputs at inference time and generates a refined translation using reinforcement learning and aggregation techniques. It is the first open-source translation model of this kind, and it improves translation quality beyond the output of a single system.
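To make the fusion idea concrete, here is a minimal sketch of how several candidate translations might be packed into one request for a fusion model. The function name `build_fusion_prompt` and the prompt wording are hypothetical and are not taken from the released model; the French candidates are illustrative data only.

```python
from typing import List


def build_fusion_prompt(source: str, candidates: List[str], target_lang: str) -> str:
    """Assemble one prompt asking a fusion model to merge candidate translations.

    The template wording is a hypothetical illustration, not the released prompt.
    """
    if not candidates:
        raise ValueError("need at least one candidate translation")
    # Number the candidates so the fusion model can refer to each one.
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(candidates, start=1))
    return (
        f"Source text:\n{source}\n\n"
        f"Candidate translations into {target_lang}:\n{numbered}\n\n"
        "Produce a single refined translation that keeps the strengths of the "
        "candidates and fixes their errors. Output only the translation."
    )


prompt = build_fusion_prompt(
    "You are killing me.",
    ["Tu me tues.", "Tu me fais mourir de rire."],  # illustrative candidates
    "French",
)
print(prompt)
```

In a real deployment the candidates would come from one or more translation systems and the prompt would be sent to the fusion model; both steps are omitted here.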

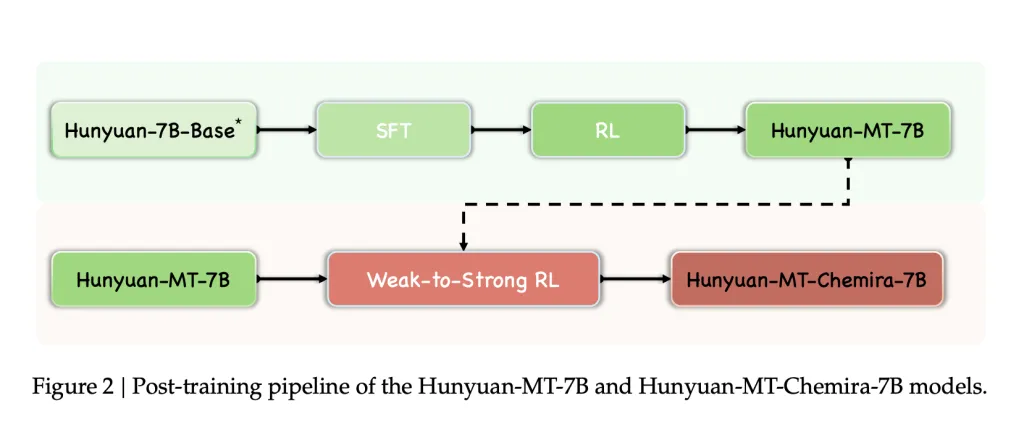

Training Framework

The models were trained using a five-stage framework designed for translation tasks.

1. General pre-training: 1.3 trillion tokens covering 112 languages and dialects. The multilingual corpora were assessed for knowledge value, authenticity, and writing style. Diversity was maintained through discipline, industry, and theme tagging systems.

2. MT-oriented pre-training: Monolingual corpora from mC4 and OSCAR, filtered using fastText (language ID), minLSH (deduplication), and KenLM (perplexity filtering). Parallel corpora from OPUS and ParaCrawl, filtered with CometKiwi. General pre-training data was replayed (20%) to avoid catastrophic forgetting.

3. Supervised fine-tuning (SFT): Stage I used ~3M parallel pairs (Flores-200, WMT test sets, curated Mandarin⇔minority-language data, synthetic pairs, and instruction-tuning data). Stage II used ~268k high-quality pairs selected through automatic scoring (CometKiwi, GEMBA) and manual verification.

4. Reinforcement learning (RL): The algorithm is GRPO. Reward functions include quality scoring with XCOMET-XXL and DeepSeek-V3-0324, terminology-aware rewards (TAT-R1), and repetition penalties to avoid degenerate outputs.

5. Weak-to-strong RL: Multiple candidate outputs are generated and aggregated through reward-based selection. This stage is applied in Hunyuan-MT-Chimera-7B, improving translation robustness and reducing repetition errors.
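The corpus cleaning described for the MT-oriented pre-training stage (language identification, deduplication, perplexity filtering) can be sketched as three passes over the text. This is an illustration under stated assumptions, not the team's pipeline: the language identifier and perplexity scorer are passed in as plain callables standing in for fastText and KenLM, deduplication uses exact hashing of normalized text (a simpler stand-in for MinHash-style near-duplicate detection), and the thresholds and toy scorers are invented for the example.

```python
import hashlib
from typing import Callable, Iterable, Iterator, Tuple


def filter_corpus(
    lines: Iterable[str],
    identify_language: Callable[[str], Tuple[str, float]],  # stand-in for fastText
    perplexity: Callable[[str], float],                      # stand-in for KenLM
    target_lang: str,
    min_lang_conf: float = 0.8,      # illustrative threshold
    max_perplexity: float = 1000.0,  # illustrative threshold
) -> Iterator[str]:
    """Yield lines that pass language-ID, exact-dedup, and perplexity checks."""
    seen = set()
    for line in lines:
        text = " ".join(line.split())  # collapse whitespace
        if not text:
            continue
        lang, conf = identify_language(text)
        if lang != target_lang or conf < min_lang_conf:
            continue
        # Exact deduplication on lowercased text (simplification of MinHash LSH).
        digest = hashlib.sha1(text.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        if perplexity(text) > max_perplexity:
            continue
        yield text


# Toy scorers so the sketch runs without external models.
def toy_lang_id(text: str) -> Tuple[str, float]:
    return ("en", 0.99) if text.isascii() else ("other", 0.99)


def toy_perplexity(text: str) -> float:
    # Pretend that a line made of one repeated word is high-perplexity noise.
    return 5000.0 if len(set(text.split())) == 1 else 100.0


raw = ["Hello world", "hello   world", "你好", "spam spam spam spam", ""]
kept = list(filter_corpus(raw, toy_lang_id, toy_perplexity, target_lang="en"))
print(kept)
```

Passing the scorers in as callables keeps the filtering logic independent of any particular model, so real language-ID and language-model scorers could be swapped in without changing the loop.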

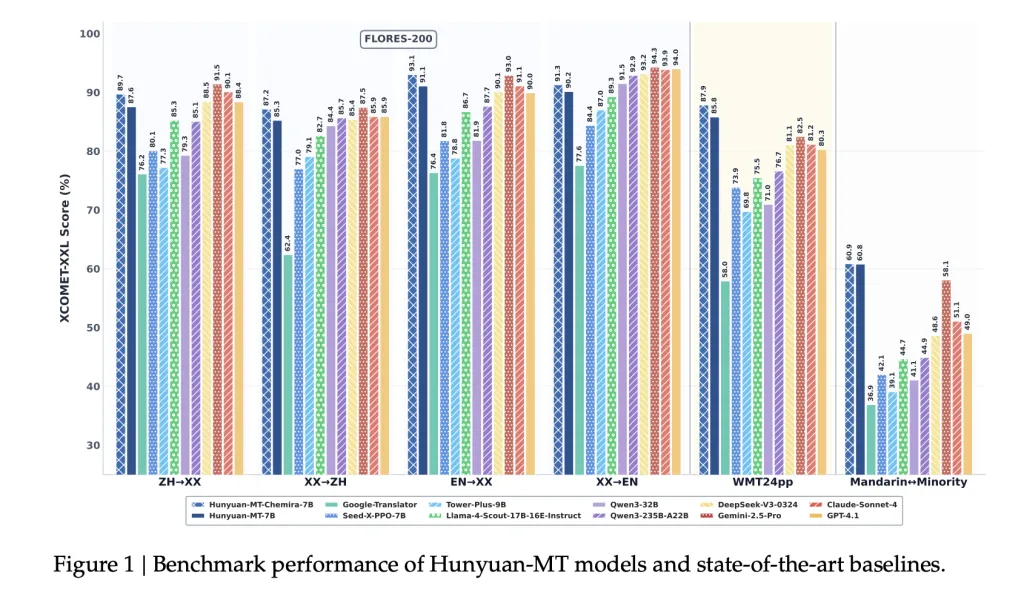
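GRPO, the algorithm named for the reinforcement learning stage, scores each sampled translation relative to the other samples drawn for the same source sentence. The sketch below assumes one scalar quality score per candidate (standing in for a metric such as XCOMET-XXL or an LLM judge) and a simple n-gram repetition penalty. The function names, the penalty definition, and the weighting are illustrative assumptions, not the reward used in the report.

```python
from collections import Counter
from statistics import mean, pstdev
from typing import List


def repetition_penalty(text: str, n: int = 3) -> float:
    """Fraction of word n-grams that are repeats (0.0 means no repetition)."""
    words = text.split()
    ngrams = [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]
    if not ngrams:
        return 0.0
    counts = Counter(ngrams)
    repeated = sum(c - 1 for c in counts.values())
    return repeated / len(ngrams)


def combined_reward(quality: float, text: str, weight: float = 1.0) -> float:
    """Quality score minus a weighted repetition penalty (hypothetical mix)."""
    return quality - weight * repetition_penalty(text)


def group_relative_advantages(rewards: List[float], eps: float = 1e-8) -> List[float]:
    """Normalize rewards within one group: (r - group mean) / group std."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


# Three sampled translations of one source sentence, with made-up quality scores.
group = [
    ("the cat sat on the mat", 0.90),
    ("the cat the cat the cat the cat", 0.70),  # degenerate, repetitive output
    ("a cat sat on a mat", 0.80),
]
rewards = [combined_reward(q, text) for text, q in group]
advantages = group_relative_advantages(rewards)
for (text, _), adv in zip(group, advantages):
    print(f"{adv:+.2f}  {text}")
```

Because advantages are centered within the group, the repetitive candidate receives a negative advantage and the policy update pushes probability mass away from it.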

Benchmark results

Automatic evaluation

- WMT24pp (English⇔XX): Hunyuan-MT-7B achieved 0.8585 (XCOMET-XXL), surpassing larger models such as Gemini-2.5-Pro (0.8250) and Claude-Sonnet-4 (0.8120).
- Flores-200 (33 languages, 1056 pairs): Hunyuan-MT-7B scored 0.8758 (XCOMET-XXL), surpassing open-source baselines including Qwen3-32B (0.7933).
- Mandarin⇔minority languages: Hunyuan-MT-7B scored 0.6082 (XCOMET-XXL), higher than Gemini-2.5-Pro (0.5811), showing a significant improvement in low-resource settings.
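The figure of 1056 pairs for 33 languages is consistent with counting every ordered (source, target) direction between distinct languages, that is 33 × 32. A quick check, assuming that interpretation of "pairs":

```python
from itertools import permutations

num_languages = 33
# Every ordered (source, target) combination of two distinct languages.
directed_pairs = list(permutations(range(num_languages), 2))
print(len(directed_pairs))  # 1056
```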

Comparative results

Hunyuan-MT-7B outperforms Google Translate by 15-65% across evaluation categories. Despite having fewer parameters, it outperforms specialized translation models such as Tower-Plus-9B and Seed-X-PPO-7B. Chimera-7B adds an improvement of about 2.3% on Flores-200, especially for translation directions involving Chinese and for non-English directions.

Human evaluation

A custom evaluation set (covering social, medical, legal, and internet domains) was used to compare Hunyuan-MT-7B with state-of-the-art models.

- Hunyuan-MT-7B: average 3.189
- Gemini-2.5-Pro: average 3.223
- DeepSeek-V3: average 3.219
- Google Translate: average 2.344
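To put these averages in perspective, the short calculation below expresses each gap relative to Hunyuan-MT-7B, using only the four scores reported above. It shows Hunyuan-MT-7B about 1% below the two large models and roughly 36% above Google Translate.

```python
scores = {
    "Hunyuan-MT-7B": 3.189,
    "Gemini-2.5-Pro": 3.223,
    "DeepSeek-V3": 3.219,
    "Google Translate": 2.344,
}

reference = scores["Hunyuan-MT-7B"]
relative_gap = {}
for name, score in scores.items():
    # Positive means Hunyuan-MT-7B scored higher than this system.
    gap = reference - score
    relative_gap[name] = 100.0 * gap / score
    print(f"{name:16s} {score:.3f}  gap {gap:+.3f}  ({relative_gap[name]:+.1f}%)")
```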

This shows that Hunyuan-MT-7B, although small at 7B parameters, approaches the quality of much larger proprietary models.

Case studies

The report highlights several real-world cases.

- Cultural references: Correctly renders as the platform name "REDnote" a term that Google Translate translates literally as "sweet potato".
- Idioms: Interprets "You are killing me" idiomatically, as an expression of amusement, avoiding a literal misreading.
- Medical terms: Translates "uric acid kidney stones" precisely, while baselines produce malformed output.
- Minority languages: For Kazakh and Tibetan, Hunyuan-MT-7B generates coherent translations where baselines fail or output meaningless text.
- Chimera enhancements: Adds improvements in gaming jargon, intensifiers, and sports terminology.

Conclusion

Tencent’s release of Hunyuan-MT-7B and Hunyuan-MT-Chimera-7B sets a new standard for open-source translation. By combining a carefully designed training framework with a specialized focus on low-resource and minority-language translation, the models achieve quality comparable to or exceeding that of larger closed-source systems. The release of these two models gives the AI research community accessible, high-performance tools for multilingual translation research and deployment.

Asif Razzaq is the CEO of Marktechpost Media Inc. As an entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform that stands out for in-depth coverage of machine learning and deep learning news that is both technically sound and easy for a wide audience to understand. The platform has over 2 million monthly views, reflecting its popularity among readers.