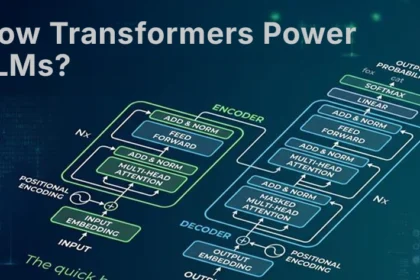

How Transformers Power LLMs: An Intuitive Step-by-Step Guide

Transformers power modern NLP models, replacing earlier RNN and LSTM approaches. Their…

LeCun’s world models vs LLM’s empire

In a bold challenge to the dominant trajectory of artificial intelligence, Yann…

Testing LLMs on superconductivity research questions

Conclusion: Several broader conclusions can be drawn from this take…

Teaching LLMs to reason like Bayesians

Evaluating the Bayesian performance of LLMs. Just like people, for LLM users…

Why observable AI is the missing SRE layer enterprises need for reliable LLMs

Once AI systems are deployed into production, trust and governance…

AI Interview Series #1: Explain Some LLM Text Generation Strategies Used in LLMs

Every time you prompt an LLM, it builds the response one word (or…

Simplifying Data Integration for Long-Context LLMs

Large Language Models (LLMs) like Anthropic’s Claude have unlocked massive context windows…

New AI architecture delivers 100x faster reasoning than LLMs with just 1,000 training examples

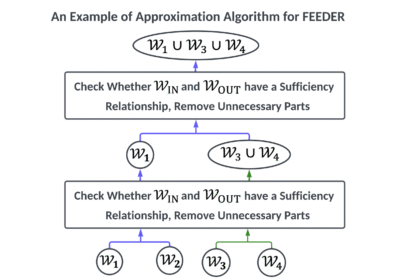

FEEDER: A Pre-Selection Framework for Efficient Demonstration Selection in LLMs

LLMs demonstrate exceptional performance across multiple tasks by using a…