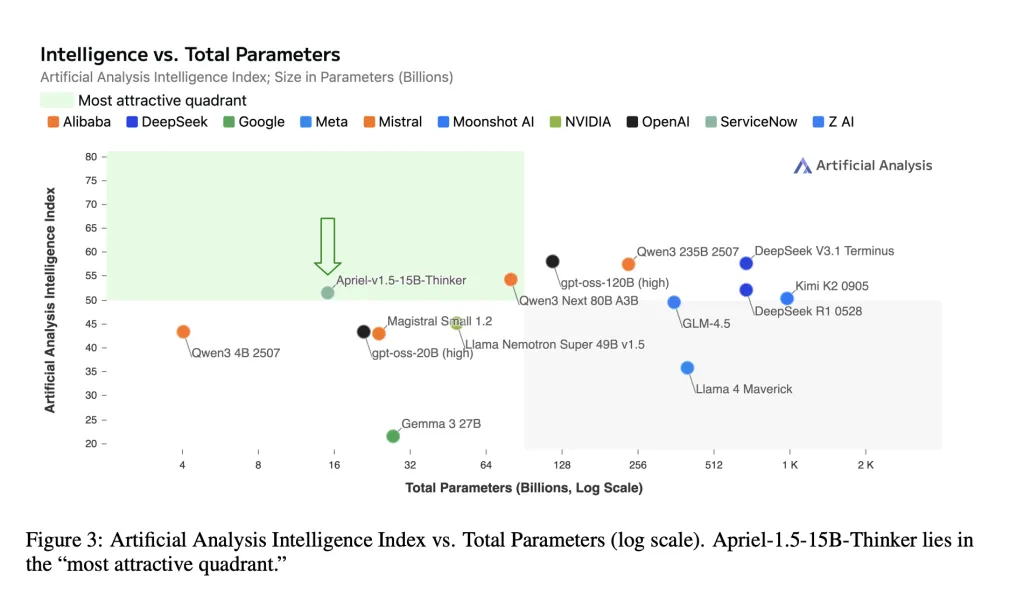

ServiceNow AI Research Lab has released Apriel-1.5-15B-Thinker, a 15-billion-parameter open-weights multimodal reasoning model trained with a data-centric mid-training recipe. The model reaches an Artificial Analysis Intelligence Index score of 52, at an eight-fold cost saving compared with SOTA. The checkpoint ships under an MIT license on Hugging Face.

So, what’s new in it for me?

Frontier-level composite score at small scale. The model reports an Artificial Analysis Intelligence Index (AAI) of 52, on par with DeepSeek-R1-0528 on that combined metric while being dramatically smaller. AAI aggregates 10 third-party evaluations (MMLU-Pro, GPQA Diamond, Humanity’s Last Exam, LiveCodeBench, SciCode, AIME 2025, IFBench, AA-LCR, Terminal-Bench Hard, and τ²-Bench Telecom).

Single-GPU deployability. The model card states that the 15B checkpoint “fits on a single GPU,” targeting on-premises and air-gapped deployments with fixed memory and latency budgets.

Open weights and reproducible pipeline. The weights, training recipe, and evaluation protocol are public for independent verification.
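
To make the single-GPU claim concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone would need at different storage precisions. The bytes-per-parameter values are assumptions for illustration, not figures from the model card, and the estimate ignores the KV cache, activations, and the vision encoder's runtime overhead.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


# 15 billion parameters, the rounded figure quoted in the article.
NUM_PARAMS = 15e9

# Assumed storage formats (hypothetical choices, not taken from the model card).
PRECISIONS = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}

estimates = {
    name: weight_memory_gib(NUM_PARAMS, nbytes)
    for name, nbytes in PRECISIONS.items()
}

for name, gib in estimates.items():
    print(f"{name}: {gib:.1f} GiB for weights only")
```

Under these assumptions, 16-bit weights come to roughly 28 GiB, which is why a model of this size can plausibly sit on one large-memory accelerator while leaving headroom for inference state.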

OK, got it! But what is its training mechanism?

Base and upscaling. Apriel-1.5-15B-Thinker starts from Mistral’s Pixtral-12B-Base-2409 multimodal decoder-vision stack. The researchers apply depth upscaling (increasing the decoder from 40 to 48 layers), then realign the projection network so that the vision encoder is aligned with the enlarged decoder. This avoids pretraining from scratch while preserving single-GPU deployability.
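
As an illustration of the general idea behind depth upscaling, the sketch below grows a 40-layer stack to 48 layers by duplicating a contiguous block of existing layers. The article does not say which layers are duplicated or where the copies are inserted, so the middle-block choice here is an assumption, and `upscale_depth` is a hypothetical helper rather than anything from the released recipe.

```python
import copy


def upscale_depth(layers, target_depth, start=None):
    """Return a deeper stack by duplicating a contiguous block of layers.

    ASSUMPTION: copies are placed directly after the block they duplicate,
    and the block defaults to the middle of the stack. The actual recipe
    may select and place layers differently.
    """
    extra = target_depth - len(layers)
    if extra < 0:
        raise ValueError("target_depth must be >= current depth")
    if extra > len(layers):
        raise ValueError("cannot add more layers than the stack contains")
    if start is None:
        start = (len(layers) - extra) // 2  # centre the duplicated block
    if start < 0 or start + extra > len(layers):
        raise ValueError("duplicated block falls outside the stack")

    # Deep-copy so the new layers can be trained independently of the originals.
    block = [copy.deepcopy(layer) for layer in layers[start:start + extra]]
    return layers[:start + extra] + block + layers[start + extra:]


# Stand-in "layers": plain dicts instead of real transformer blocks.
base = [{"id": i} for i in range(40)]
deeper = upscale_depth(base, target_depth=48)
print(len(deeper))
```

The new layers start from trained weights rather than random initialization, which is the property that lets this kind of approach skip pretraining from scratch.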

CPT (Continual Pretraining). Two stages: (1) a mixed text and image data stage to build foundational reasoning and document/diagram understanding; (2) targeted synthetic visual tasks (reconstruction, matching, detection, counting) to sharpen spatial and compositional reasoning. Sequence lengths extend to 32K and 16K tokens, respectively, with the loss placed selectively on response tokens for instruction-formatted samples.
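
Selective loss placement can be sketched as a masked negative log-likelihood: prompt tokens contribute nothing, and only response tokens are averaged. This is a minimal pure-Python illustration under that assumption; `response_mask` and `masked_nll` are hypothetical names, and the per-token log-probabilities are made-up inputs rather than model outputs.

```python
import math


def response_mask(num_prompt_tokens, num_response_tokens):
    """1 for response tokens (counted in the loss), 0 for prompt tokens."""
    return [0] * num_prompt_tokens + [1] * num_response_tokens


def masked_nll(token_logprobs, loss_mask):
    """Mean negative log-likelihood over the masked-in tokens only."""
    if len(token_logprobs) != len(loss_mask):
        raise ValueError("logprobs and mask must have the same length")
    selected = [lp for lp, keep in zip(token_logprobs, loss_mask) if keep]
    if not selected:
        raise ValueError("mask selects no tokens")
    return -sum(selected) / len(selected)


# Illustrative values: 3 prompt tokens predicted poorly (p = 0.1),
# 2 response tokens predicted with p = 0.5.
logprobs = [math.log(0.1)] * 3 + [math.log(0.5)] * 2
mask = response_mask(num_prompt_tokens=3, num_response_tokens=2)

loss = masked_nll(logprobs, mask)
print(f"loss over response tokens: {loss:.4f}")
```

Because the poorly predicted prompt tokens are masked out, they do not affect the result; the loss reflects only how well the response is modeled.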

SFT (Supervised Fine-Tuning). High-quality instruction data with reasoning traces for math, coding, science, tool use, and instruction following. Two additional SFT runs (a stratified subset and a longer-context run) are weight-merged to form the final checkpoint. There is no RL (reinforcement learning) or RLAIF (reinforcement learning from AI feedback).
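
The article does not say how the SFT runs are merged. One common approach is to average parameters across checkpoints, and the sketch below assumes that. `merge_checkpoints` is a hypothetical helper, the uniform coefficients are an assumption, and plain lists of floats stand in for real tensors.

```python
def merge_checkpoints(checkpoints, coeffs=None):
    """Merge checkpoints by a weighted average of each parameter.

    checkpoints: list of dicts mapping parameter name -> list of floats.
    coeffs: one coefficient per checkpoint; defaults to a uniform average
            (ASSUMPTION: the real recipe may weight runs differently).
    """
    if not checkpoints:
        raise ValueError("need at least one checkpoint")
    if coeffs is None:
        coeffs = [1.0 / len(checkpoints)] * len(checkpoints)
    if len(coeffs) != len(checkpoints):
        raise ValueError("one coefficient per checkpoint is required")

    names = checkpoints[0].keys()
    for ckpt in checkpoints:
        if ckpt.keys() != names:
            raise ValueError("checkpoints must share the same parameter names")

    merged = {}
    for name in names:
        tensors = [ckpt[name] for ckpt in checkpoints]
        if len({len(t) for t in tensors}) != 1:
            raise ValueError(f"shape mismatch for parameter {name!r}")
        merged[name] = [
            sum(c * t[i] for c, t in zip(coeffs, tensors))
            for i in range(len(tensors[0]))
        ]
    return merged


# Toy checkpoints standing in for two SFT runs.
run_a = {"w": [1.0, 2.0], "b": [0.0]}
run_b = {"w": [3.0, 4.0], "b": [1.0]}

final = merge_checkpoints([run_a, run_b])
print(final)
```

Averaging only makes sense when the runs share an architecture and a common starting point, which holds when they are fine-tuned from the same base checkpoint.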

Data note. Roughly 25% of the depth-upscaling text mix comes from NVIDIA’s Nemotron collection.

Oh wow! Can you tell us about the results?

Key text benchmarks (pass@1 / accuracy).

- AIME 2025 (American Invitational Mathematics Examination 2025): 87.5–88%
- GPQA Diamond (Graduate-Level Google-Proof Question Answering, Diamond split): ≈71%
- IFBench (Instruction-Following Benchmark): ~62
- τ²-Bench (Tau-squared Bench) Telecom: ~68
- LiveCodeBench (code generation): also evaluated

Using VLMEvalKit for reproducibility, Apriel scores competitively on MMMU / MMMU-Pro (Massive Multi-discipline Multimodal Understanding), LogicVista, MathVision, MathVista, MathVerse, MMStar, CharXiv, AI2D, and BLINK, with stronger results on documents, diagrams, and text-dominant math imagery.

Let’s sum it all up

Apriel-1.5-15B-Thinker shows that careful mid-training (continual pretraining plus supervised fine-tuning, with no reinforcement learning) can deliver a 52 on the Artificial Analysis Intelligence Index (AAI) while remaining deployable on a single graphics processing unit. The reported task-level scores (for example, AIME 2025 ≈88, GPQA Diamond ≈71, IFBench ≈62, τ²-Bench Telecom ≈68) match the model card and place the 15-billion-parameter checkpoint in the most cost-efficient band of current open-weights reasoning models. For enterprises, that combination of open weights, a reproducible recipe, and single-GPU latency makes Apriel a practical baseline to evaluate before considering larger closed systems.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform that stands out for in-depth coverage of machine learning and deep learning news that is both technically sound and easily understandable by a wide audience. The platform has over 2 million monthly views, illustrating its popularity among audiences.
