The Qwen team has released Qwen3-Coder-Next, an open-weight language model designed for coding agents and local development. It is built on top of the Qwen3-Next-80B-A3B backbone and uses a sparse Mixture-of-Experts (MoE) architecture with hybrid attention. The model has 80B total parameters, but only 3B are active per token. The goal is to match the performance of models with far more active parameters while keeping inference costs low for long coding sessions and agent workflows.

The model is positioned for agentic coding, browser-based tools, and IDE copilots rather than simple code completion. Qwen3-Coder-Next is trained on a large corpus of executable tasks with reinforcement learning, so it can plan over long horizons, call tools, execute code, and recover from runtime failures.

Architecture: Hybrid Attention Plus Sparse MoE

The research team describes it as a hybrid architecture that combines Gated DeltaNet, Gated Attention, and MoE.

The main configuration points are:

- Type: causal language model, with pretraining and post-training.
- Parameters: 80B total, 79B non-embedding.
- Active parameters: 3B per token.
- Number of layers: 48.
- Hidden dimension: 2048.
- Layout: 12 × (3 × (Gated DeltaNet → MoE) followed by 1 × (Gated Attention → MoE)).
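The layout line can be unrolled into an explicit layer list to check that it adds up to 48 layers. This is a minimal sketch; the block labels are plain strings chosen for illustration, not identifiers from the Qwen codebase.

```python
# Unroll the "12 x (3 x DeltaNet block, then 1 x attention block)" layout.
# The string labels are illustrative only.
def build_layout(groups: int = 12, deltanet_per_group: int = 3) -> list[str]:
    layout = []
    for _ in range(groups):
        layout.extend(["gated_deltanet+moe"] * deltanet_per_group)
        layout.append("gated_attention+moe")
    return layout

layout = build_layout()
print(len(layout))                          # 48
print(layout.count("gated_attention+moe"))  # 12
print(layout[:4])
```

Only every fourth layer uses full attention in this layout, which is where the long-context savings come from.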

The Gated Attention block uses 16 query heads with head dimension 256 and 2 key-value heads, with rotary position embeddings of dimension 64. The Gated DeltaNet block uses 32 linear attention heads for values and 16 linear attention heads for queries and keys, with head dimension 128.
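A rough back-of-the-envelope estimate shows what these head counts imply for the key-value cache. The sketch below assumes that only the 12 Gated Attention layers keep a per-token KV cache, that values are stored in 16-bit precision (2 bytes), and it ignores the Gated DeltaNet recurrent state and any runtime overhead. These are assumptions for illustration, not figures from the Qwen team.

```python
# Estimate KV-cache size for the full-attention layers only.
# Assumptions: 12 attention layers, 2 bytes per value, no runtime overhead.
def kv_cache_bytes(tokens: int, attn_layers: int = 12, kv_heads: int = 2,
                   head_dim: int = 256, bytes_per_value: int = 2) -> int:
    # The factor 2 accounts for one key and one value vector per KV head.
    return tokens * attn_layers * kv_heads * head_dim * 2 * bytes_per_value

queries_per_kv_head = 16 // 2
print(queries_per_kv_head)               # 8
print(kv_cache_bytes(262_144) / 2**30)   # 6.0 (GiB at the full 256K context)
```

Under these assumptions, a full 256K-token context costs about 6 GiB of KV cache, because just 2 KV heads in 12 layers need to be cached.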

The MoE layer has 512 experts, with 10 routed experts and 1 shared expert activated for each token. Each expert uses an intermediate dimension of 512. This design provides substantial capacity for specialization while keeping active compute close to the footprint of a 3B dense model.
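The routing step can be illustrated with a small top-k selection over 512 router scores. This is a generic sketch of top-k MoE routing. Taking a softmax over just the selected experts is an assumption here, and the actual Qwen router may normalize differently. The shared expert is not routed, since it runs for every token.

```python
import math
import random

NUM_EXPERTS = 512
TOP_K = 10

def route(logits: list[float], top_k: int = TOP_K) -> list[tuple[int, float]]:
    """Return (expert_index, weight) pairs for the top_k routed experts."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]
    peak = max(logits[i] for i in top)
    # Softmax over the selected experts only (an assumption, see lead-in).
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

rng = random.Random(0)
router_logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in scores
chosen = route(router_logits)

print(len(chosen))                       # 10
print(f"{TOP_K / NUM_EXPERTS:.1%}")      # 2.0% of routed experts run per token
```

Each token touches about 2% of the routed experts, which is how total capacity can grow without a matching growth in per-token compute.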

Agentic Training: Executable Tasks and RL

The Qwen team describes Qwen3-Coder-Next as “agentically trained at scale” on top of Qwen3-Next-80B-A3B-Base. The training pipeline uses large-scale synthesis of executable tasks, environment interaction, and reinforcement learning.

The team highlights roughly 800K verifiable tasks with executable environments used during training. These tasks provide concrete signals for long-horizon reasoning, tool sequencing, test execution, and recovery from failed runs. This aligns training with SWE-Bench-style workflows rather than pure static code modeling.
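A verifiable task in this sense pairs a problem with an executable check, so the outcome can be scored automatically. The sketch below shows the general idea with a binary pass/fail reward. The `run_task` helper and the reward scheme are hypothetical illustrations, not the Qwen training pipeline, and the code runs candidates without any sandboxing.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

def run_task(solution: str, test_code: str, timeout: float = 10.0) -> float:
    """Return 1.0 if the tests pass against the candidate solution, else 0.0.

    Hypothetical helper: no sandboxing, so only use it with trusted code.
    """
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "check.py"
        script.write_text(solution + "\n\n" + test_code + "\n")
        try:
            result = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True, timeout=timeout, cwd=workdir,
            )
        except subprocess.TimeoutExpired:
            return 0.0
    return 1.0 if result.returncode == 0 else 0.0

tests = "assert add(2, 3) == 5"
good = "def add(a, b):\n    return a + b"
bad = "def add(a, b):\n    return a - b"

print(run_task(good, tests))  # 1.0
print(run_task(bad, tests))   # 0.0
```

A production setup would isolate execution in containers and typically record richer signals than a single pass/fail bit.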

Benchmarks: SWE-Bench, Terminal-Bench, and Aider

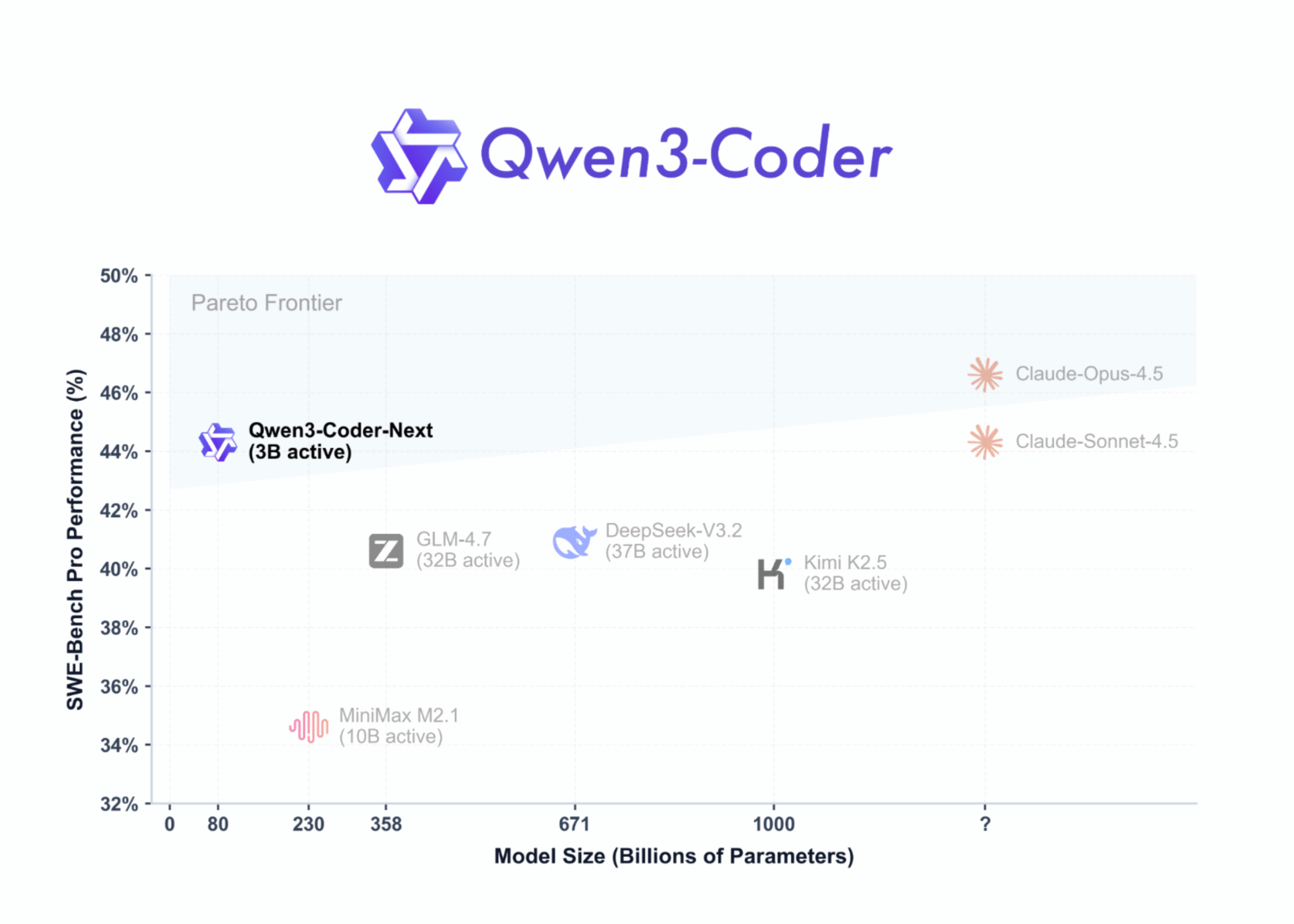

On SWE-Bench Verified with the SWE-Agent scaffold, Qwen3-Coder-Next scores 70.6. DeepSeek-V3.2, with 671B parameters, scores 70.2, and GLM-4.7, with 358B parameters, scores 74.2. On SWE-Bench Multilingual, Qwen3-Coder-Next reaches 62.8, very close to DeepSeek-V3.2 at 62.3 and GLM-4.7 at 63.7. On the harder SWE-Bench Pro, Qwen3-Coder-Next scores 44.3, ahead of DeepSeek-V3.2 at 40.9 and GLM-4.7 at 40.6.

On Terminal-Bench 2.0 with the Terminus-2 JSON scaffold, Qwen3-Coder-Next scores 36.2, again competitive with much larger models. On the Aider benchmark it reaches 66.2, close to the best models in its class.

These results support the Qwen team’s claim that Qwen3-Coder-Next achieves performance comparable to models with 10-20 times more active parameters, especially in coding and agentic settings.

Tool Use and Agent Integrations

Qwen3-Coder-Next is tuned for tool calling and integration with coding agents. The model is designed to plug into IDE and CLI environments such as Qwen-Code, Claude-Code, Cline, and other agent front ends. The 256K context window lets these systems keep large codebases, logs, and conversations in a single session.

Qwen3-Coder-Next supports only non-thinking mode. Both the official model card and the Unsloth documentation stress that it does not generate <think></think> blocks. This simplifies integration for agents that already assume direct tool calls and responses without hidden reasoning segments.

Deployment: SGLang, vLLM, and Local GGUF

For server deployment, the Qwen team recommends SGLang and vLLM. For SGLang, users run sglang>=0.5.8 with --tool-call-parser qwen3_coder and a default context length of 256K tokens. For vLLM, users run vllm>=0.15.0 with --enable-auto-tool-choice and the same tool parser. Both setups expose an OpenAI-compatible /v1 endpoint.
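Because both servers expose an OpenAI-compatible endpoint, a client can send a standard chat-completions request with a tool definition. The sketch below uses only the Python standard library. The base URL, the port, the model name `Qwen/Qwen3-Coder-Next`, and the `run_tests` tool are all assumptions for illustration and should be adjusted to match the actual deployment.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"  # assumption: local server address and port
MODEL = "Qwen/Qwen3-Coder-Next"         # assumption: served model name

def build_payload(prompt: str) -> dict:
    """Build a chat-completions request body with one hypothetical tool."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "tools": [{
            "type": "function",
            "function": {
                "name": "run_tests",  # hypothetical tool name
                "description": "Run the project's test suite and return the output.",
                "parameters": {
                    "type": "object",
                    "properties": {"path": {"type": "string"}},
                    "required": ["path"],
                },
            },
        }],
        "tool_choice": "auto",
    }

def send(payload: dict) -> dict:
    """POST the payload to the chat-completions route; needs a running server."""
    request = urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)

payload = build_payload("Fix the failing test in tests/test_parser.py")
print(json.dumps(payload, indent=2)[:200])
```

With a server running, `send(payload)` returns the response body, and any tool calls the model makes appear under `choices[0].message.tool_calls` in the OpenAI-compatible format.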

For local deployment, Unsloth provides GGUF quantizations of Qwen3-Coder-Next and a complete llama.cpp and llama-server workflow. The 4-bit variant needs roughly 46 GB of RAM or unified memory, while the 8-bit variant needs roughly 85 GB. The Unsloth guide lists a maximum context size of 262,144 tokens and recommends 32,768 tokens as a practical default for smaller machines.
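The quoted memory figures are consistent with simple arithmetic on the parameter count. The sketch below assumes a uniform bit width across all 80B weights. Real GGUF quantizations mix precisions per tensor, so actual file sizes will differ, and the remainder is only a rough indication of space left for context and runtime overhead.

```python
TOTAL_PARAMS = 80e9  # total parameter count from the article

def weight_gb(bits_per_weight: float, params: float = TOTAL_PARAMS) -> float:
    """Weight storage in GB, assuming one uniform bit width (a simplification)."""
    return params * bits_per_weight / 8 / 1e9

for bits, quoted_gb in [(4, 46), (8, 85)]:
    base = weight_gb(bits)
    print(f"{bits}-bit: ~{base:.0f} GB of weights, "
          f"{quoted_gb - base:.0f} GB left within the quoted {quoted_gb} GB")
```

The weights alone account for about 40 GB at 4 bits and 80 GB at 8 bits, leaving a few gigabytes within the quoted figures.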

The Unsloth guide also shows how to hook Qwen3-Coder-Next into local agents that emulate OpenAI Codex and Claude Code. These examples rely on llama-server with an OpenAI-compatible interface and reuse the agents' prompt templates while swapping the model name to Qwen3-Coder-Next.

Key Takeaways

- MoE architecture with low active compute: Qwen3-Coder-Next has 80B total parameters in a sparse MoE design, but only 3B parameters are active per token. This reduces inference cost while retaining high capacity through specialized experts.
- Hybrid attention stack for long-horizon coding: The model combines Gated DeltaNet, Gated Attention, and MoE blocks across 48 layers with a hidden dimension of 2048, and is optimized for long-horizon reasoning in code editing and agent workflows.
- Agentic training with executable tasks and RL: Qwen3-Coder-Next is trained with large-scale executable tasks and reinforcement learning on top of Qwen3-Next-80B-A3B-Base, so it can go beyond completing short code snippets to planning, calling tools, running tests, and recovering from failures.
- Competitive performance on SWE-Bench and Terminal-Bench: Benchmarks show that Qwen3-Coder-Next reaches strong scores on SWE-Bench Verified, SWE-Bench Pro, SWE-Bench Multilingual, Terminal-Bench 2.0, and Aider, often matching or outperforming much larger MoE models with 10-20 times more active parameters.
- Practical deployment for agent and local use: The model supports 256K context, non-thinking mode, OpenAI-compatible APIs through SGLang and vLLM, and GGUF quantization for llama.cpp. It is released under the Apache-2.0 license and suits IDE agents, CLI tools, and local private coding copilots.
