How can groups run trillion-parameter language fashions on current mixed-GPU clusters with out costly new {hardware} or deep vendor lock-in? The analysis staff at Perplexity has launched TransferEngine and the encircling pplx backyard toolkit as an open supply infrastructure for large-scale language mannequin programs. This offers a approach to run fashions with as much as 1 trillion parameters throughout combined GPU clusters with out being tied to a single cloud supplier or buying new GB200-class {hardware}.

The true bottleneck is the community cloth, not FLOP

Trendy deployments of knowledgeable combine fashions equivalent to DeepSeek V3 with 671 billion parameters and Kimi K2 with 1 trillion parameters not match on a single 8 GPU server. The primary constraint is the community cloth between GPUs, because it must span a number of nodes.

The {hardware} state of affairs is fragmented right here. NVIDIA ConnectX 7 sometimes makes use of the Dependable Connection transport, which delivers sequentially. The AWS Elastic Material Adapter makes use of a scalable and dependable datagram transport that’s dependable however defective. Additionally, to succeed in 400 Gbps with one GPU, you might want 4 community adapters at 100 Gbps or two community adapters at 200 Gbps.

Current libraries equivalent to DeepEP, NVSHMEM, MoonCake, and NIXL are typically optimized for one vendor and have decreased or lack of assist for the opposite vendor. The Perplexity analysis staff immediately states of their analysis paper that previous to this work, there was no viable cross-provider resolution for LLM inference.

TransferEngine, a transportable RDMA layer for LLM programs

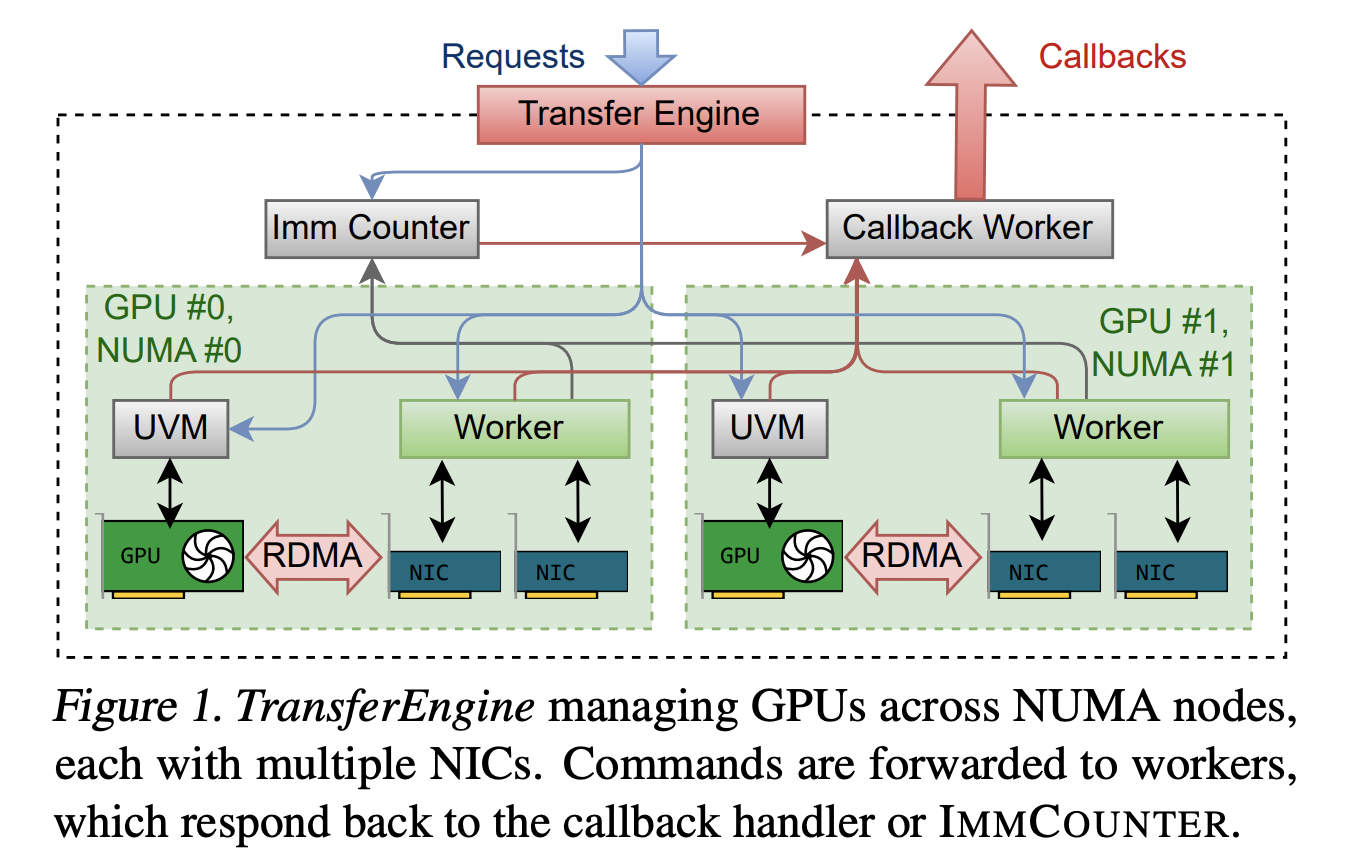

TransferEngine addresses this subject by concentrating on solely the intersection of ensures between community interface controllers. It assumes that the underlying RDMA transport is dependable, however makes no assumptions about message order. Along with this, it exposes a one-sided WriteImm operation and an ImmCounter primitive for completion notification.

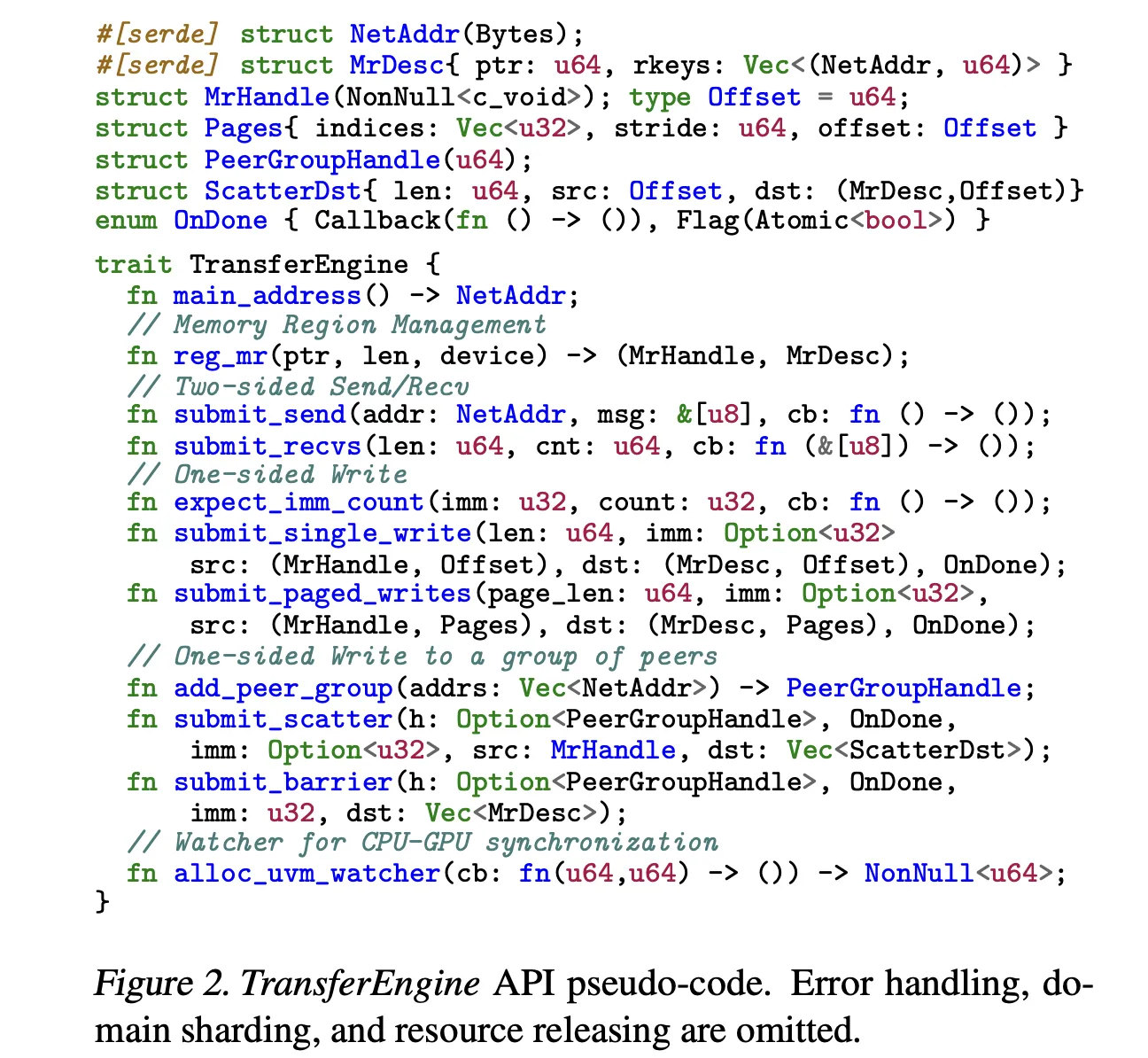

This library offers a minimal API in Rust. It offers two-sided sending and receiving of management messages, and three main single-sided operations: submit_single_write, submit_paged_writes, submit_scatter, in addition to the submit_barrier primitive for synchronization between teams of friends. The NetAddr construction identifies the peer and the MrDesc construction describes the registered reminiscence space. The alloc_uvm_watcher name creates a device-side watcher for CPU-GPU synchronization in superior pipelines.

Internally, TransferEngine spawns one employee thread per GPU and constructs a DomainGroup per GPU that coordinates one to 4 RDMA community interface controllers. A single ConnectX 7 offers 400 Gbps. With EFA, a DomainGroup aggregates 4 community adapters at 100 Gbps or two community adapters at 200 Gbps to succeed in the identical bandwidth. The sharding logic is conscious of all community interface controllers and may cut up transfers between them.

Throughout the {hardware}, the researchers report peak throughput of 400 Gbps with each NVIDIA ConnectX 7 and AWS EFA. That is in line with a single-platform resolution and ensures that the abstraction layer doesn’t go away behind important efficiency.

pplx backyard, open supply package deal

TransferEngine is shipped as a part of the pplx backyard repository on GitHub below the MIT license. The listing construction is easy. Material-lib comprises the RDMA TransferEngine library, p2p-all-to-all implements a mixture of specialists into all kernels, python-ext offers Python extension modules from the Rust core, and python/pplx_garden comprises the Python package deal code.

System necessities replicate the most recent GPU clusters. The Perplexity analysis staff recommends Linux kernel 5.12 or later, CUDA 12.8 or later, libfabric, libibverbs, GDRCopy, and RDMA cloth with GPUDirect RDMA enabled for DMA BUF assist. Every GPU requires at the very least one devoted RDMA community interface controller.

Granular prefill and decoding

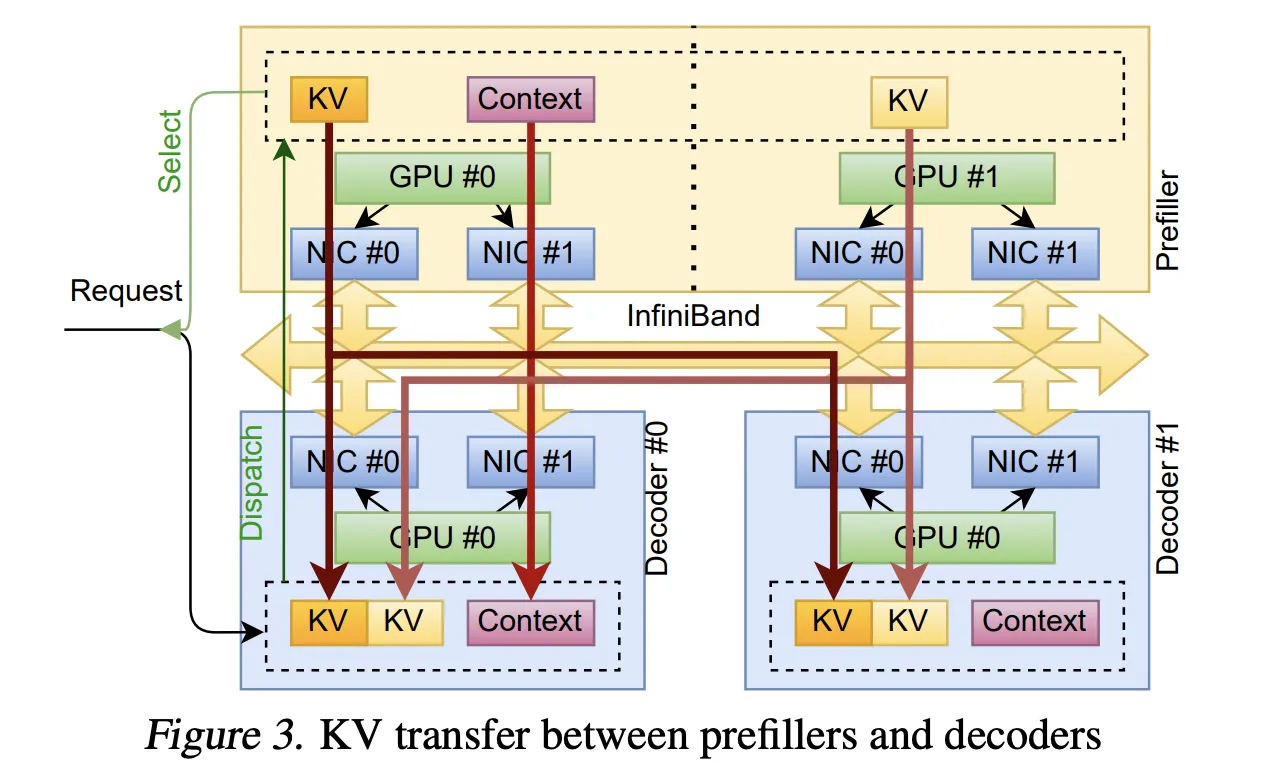

The primary operational use case is decomposed inference. As a result of prefill and decode are carried out on separate clusters, the system should stream KvCache from the prefill GPU for quick GPU decoding.

TransferEngine makes use of alloc_uvm_watcher to trace mannequin progress. Throughout prefill, the mannequin will increase the watcher worth after every layer’s consideration output projection. When a employee detects a change, it points a web page write to the KvCache web page for that layer, after which a single write to the remainder of the context. This method permits layer-by-layer streaming of cache pages with out fixing world membership, and avoids strict ordering constraints on aggregates.

Quick weight switch for reinforcement studying

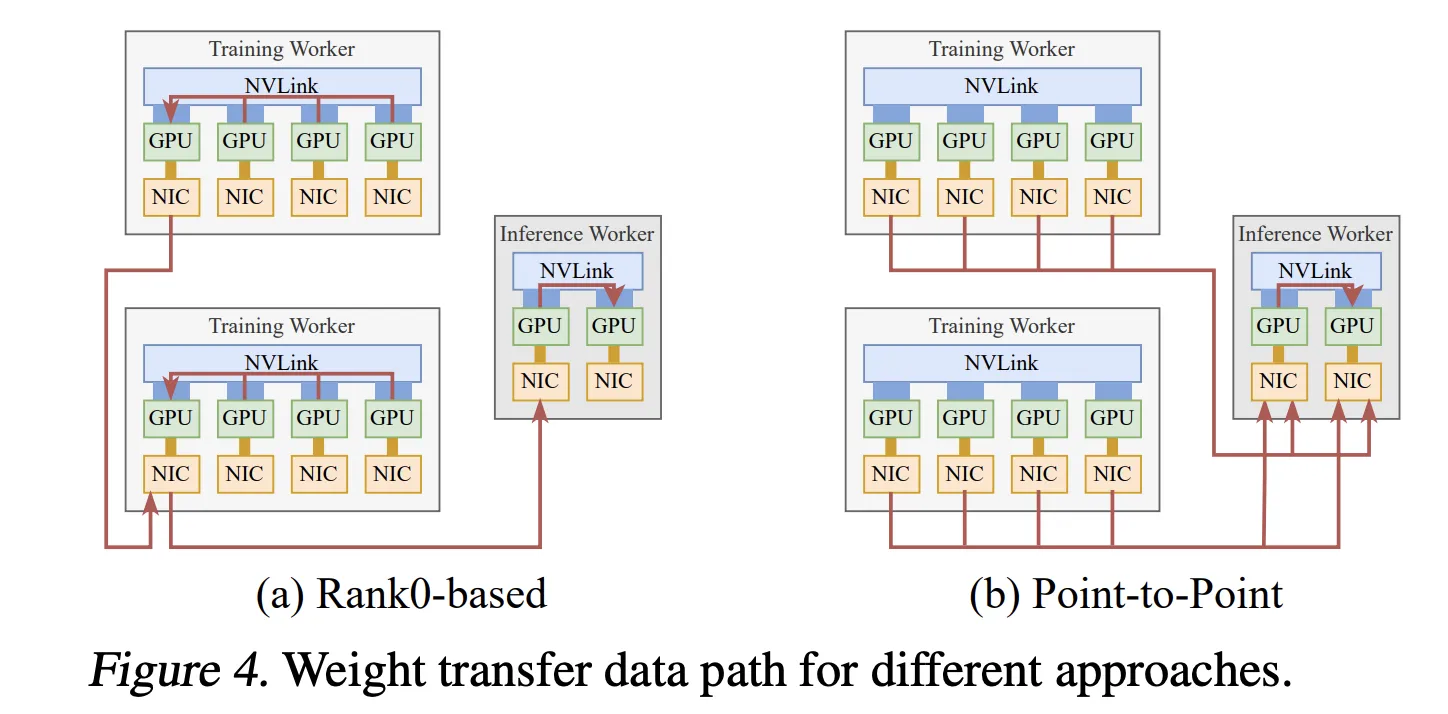

The second system is a fine-tuning of asynchronous reinforcement studying the place coaching and inference are carried out on separate GPU swimming pools. Conventional designs accumulate up to date parameters into one rank after which broadcast them, which limits throughput to a single community interface controller.

The Perplexity analysis staff as an alternative makes use of TransferEngine to carry out point-to-point weight transfers. Every coaching GPU writes its parameter shard on to its corresponding inference GPU utilizing one-sided writes. Pipeline execution splits every tensor into levels and copies it from the host to the machine as totally sharded knowledge parallel offloads weights, performs reconstruction and non-obligatory quantization, RDMA transfers, and obstacles applied by Scatter and ImmCounter.

In manufacturing, this setup offers weight updates for fashions equivalent to Kim K2 with 1 trillion parameters and DeepSeek V3 with 671 billion parameters in about 1.3 seconds from 256 coaching GPUs to 128 inference GPUs.

Mixture of specialists routing between ConnectX and EFA

The third a part of pplx backyard is a mix of point-to-point knowledgeable dispatch and be part of kernels. Use NVLink for intra-node site visitors and RDMA for inter-node site visitors. Dispatch and mix are cut up into separate transmit and obtain phases, permitting the decoder to carry out micro-batch and overlap communication with grouped widespread matrix multiplications.

The host proxy thread polls the GPU standing and calls TransferEngine when the ship buffer is prepared. First, routes are exchanged, after which every rank calculates the successive obtain offset for every knowledgeable and writes tokens to a personal buffer that may be reused between dispatch and be part of. This reduces the reminiscence footprint and maintains sufficient writes to make use of your complete hyperlink bandwidth.

For ConnectX 7, the Perplexity analysis staff reviews state-of-the-art decoding latencies similar to DeepEP throughout knowledgeable counts. With AWS EFA, the identical kernel offers the primary viable MoE decoding latency with larger however sensible values.

Multi-node testing with DeepSeek V3 and Kim K2 on AWS H200 situations reduces latency at medium batch sizes, a typical regime for manufacturing providers, by distributing fashions throughout nodes.

Comparability desk

Essential factors

TransferEngine offers a single RDMA point-to-point abstraction that works with each NVIDIA ConnectX 7 and AWS EFA to transparently handle a number of community interface controllers per GPU. This library makes use of ImmCounter to reveal single-sided WriteImm to realize peak 400 Gbps throughput on each NIC households. This enables it to compete with a single vendor’s stack whereas sustaining portability. The Perplexity staff makes use of TransferEngine on three manufacturing programs, combining disaggregated prefill decoding with KvCache streaming, reinforcement studying weight switch to replace trillion-parameter fashions in about 1.3 seconds, and Combination of Consultants dispatch for large-scale fashions like Kimi K2. With ConnectX 7, pplx backyard’s MoE kernel offers state-of-the-art decoding latency, exceeding DeepEP on the identical {hardware}. In the meantime, EFA delivers the primary sensible MoE latency for multi-trillion parameter workloads. As a result of TransferEngine is open supply below the MIT license and positioned in pplx backyard, groups can run very massive mixture of specialists and dense fashions on heterogeneous H100 or H200 clusters throughout cloud suppliers with out having to rewrite every vendor-specific networking stack.

The discharge of Perplexity’s TransferEngine and pplx backyard is a sensible contribution to LLM infrastructure groups which are hampered by vendor-specific networking stacks and costly cloth upgrades. A transportable RDMA abstraction that reaches peaks of 400 Gbps on each NVIDIA ConnectX 7 and AWS EFA, helps KvCache streaming, quick reinforcement studying weight switch, and knowledgeable combination routing, immediately addressing the trillions of parameter provisioning constraints of real-world programs.

Try our papers and reviews. Be at liberty to go to our GitHub web page for tutorials, code, and notebooks. Additionally, be happy to comply with us on Twitter. Additionally, do not forget to hitch the 100,000+ ML SubReddit and subscribe to our publication. grasp on! Are you on telegram? Now you can additionally take part by telegram.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is dedicated to harnessing the potential of synthetic intelligence for social good. His newest endeavor is the launch of Marktechpost, a man-made intelligence media platform. It stands out for its thorough protection of machine studying and deep studying information, which is technically sound and simply understood by a large viewers. The platform boasts over 2 million views per 30 days, demonstrating its reputation amongst viewers.

🙌 Comply with MARKTECHPOST: Add us as your most well-liked supply on Google.