This blog post focuses on new features and improvements. For a comprehensive list including bug fixes, see the release notes.

Clarifai Reasoning Engine: Optimized for agentic AI inference

Clarifai Reasoning Engine is a full-stack performance framework built to deliver record-setting inference speed and efficiency for reasoning and agentic AI workloads.

Unlike traditional inference systems that plateau after deployment, Clarifai Reasoning Engine continuously learns from workload behavior and dynamically optimizes kernels, batching, and memory utilization. This adaptive approach means the system becomes faster and more efficient over time, especially for repetitive or structured agentic tasks, without sacrificing accuracy.

In a recent Artificial Analysis benchmark on GPT-OSS-120B, the Clarifai Reasoning Engine set a new industry record for GPU inference performance:

544 tokens/sec throughput – fastest measured GPU-based inference

0.36 seconds time to first token – near-instantaneous responsiveness

$0.16 per million tokens – lowest blended cost

These results not only outperformed every other GPU-based inference provider but also rivaled specialized ASIC accelerators, showing that modern GPUs can achieve comparable or better reasoning performance when paired with optimized kernels.
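As a rough back-of-the-envelope illustration of what these figures mean for a single request, the sketch below estimates response time and cost from the published numbers. It assumes that throughput stays constant after the first token and that the blended price applies uniformly to all tokens. The function names are hypothetical and not part of any Clarifai API.

```python
# Benchmark figures quoted above (GPT-OSS-120B on Clarifai Reasoning Engine).
TOKENS_PER_SEC = 544.0        # output throughput
TTFT_SEC = 0.36               # time to first token
USD_PER_MILLION_TOKENS = 0.16 # blended price


def estimated_response_seconds(output_tokens, tokens_per_sec=TOKENS_PER_SEC,
                               ttft_sec=TTFT_SEC):
    """Estimate wall-clock time for one response.

    Assumption: steady throughput once the first token has arrived.
    """
    return ttft_sec + output_tokens / tokens_per_sec


def estimated_cost_usd(total_tokens, usd_per_million=USD_PER_MILLION_TOKENS):
    """Estimate cost, assuming the blended price applies to every token."""
    return total_tokens * usd_per_million / 1_000_000


if __name__ == "__main__":
    print(f"{estimated_response_seconds(1000):.2f} s for 1,000 output tokens")
    print(f"${estimated_cost_usd(1000):.5f} for 1,000 tokens")
```

Under these assumptions, a 1,000-token response would take roughly 0.36 + 1000/544 ≈ 2.2 seconds. Real workloads will vary with prompt length, concurrency, and network latency.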

The Reasoning Engine's design is model-agnostic. Although GPT-OSS-120B served as the benchmark reference, the same optimizations have been extended to other large reasoning models such as Qwen3-30B-A3B-Thinking-2507, where a 60% increase in throughput was observed compared to the base implementation. Developers can also bring their own reasoning models and see similar performance improvements using Clarifai's compute orchestration and kernel optimization stack.

At its core, the Clarifai Reasoning Engine represents a new standard for running reasoning and agentic AI workloads: faster, cheaper, adaptive, and open to any model.

Try the GPT-OSS-120B model directly on Clarifai and experience the performance of the Clarifai Reasoning Engine. You can also bring your own model or talk to an AI expert to apply these adaptive optimizations and see how throughput and latency improve on real-world workloads.

Toolkits

Added support for initializing models with the vLLM, LM Studio, and Hugging Face toolkits for Local Runners.

Hugging Face Toolkit

Added a Hugging Face Toolkit to the Clarifai CLI. It makes it easy to initialize, customize, and serve Hugging Face models through Local Runners.

Supported Hugging Face models can now be downloaded and run directly on your own hardware, including laptops, workstations, and edge boxes, and securely exposed through Clarifai's public API. Models run locally, data stays private, and the Clarifai platform handles routing, authentication, and governance.

Why use the Hugging Face Toolkit:

Use local compute – Run open-weight models on your own GPU or CPU while keeping them accessible through the Clarifai API.

Preserve privacy – All inference happens on your machine. Only metadata passes through Clarifai's secure control plane.

Skip manual setup – Initialize the model directory with one CLI command. Dependencies and configuration are scaffolded automatically.

Step-by-step: Run a Hugging Face model locally

1. Install the Clarifai CLI

Make sure you have Python 3.11 or higher and the latest Clarifai CLI installed.
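A quick way to confirm both prerequisites is a small standard-library check like the sketch below. It assumes the CLI ships in a PyPI distribution named `clarifai`. The helper names are hypothetical.

```python
import sys
from importlib import metadata


def python_is_supported(version_info=sys.version_info, minimum=(3, 11)):
    """Return True if the interpreter meets the minimum (major, minor) version."""
    return tuple(version_info[:2]) >= minimum


def installed_version(package="clarifai"):
    """Return the installed version string, or None if the package is absent.

    Assumption: the Clarifai CLI is provided by the 'clarifai' distribution.
    """
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    print("Python 3.11+:", python_is_supported())
    print("clarifai version:", installed_version() or "not installed")
```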

2. Authenticate with Clarifai

Log in and create a configuration context for your Local Runner.

You will be asked to enter your user ID, app ID, and personal access token (PAT). These can also be set as environment variables.
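If you go the environment-variable route, a small pre-flight check can catch missing values before you start a runner. The sketch below is illustrative only: the variable names `CLARIFAI_USER_ID`, `CLARIFAI_APP_ID`, and `CLARIFAI_PAT` are assumptions, so confirm the exact names in the Clarifai documentation.

```python
import os

# Assumed variable names; verify them against the Clarifai docs.
REQUIRED_VARIABLES = ("CLARIFAI_USER_ID", "CLARIFAI_APP_ID", "CLARIFAI_PAT")


def missing_variables(env=None, required=REQUIRED_VARIABLES):
    """Return the required variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


if __name__ == "__main__":
    missing = missing_variables()
    if missing:
        print("Missing environment variables:", ", ".join(missing))
    else:
        print("All credentials are set.")
```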

3. Get a Hugging Face access token

If you are using a model from a private repository, create a token at huggingface.co/settings/tokens and export it as an environment variable.

4. Initialize the model with the Hugging Face Toolkit

Use the new CLI flag --toolkit huggingface to scaffold the model directory.

This command generates a ready-to-run folder containing model.py, config.yaml, and requirements.txt, pre-configured for the Local Runner. You can modify model.py to fine-tune the behavior or change the checkpoint in config.yaml.
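To confirm that scaffolding succeeded, you can check for the three files named above. The sketch below searches the folder recursively because the exact layout is not specified here. The helper name is hypothetical.

```python
from pathlib import Path

# File names taken from the description above.
EXPECTED_FILES = ("model.py", "config.yaml", "requirements.txt")


def missing_scaffold_files(model_dir):
    """Return the expected file names not found anywhere under model_dir."""
    root = Path(model_dir)
    return [
        name
        for name in EXPECTED_FILES
        if not any(path.is_file() for path in root.rglob(name))
    ]


if __name__ == "__main__":
    missing = missing_scaffold_files(".")
    print("Scaffold looks complete." if not missing else f"Missing: {missing}")
```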

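5. Install dependencies

Install the packages listed in the generated requirements.txt, for example with pip install -r requirements.txt.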

6. Start a Local Runner

When the runner registers with Clarifai, the CLI prints a ready-to-use public API endpoint.

7. Test the model

Like Clarifai-hosted models, it can be called through the SDK.

Requests are routed to your local machine behind the scenes. The model runs entirely on your hardware. For a complete setup guide, configuration options, and troubleshooting tips, see the Hugging Face Toolkit documentation.

vLLM Toolkit

Run Hugging Face models on the high-performance vLLM inference engine

vLLM is an open-source runtime optimized for serving large language models with high throughput and memory efficiency. Unlike typical runtimes, vLLM uses continuous batching and advanced GPU scheduling to deliver faster, cheaper inference, which makes it well suited to local deployment and experimentation.
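To see why continuous batching helps, consider the toy simulation below. It is a simplified model, not vLLM's actual scheduler: each request needs a fixed number of decoding steps, and each step produces one token for every active request. Static batching waits for the longest request in a batch, while continuous batching hands a freed slot to the next queued request immediately. The function names are hypothetical.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Total steps when each batch runs until its longest request finishes."""
    steps = 0
    for start in range(0, len(lengths), batch_size):
        steps += max(lengths[start:start + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Total steps when finished requests free their slot right away.

    Assumes every length is a positive integer (tokens left to generate).
    """
    queue = deque(lengths)
    active = []  # remaining tokens for each in-flight request
    steps = 0
    while queue or active:
        # Fill any free slots before the next decoding step.
        while queue and len(active) < batch_size:
            active.append(queue.popleft())
        # One decoding step: every active request emits one token.
        active = [remaining - 1 for remaining in active]
        # Drop requests that have finished.
        active = [remaining for remaining in active if remaining > 0]
        steps += 1
    return steps


if __name__ == "__main__":
    lengths = [8, 2, 2, 2]  # one long request and three short ones
    print("static:    ", static_batching_steps(lengths, batch_size=2))
    print("continuous:", continuous_batching_steps(lengths, batch_size=2))
```

In this example, static batching needs 10 steps and continuous batching needs 8, because the short requests reuse the slot beside the long one instead of waiting for a new batch.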

Clarifai's vLLM Toolkit lets you initialize and run Hugging Face-compatible models on your own machine using vLLM's optimized backend. The models run locally but behave like hosted Clarifai models through secure public API endpoints.

See the vLLM Toolkit documentation to learn how to initialize a vLLM model and serve it with a Local Runner.

LM Studio Toolkit

Run open-weight models from LM Studio and expose them through the Clarifai API

LM Studio is a popular desktop application for running and chatting with open-source LLMs locally, with no internet connection required. Clarifai's LM Studio Toolkit lets you connect locally running models to the Clarifai platform and call them through public APIs while keeping data and execution entirely on-device.

Developers can use this integration to turn LM Studio models into production-ready APIs with minimal setup.

Read the LM Studio Toolkit guide to learn how to run LM Studio models with Local Runners and which setups are supported.

New fashions on the platform

We've added several powerful new models optimized for reasoning, long-context tasks, and multimodal capabilities.

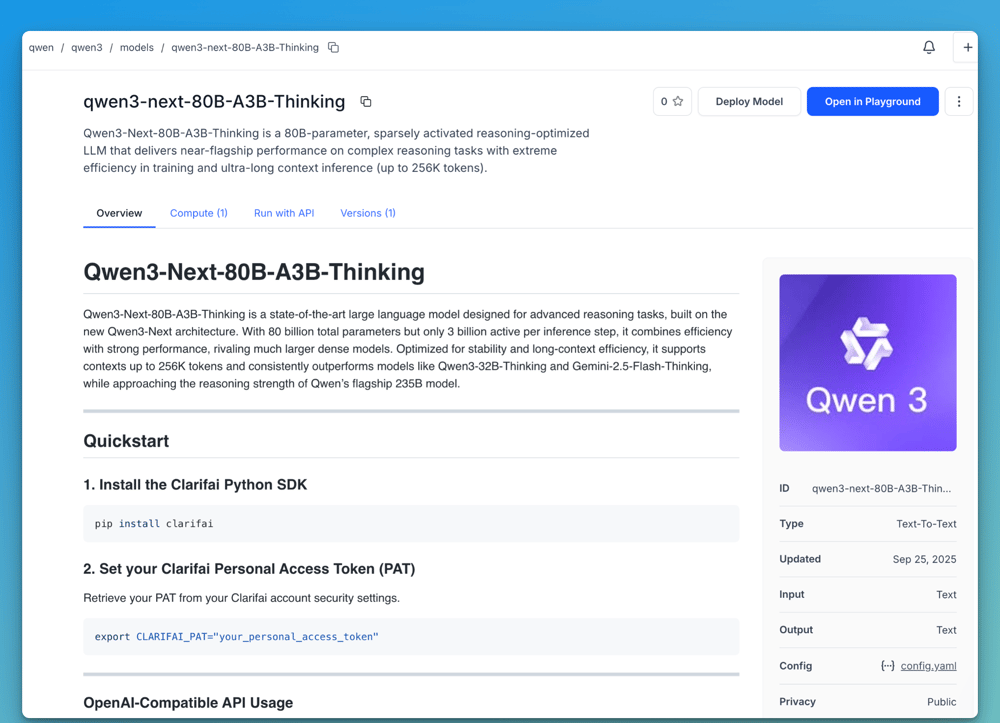

Qwen3-Next-80B-A3B-Thinking – A sparsely activated reasoning model with 80B parameters that delivers near-flagship performance on complex tasks, with high efficiency in training and ultra-long-context inference (up to 256K tokens).

Qwen3-30B-A3B-Instruct-2507 – 256K-token long-context processing with improved comprehension, coding, multilingual knowledge, and user alignment.

Qwen3-30B-A3B-Thinking-2507 – Further improvements in reasoning, general capabilities, alignment, and long-context understanding.

New cloud instances: B200 and GH200

We've added new cloud instances to give developers more options for GPU-based workloads.

B200 instances – Running from Seattle and competitively priced.

GH200 instances – Running on Vultr for high-performance tasks.

Learn more about enterprise-grade GPU hosting for your AI models and request access, or contact an AI expert to discuss your workload needs.

Additional changes

Ready to start building?

Clarifai Reasoning Engine lets you run reasoning and agentic AI workloads faster, more efficiently, and at lower cost while keeping full control of your models. The Reasoning Engine continuously optimizes throughput and latency, whether you're using GPT-OSS-120B, Qwen models, or your own custom models.

Bring your own models and see how adaptive optimization improves performance on real-world workloads. Talk to an AI expert to learn how the Clarifai Reasoning Engine can optimize the performance of your custom models.