This tutorial shows how to use PydanticAI to design a contract-first agentic decision system that treats structured schemas as non-negotiable governance contracts rather than optional output formats. We define strict decision models that encode policy compliance, risk assessment, confidence calibration, and actionable next steps directly in the agent's output schema. Pydantic validators, combined with PydanticAI's retry and self-correction mechanisms, ensure that the agent cannot return logically inconsistent or non-compliant decisions. Throughout the workflow, we focus on building enterprise-grade decision agents that reason under constraints, which makes them suitable for real-world risk, compliance, and governance scenarios rather than toy prompt-based demos.

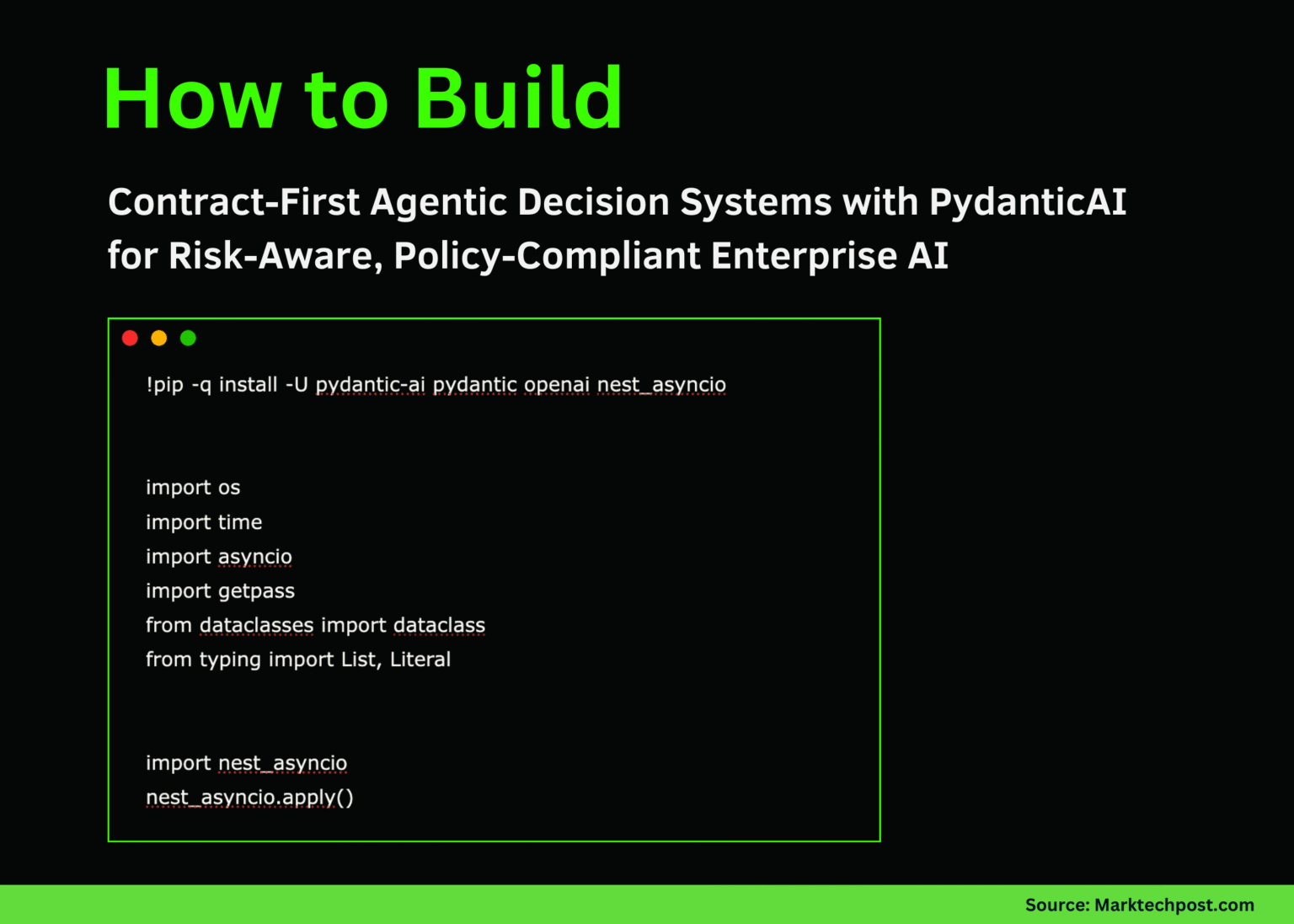

We set up the execution environment by installing the required libraries and configuring Google Colab for asynchronous execution. We securely load the OpenAI API key and make sure the runtime can handle async agent calls. This gives us a stable foundation for running contract-first agents without environment-related issues.
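The setup step can be sketched roughly as follows. This is a minimal sketch, not the tutorial's original cell: the package list in the comment, the helper names `enable_nested_event_loop` and `load_openai_key`, and the use of `nest_asyncio` are all assumptions made for illustration.

```python
import os
from getpass import getpass

# In a notebook, the libraries would typically be installed first, for example:
#   %pip install -q pydantic-ai pydantic nest_asyncio
# (this package list is an assumption; the text above does not spell it out).


def enable_nested_event_loop() -> bool:
    """Let async agent calls run inside a notebook's already-running event loop.

    Returns False instead of failing when nest_asyncio is not installed.
    """
    try:
        import nest_asyncio
    except ImportError:
        return False
    nest_asyncio.apply()
    return True


def load_openai_key(env_var: str = "OPENAI_API_KEY", prompt: bool = True) -> str:
    """Read the key from the environment, optionally falling back to a hidden prompt."""
    key = os.environ.get(env_var, "").strip()
    if not key and prompt:
        # getpass keeps the key out of the notebook output and history.
        key = getpass(f"Enter {env_var}: ").strip()
    if not key:
        raise RuntimeError(f"{env_var} is not set")
    os.environ[env_var] = key
    return key
```

In a notebook you would call `enable_nested_event_loop()` once, then `load_openai_key()` before constructing any agent.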

```python
import time
from typing import List, Literal

from pydantic import BaseModel, Field, field_validator


class RiskItem(BaseModel):
    # The top of this class is cut off in the excerpt. `severity` is inferred
    # from the validator below; its allowed values are an assumption.
    severity: Literal["low", "medium", "high"]
    mitigation: str = Field(..., min_length=12)


class DecisionOutput(BaseModel):
    # A field validator only sees previously validated fields in `info.data`,
    # so the fields that validators read are declared before the fields they guard.
    identified_risks: List[RiskItem] = Field(..., min_length=2)
    compliance_passed: bool
    decision: Literal["approve", "approve_with_conditions", "reject"]
    confidence: float = Field(..., ge=0.0, le=1.0)
    rationale: str = Field(..., min_length=80)
    # validate_default=True makes the validator run even when conditions are omitted.
    conditions: List[str] = Field(default_factory=list, validate_default=True)
    next_steps: List[str] = Field(..., min_length=3)
    timestamp_unix: int = Field(default_factory=lambda: int(time.time()))

    @field_validator("confidence")
    @classmethod
    def confidence_vs_risk(cls, v, info):
        # Cap confidence whenever a high-severity risk has been identified.
        risks = info.data.get("identified_risks") or []
        if any(r.severity == "high" for r in risks) and v > 0.70:
            raise ValueError("confidence too high given high-severity risks")
        return v

    @field_validator("decision")
    @classmethod
    def reject_if_non_compliant(cls, v, info):
        # A failed compliance check leaves "reject" as the only valid decision.
        if info.data.get("compliance_passed") is False and v != "reject":
            raise ValueError("non-compliant decisions must be rejected")
        return v

    @field_validator("conditions")
    @classmethod
    def conditions_required_for_conditional_approval(cls, v, info):
        d = info.data.get("decision")
        if d == "approve_with_conditions" and (not v or len(v) < 2):
            raise ValueError("approve_with_conditions requires at least 2 conditions")
        if d == "approve" and v:
            raise ValueError("approve must not include conditions")
        return v
```

We define the core decision contract as a strict Pydantic model that describes exactly what a valid decision looks like. Logical constraints such as confidence-risk alignment, compliance-driven rejection, and conditional approvals are encoded directly in the schema. This forces the agent's output to satisfy business logic, not just syntactic structure.
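To see how such a contract rejects an inconsistent decision, here is a reduced sketch. `MiniDecision`, its `highest_severity` field, and the `violations` helper are hypothetical and cut down for illustration. It also swaps in a single `model_validator(mode="after")` in place of per-field validators: that hook runs once every field is populated, so each rule can read any field regardless of declaration order.

```python
from typing import List, Literal

from pydantic import BaseModel, Field, ValidationError, model_validator


class MiniDecision(BaseModel):
    decision: Literal["approve", "approve_with_conditions", "reject"]
    confidence: float = Field(..., ge=0.0, le=1.0)
    compliance_passed: bool
    highest_severity: Literal["low", "medium", "high"]  # hypothetical summary field
    conditions: List[str] = Field(default_factory=list)

    @model_validator(mode="after")
    def enforce_business_rules(self) -> "MiniDecision":
        # Runs after all fields are set, so cross-field rules are order-independent.
        if self.highest_severity == "high" and self.confidence > 0.70:
            raise ValueError("confidence too high given high-severity risks")
        if not self.compliance_passed and self.decision != "reject":
            raise ValueError("non-compliant decisions must be rejected")
        if self.decision == "approve_with_conditions" and len(self.conditions) < 2:
            raise ValueError("approve_with_conditions requires at least 2 conditions")
        if self.decision == "approve" and self.conditions:
            raise ValueError("approve must not include conditions")
        return self


def violations(payload: dict) -> List[str]:
    """Return the validation messages for a payload, or an empty list if it is valid."""
    try:
        MiniDecision.model_validate(payload)
    except ValidationError as exc:
        return [err["msg"] for err in exc.errors()]
    return []


overconfident = {"decision": "approve", "confidence": 0.95,
                 "compliance_passed": False, "highest_severity": "high"}
consistent = {"decision": "reject", "confidence": 0.60,
              "compliance_passed": False, "highest_severity": "high"}

print(violations(overconfident))
print(violations(consistent))
```

The first payload is well-formed JSON with valid types, yet it is still refused, which is the difference between a syntactic schema and a governance contract.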

We inject enterprise context through a typed dependency object and initialize the PydanticAI agent backed by an OpenAI model. The agent is configured to produce only structured decision outputs that conform to the predefined contract. This step formalizes the separation between business context and model reasoning.

We add an output validator that acts as a governance checkpoint after the model produces a response. It forces the agent to identify meaningful risks and to reference concrete security controls explicitly whenever it claims compliance. Violating these constraints triggers an automatic retry, which pushes the model to self-correct.

We run the agent on a realistic decision request and capture the validated, structured output. This shows how the agent weighs risk, policy compliance, and confidence before reaching a final decision, and it completes the end-to-end contract-first decision workflow in a production-style setup.
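Once a run returns a validated object, capturing it for downstream systems can be as simple as the sketch below. The `Decision` model here is a reduced stand-in, `validated` takes the place of the `result.output` an agent run would return, and the helper names and the JSON-lines record layout are assumptions for illustration.

```python
import json
from typing import List, Literal

from pydantic import BaseModel, Field


class Decision(BaseModel):
    # Reduced stand-in for the full decision contract.
    decision: Literal["approve", "approve_with_conditions", "reject"]
    confidence: float = Field(..., ge=0.0, le=1.0)
    next_steps: List[str] = Field(default_factory=list)


def to_audit_line(request: str, output: Decision) -> str:
    """Serialize the request and the validated decision as one JSON line."""
    record = {"request": request, "output": output.model_dump()}
    return json.dumps(record, sort_keys=True)


def from_audit_line(line: str) -> Decision:
    """Re-validate a stored record against the contract when reading it back."""
    return Decision.model_validate(json.loads(line)["output"])


# `validated` stands in for `result.output` from an agent run.
validated = Decision(
    decision="reject",
    confidence=0.55,
    next_steps=["Request a data processing agreement"],
)
line = to_audit_line("Onboard vendor for customer analytics", validated)
restored = from_audit_line(line)
print(line)
```

Re-validating on read means a record that was edited or corrupted after the fact fails the same contract the agent had to satisfy.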

In conclusion, we showed how PydanticAI can move a system from free-form LLM output to a controlled, reliable decision process. By enforcing hard contracts at the schema level, decisions are automatically checked against policy requirements, risk severity, and confidence realism without manual prompt tuning. This approach lets us build agents that fail safely, self-correct when constraints are violated, and produce structured, auditable outputs that downstream systems can trust. Ultimately, contract-first agent design allows agentic AI to be deployed as a trusted decision layer in production and enterprise environments.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of artificial intelligence for social good. His most recent endeavor is the launch of Marktechpost, an artificial intelligence media platform that stands out for in-depth coverage of machine learning and deep learning news that is both technically sound and easy for a wide audience to understand. The platform draws over 2 million monthly views.