On this tutorial, we discover how brokers can internalize planning, reminiscence, and gear utilization inside a single neural mannequin, somewhat than counting on exterior orchestration. We design compact model-native brokers that study to carry out arithmetic reasoning duties via reinforcement studying. By combining a community of stage-aware actors and critics with a curriculum of more and more complicated environments, we allow brokers to find find out how to use internalized “instruments” and short-term reminiscence to reach on the proper answer end-to-end. We observe step-by-step how studying evolves from easy reasoning to multi-step compositional conduct. Try the whole code right here.

If stage==0: ctx=[a,b,c];goal=a*b+c elif stage==1: ctx=[a,b,c,d];goal=(a*b+c)-d else: ctx=[a,b,c,d,e]; goal=(a*b+c)-(d*e) return ctx, goal, (a,b,c,d,e) def step_seq(self, motion, abc, stage): a,b,c,d,e = abc;final=none; mem=none;step=0;form=0.0 Aim 0=a*b;Aim 1=Aim 0+c;Aim 2=Aim 1-d;Aim 3=d*e;For acts inside actions, goal4=goal1-goal3:steps+=1 if act==MUL: final=(a*b if final is None else final*(d if stage>0 else 1)) elif act==ADD and final is just not None: final+=c elif act==SUB and final is just not None: final -= (e if stage==2 and mem==”use_d” else (d if stage>0 else 0)) elif act==STO: mem=”use_d” if stage>=1 else “okay” elif act==RCL and mem is just not None: final = (d*e) if (stage==2 and mem==”use_d”) else (final if final else 0) elif act==ANS: goal=[goal0,goal1,goal2,goal4][stage] if stage==2 else [goal0,goal1,goal2][stage]

Appropriate=(final==goal) If stage==0: Formed += 0.25*(final==goal0)+0.5*(final==goal1) If stage==1: Formed += 0.25*(final==goal0)+0.5*(final==goal1)+0.75*(final==goal2) If stage==2: formed += 0.2*(final==goal0)+0.4*(final==goal1)+0.6*(final==goal4)+0.6*(final==goal3) return (1.0 if right, 0.0 if not)+0.2*form, step if steps>=self.max_steps: break return 0.0, step

First, arrange the setting and outline the symbolic instruments obtainable to the agent. We create a small artificial world the place every motion, reminiscent of multiplication, addition, and subtraction, acts as an inner instrument. This setting can simulate reasoning duties the place an agent should plan the order during which instruments are used to reach on the right reply. Try the whole code right here.

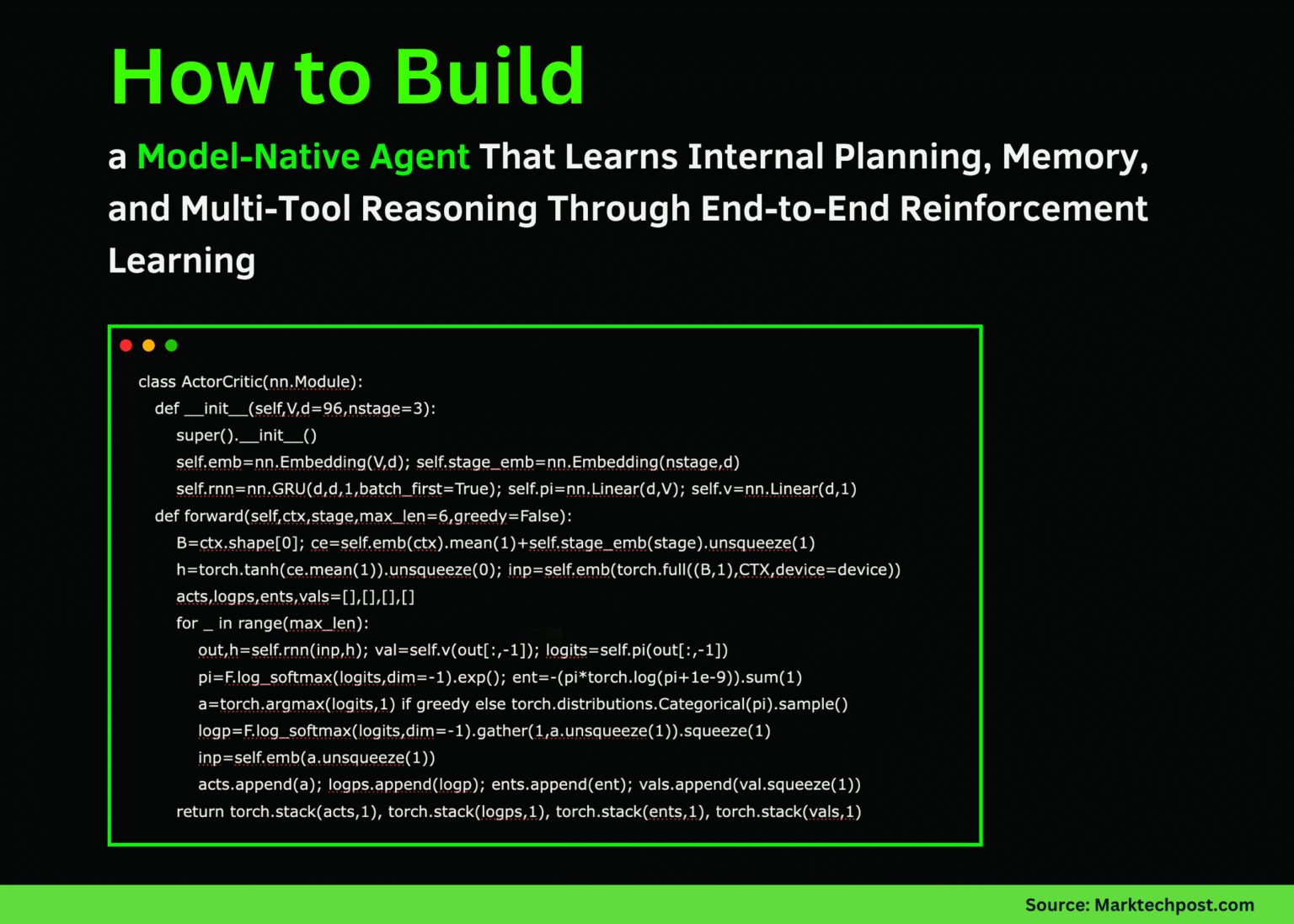

for _ in vary(max_len): out,h=self.rnn(inp,h); val=self.v(out[:,-1]); logits=self.pi(out[:,-1]) pi=F.log_softmax(logits,dim=-1).exp(); ent=-(pi*torch.log(pi+1e-9)).sum(1) a=torch.argmax(logits,1) torch.distributions.Categorical(pi).pattern() if grasping logp=F.log_softmax(logits,dim=-1).collect(1,a.unsqueeze(1)).squeeze(1) inp=self.emb(a.unsqueeze(1)) act.append(a); logps.append(logp); ents.append(ent); vals.append(val.squeeze(1)) return torch.stack(acts,1), torch.stack(logps,1), torch.stack(ents,1), torch.stack(vals,1)

Subsequent, design model-native insurance policies utilizing an actor-critical construction constructed round GRU. Embedding each tokens and job phases permits the community to adapt the depth of inference relying on the complexity of the duty. This configuration permits brokers to contextually study when and find out how to use inner instruments inside a single unified mannequin. Try the whole code right here.

for _ in vary(batch): c,t,abc=env.pattern(stage); ctxs.append(c);metas.append((t,abc)) ctx=pad_batch(ctxs); stage_t=torch.full((batch,),stage,system=system,dtype=torch.lengthy) act,logps,ents,vals=web(ctx,stage_t,max_len=6,grasping=grasping) Reward=[]

for i in vary(batch): traj = act[i].tolist() abc = metas[i][1]

r,_ = env.step_seq(traj,abc,stage) award.append(r) R=torch.tensor(rewards,system=system).float() adv=(R-vals.sum(1)).detach() If not skilled: return R.imply().merchandise(), 0.0 pg=-(logps.sum(1)*adv).imply(); vloss=F.mse_loss(vals.sum(1),R); ent=-ents.imply() loss=pg+0.5*vloss+0.01*ent decide.zero_grad(); loss.backward(); nn.utils.clip_grad_norm_(web.parameters(),1.0); decide.step() return R.imply().merchandise(), loss.merchandise()

Implement a reinforcement studying coaching loop utilizing Benefit Actor-Critic (A2C) updates. Practice brokers end-to-end throughout batches of artificial issues and replace insurance policies and worth networks concurrently. Right here we incorporate entropy regularization to facilitate exploration and stop untimely convergence. Try the whole code right here.

For ep in vary (1,61): stage=phases[min((ep-1)//10,len(stages)-1)]

acc,loss=run_batch(stage,batch=192,practice=True) if eppercent5==0: with torch.no_grad(): evals=[run_batch(s,train=False,greedy=True)[0] for [0,1,2]]print(f”ep={ep:02d} stage={stage} acc={acc:.3f} | eval T0={evals[0]:.3f} ” f”T1={evals[1]:.3f} T2={evals[2]:.3f} loss={loss:.3f}”)

We start the first coaching course of utilizing a curriculum technique that steadily will increase job problem. Throughout coaching, we consider the agent at each stage and observe its capability to generalize from easier to extra complicated inference steps. Printed metrics present how your inner plan improves over time. Try the whole code right here.

Lastly, we study the skilled agent and output an instance inference trajectory. Visualize the sequence of instrument tokens chosen by the mannequin and confirm whether or not it reaches the proper end result. Lastly, we consider the general efficiency and present that the mannequin efficiently integrates planning, reminiscence, and reasoning into internalized processes.

In conclusion, we present that neural networks may also study internalized planning and gear utilization conduct when skilled with reinforcement indicators. We have now efficiently moved past conventional pipeline-style architectures the place reminiscence, planning, and execution are separated to model-native brokers that combine these parts as a part of discovered dynamics. This strategy represents a shift in agent AI and reveals how end-to-end studying can produce emergent inference and self-organizing decision-making with out the necessity for hand-crafted management loops.

Try the whole code right here. Be happy to go to our GitHub web page for tutorials, code, and notebooks. Additionally, be happy to observe us on Twitter. Additionally, do not forget to affix the 100,000+ ML SubReddit and subscribe to our publication. dangle on! Are you on telegram? Now you can additionally take part by telegram.

Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is dedicated to harnessing the potential of synthetic intelligence for social good. His newest endeavor is the launch of Marktechpost, a synthetic intelligence media platform. It stands out for its thorough protection of machine studying and deep studying information, which is technically sound and simply understood by a large viewers. The platform boasts over 2 million views monthly, demonstrating its recognition amongst viewers.

🙌 Observe MARKTECHPOST: Add us as your most well-liked supply on Google.