Large-scale AI models are growing quickly, and bigger architectures and longer training runs are becoming the norm. However, as models grow, fundamental training stability issues remain unresolved. DeepSeek mHC addresses this problem directly by rethinking how residual connections work at scale. This article describes DeepSeek mHC (Manifold-Constrained Hyper-Connections) and shows how it can improve the stability and performance of large language model training without unnecessarily complicating the architecture.

The hidden problem with residual connections and hyper-connections

Residual connections have been a core building block of deep learning since the release of ResNet in 2016. They give the network shortcut paths, so information can flow directly through the layers instead of being relearned at every step. Simply put, a residual connection acts like an express lane on a highway, making deep networks easier to train.
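As a minimal sketch of the shortcut idea, the snippet below adds a layer's output to its own input. The names `residual_block`, `tiny_layer`, and `dead_layer` are illustrative placeholders, not part of any library, and the "layers" are toy functions rather than real network layers.

```python
def residual_block(x, layer):
    """Return x + layer(x): the input is carried forward unchanged and the
    layer only has to learn a correction on top of it."""
    fx = layer(x)
    return [xi + fi for xi, fi in zip(x, fx)]


def tiny_layer(x):
    # Hypothetical stand-in for a real layer: a small elementwise update.
    return [0.1 * xi for xi in x]


def dead_layer(x):
    # A layer that contributes nothing; the shortcut still passes x through.
    return [0.0 for _ in x]


x = [1.0, -2.0, 3.0]
print(residual_block(x, tiny_layer))  # input plus a small correction
print(residual_block(x, dead_layer))  # input passed through untouched
```

Even when the layer contributes nothing, the signal reaches the next block intact, which is the identity mapping property discussed later in the article.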

This approach worked well for many years. But as models scaled from millions to billions and now hundreds of billions of parameters, its limitations became apparent. To push performance further, researchers introduced hyper-connections (HC), which widen these information highways by adding more paths. Performance improved significantly, but stability did not.

Training became very erratic. A model trains normally and then suddenly collapses around a certain step, with sharp loss spikes and exploding gradients. For teams training large language models, this kind of failure can mean a lot of wasted compute, time, and resources.

What are Manifold-Constrained Hyper-Connections (mHC)?

mHC is a general framework that projects the residual connection space of HC onto a specific manifold to restore the identity mapping property, while also incorporating rigorous infrastructure optimizations to keep it efficient.

Empirical tests show that mHC is well suited to large-scale training and delivers not only clear performance improvements but also good scalability. The authors expect mHC, as a flexible and practical extension of HC, to deepen our understanding of topological architecture design and to suggest new directions for the development of foundation models.

What makes mHC different?

DeepSeek's approach is not just clever; it is the kind of idea that makes you wonder, "Why didn't anyone think of this before?" They kept hyper-connections but used a precise mathematical technique to constrain them.

This is the technical part (don't give up, it is worth the time to understand). A standard residual connection preserves what is called "identity mapping". Think of it as a conservation law: signals travel through the network at the same power level. When HC widened the residual stream and combined it with learnable connectivity patterns, it unintentionally broke this property.

DeepSeek researchers found that HC's composite mapping amplifies the signal by a factor of 3000 or more as these connections are stacked layer by layer. Imagine that every time someone passes your message along, the whole room shouts it 3000 times louder at once. The result is chaos.
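To see how stacking can compound gain, here is a toy sketch. It assumes every layer uses the same 2×2 mixing matrix, which real networks do not, and the matrix values are made up for illustration rather than taken from DeepSeek's experiments. The helper names are hypothetical.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def max_row_sum(m):
    """Largest absolute row sum: how much the matrix can scale an input
    whose entries all have magnitude 1."""
    return max(sum(abs(v) for v in row) for row in m)


def composite_gain(layer_matrix, depth):
    """Gain of the product of `depth` copies of layer_matrix."""
    prod = layer_matrix
    for _ in range(depth - 1):
        prod = matmul(layer_matrix, prod)
    return max_row_sum(prod)


# Illustrative values only (an assumption, not measured data).
unconstrained = [[1.1, 0.2], [0.2, 1.1]]      # each row sums to 1.3
doubly_stochastic = [[0.8, 0.2], [0.2, 0.8]]  # rows and columns sum to 1

gain_free = composite_gain(unconstrained, 30)
gain_ds = composite_gain(doubly_stochastic, 30)
print(f"unconstrained gain after 30 layers:     {gain_free:.1f}")
print(f"doubly stochastic gain after 30 layers: {gain_ds:.6f}")
```

A per-layer gain of only 1.3 compounds into a gain in the thousands after 30 layers, while the matrix whose rows and columns sum to 1 keeps the gain at 1.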

mHC solves the problem by projecting these connectivity matrices onto the Birkhoff polytope, the set of non-negative matrices in which every row and every column sums to 1. This may sound theoretical, but in practice it forces the network to treat signal propagation as a convex combination of features. Nothing explodes, and no signal disappears completely.
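The following sketch checks the row and column condition for a hand-picked matrix and shows what "convex combination" means for a signal. The matrix `H`, the input `x`, and the helper names are illustrative assumptions.

```python
def is_doubly_stochastic(m, tol=1e-9):
    """True if all entries are non-negative and every row and column sums to 1."""
    n = len(m)
    nonneg = all(v >= -tol for row in m for v in row)
    rows_ok = all(abs(sum(row) - 1.0) <= tol for row in m)
    cols_ok = all(abs(sum(m[i][j] for i in range(n)) - 1.0) <= tol
                  for j in range(n))
    return nonneg and rows_ok and cols_ok


def mix(m, x):
    """Apply the mixing matrix m to the vector x."""
    return [sum(m[i][j] * x[j] for j in range(len(x))) for i in range(len(m))]


H = [[0.7, 0.2, 0.1],
     [0.2, 0.5, 0.3],
     [0.1, 0.3, 0.6]]
x = [4.0, -1.0, 2.5]
y = mix(H, x)

print(is_doubly_stochastic(H))
print(y)               # every output lies between min(x) and max(x)
print(sum(x), sum(y))  # the total is conserved because columns sum to 1
```

Each output is a weighted average of the inputs, so it cannot exceed the largest input, and the column condition keeps the total signal from leaking away.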

Architecture: How mHC actually works

Let's take a closer look at how DeepSeek changed the connections in the model. The design relies on three main mappings that determine where information flows.

Three mapping systems

In hyper-connections, three learnable matrices perform different tasks.

- H_pre: Reads information from the expanded residual stream into the layer.
- H_post: Writes the layer's output back to the stream.
- H_res: Mixes and refreshes information within the stream itself.

Picture this as a highway system. H_pre is the entrance ramp, H_post is the exit ramp, and H_res is the traffic manager between lanes.
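The roles of the three mappings can be sketched as one update step. This is a simplified illustration that assumes the stream holds n lanes of C features each, that H_pre and H_post are length-n weight vectors, and that H_res is an n×n matrix; these shapes and the function name `hyper_connection_step` are assumptions made for the example, not details confirmed by this article.

```python
def hyper_connection_step(stream, h_pre, h_post, h_res, layer):
    """One hyper-connection update on a stream of n lanes with C features each."""
    n, c = len(stream), len(stream[0])
    # H_pre (entrance ramp): blend the n lanes into a single layer input.
    layer_in = [sum(h_pre[i] * stream[i][k] for i in range(n)) for k in range(c)]
    layer_out = layer(layer_in)
    new_stream = []
    for i in range(n):
        # H_res (traffic manager): mix information between the lanes.
        mixed = [sum(h_res[i][j] * stream[j][k] for j in range(n))
                 for k in range(c)]
        # H_post (exit ramp): write the layer output back into lane i.
        new_stream.append([mixed[k] + h_post[i] * layer_out[k] for k in range(c)])
    return new_stream


def double(v):
    # Hypothetical stand-in for an attention or feed-forward layer.
    return [2.0 * t for t in v]


stream = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

# With these settings lane 0 behaves like an ordinary residual connection
# and lane 1 is left untouched.
plain = hyper_connection_step(stream, [1.0, 0.0], [1.0, 0.0], identity, double)
print(plain)

# A mixing H_res lets the two lanes exchange information.
mixed = hyper_connection_step(stream, [0.5, 0.5], [1.0, 1.0],
                              [[0.9, 0.1], [0.1, 0.9]], double)
print(mixed)
```

Setting H_res to the identity and using a single lane for reading and writing recovers the standard residual connection, which is why H_res is the part that needs care when it becomes learnable.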

One result from DeepSeek's ablation study is very interesting. H_res (the mapping applied to the residual stream) is the main driver of the performance improvement. When they turned it off and kept only the pre- and post-mappings, performance dropped dramatically. This makes sense: the heart of the approach is letting features from different depths interact and exchange information.

The manifold constraint

This is the point at which mHC departs from standard HC. Rather than allowing H_res to be arbitrary, mHC forces it to be doubly stochastic: every entry is non-negative, and every row and every column sums to 1.

Why is this important? There are three main reasons:

- Norm preservation: The spectral norm is bounded by 1, so the mapping cannot amplify the signal and gradients do not explode.
- Closure under composition: Multiplying two doubly stochastic matrices produces another doubly stochastic matrix, so the network stays stable across its full depth.
- Geometric interpretation: The matrix lies inside the Birkhoff polytope, which is the convex hull of all permutation matrices. In other words, the network learns weighted combinations of routing patterns, each of which sends information along different paths.
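The three properties can be checked numerically on small examples. The sketch below builds doubly stochastic matrices as weighted mixes of permutation matrices and then tests closure under multiplication and the norm bound. The helper names and the chosen weights are illustrative assumptions.

```python
import math


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def matvec(m, x):
    return [sum(m[i][j] * x[j] for j in range(len(x))) for i in range(len(m))]


def l2_norm(x):
    return math.sqrt(sum(v * v for v in x))


def is_doubly_stochastic(m, tol=1e-9):
    n = len(m)
    return (all(v >= -tol for row in m for v in row)
            and all(abs(sum(row) - 1.0) <= tol for row in m)
            and all(abs(sum(m[i][j] for i in range(n)) - 1.0) <= tol
                    for j in range(n)))


def permutation_matrix(perm):
    """Row i has a single 1 in column perm[i]: a pure routing pattern."""
    n = len(perm)
    return [[1.0 if perm[i] == j else 0.0 for j in range(n)] for i in range(n)]


def weighted_mix(weights, mats):
    """Convex combination of matrices; the weights must sum to 1."""
    n = len(mats[0])
    return [[sum(w * m[i][j] for w, m in zip(weights, mats))
             for j in range(n)] for i in range(n)]


# Geometric view: points in the Birkhoff polytope are mixes of permutations.
A = weighted_mix([0.6, 0.3, 0.1],
                 [permutation_matrix(p) for p in [(0, 1, 2), (1, 2, 0), (2, 0, 1)]])
B = weighted_mix([0.5, 0.5],
                 [permutation_matrix(p) for p in [(0, 1, 2), (0, 2, 1)]])

# Closure: the product of two doubly stochastic matrices is doubly stochastic.
AB = matmul(A, B)
print(is_doubly_stochastic(A), is_doubly_stochastic(B), is_doubly_stochastic(AB))

# Norm bound: applying A never increases the Euclidean length of a vector.
x = [3.0, -1.0, 2.0]
print(l2_norm(matvec(A, x)), "<=", l2_norm(x))
```

Because each permutation only reorders entries, any weighted average of permutations can at most keep the length of a vector, and chaining such averages stays inside the same set.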

The constraint is enforced with the Sinkhorn-Knopp algorithm, an iterative technique that alternately normalizes rows and columns until the desired accuracy is reached. Experiments showed that 20 iterations give a good approximation without excessive computation.
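A minimal sketch of the alternating normalization looks like this. It assumes the input matrix has strictly positive entries and runs a fixed 20 iterations to mirror the count mentioned above; the input values are made up, and this is an illustration rather than DeepSeek's implementation.

```python
def sinkhorn_knopp(m, iterations=20):
    """Alternately normalize rows and columns of a strictly positive matrix."""
    n = len(m)
    m = [row[:] for row in m]  # work on a copy
    for _ in range(iterations):
        # Row step: make every row sum to 1.
        for i in range(n):
            row_sum = sum(m[i])
            m[i] = [v / row_sum for v in m[i]]
        # Column step: make every column sum to 1.
        col_sums = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return m


raw = [[1.0, 2.0, 3.0],
       [2.0, 1.0, 1.0],
       [1.0, 3.0, 2.0]]
projected = sinkhorn_knopp(raw)

row_sums = [sum(row) for row in projected]
col_sums = [sum(projected[i][j] for i in range(3)) for j in range(3)]
print(row_sums)
print(col_sums)
```

Each step fixes one set of sums and slightly disturbs the other, but the disturbance shrinks with every pass, so both sets end up very close to 1.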

Parameterization details

The implementation is clever. Instead of processing a single feature vector, mHC flattens the entire n×C hidden matrix into one vector. This allows the full context to be used when computing the dynamic mappings.

The final constrained mappings are then obtained as follows:

- Sigmoid activation for H_pre and H_post (ensuring non-negativity)
- Sinkhorn-Knopp projection for H_res (enforcing double stochasticity)
- A small initial value for the gating coefficients (α = 0.01), so training starts conservatively

This configuration prevents the signal cancellation caused by interactions between positive and negative coefficients, while preserving the all-important identity mapping property.
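Putting the three ingredients together gives a sketch like the one below. Several details are assumptions made for illustration and are not stated in this article: the `dyn_*` inputs stand for the output of some learned projection of the flattened hidden matrix, the `b_*` values are static biases, the gate `alpha` scales the dynamic part, and an exponential is used to make the H_res entries positive before the projection.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sinkhorn_knopp(m, iterations=20):
    """Alternately normalize rows and columns of a strictly positive matrix."""
    n = len(m)
    m = [row[:] for row in m]
    for _ in range(iterations):
        for i in range(n):
            row_sum = sum(m[i])
            m[i] = [v / row_sum for v in m[i]]
        col_sums = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return m


def constrained_mappings(dyn_pre, dyn_post, dyn_res,
                         b_pre, b_post, b_res, alpha=0.01):
    """Return (H_pre, H_post, H_res) with the constraints applied."""
    # Sigmoid keeps the read and write weights non-negative.
    h_pre = [sigmoid(alpha * d + b) for d, b in zip(dyn_pre, b_pre)]
    h_post = [sigmoid(alpha * d + b) for d, b in zip(dyn_post, b_post)]
    # Assumption: exp() yields strictly positive entries for Sinkhorn-Knopp.
    positive = [[math.exp(alpha * d + b) for d, b in zip(d_row, b_row)]
                for d_row, b_row in zip(dyn_res, b_res)]
    h_res = sinkhorn_knopp(positive)
    return h_pre, h_post, h_res


# Hypothetical inputs for n = 3 lanes.
dyn_pre = [0.4, -1.2, 0.7]
dyn_post = [1.0, 0.3, -0.5]
dyn_res = [[0.2, -0.9, 1.1], [0.5, 0.1, -0.3], [-1.0, 0.8, 0.4]]
zero = [0.0, 0.0, 0.0]
# Assumed bias that favors the diagonal, so H_res starts close to "stay in lane".
b_res = [[2.0 if i == j else 0.0 for j in range(3)] for i in range(3)]

h_pre, h_post, h_res = constrained_mappings(dyn_pre, dyn_post, dyn_res,
                                            zero, zero, b_res)
print(h_pre)
print(h_post)
for row in h_res:
    print([round(v, 3) for v in row])
```

With the small gate value, the dynamic inputs barely move the mappings away from what the biases dictate, which is one way to read "start conservatively".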

Scaling behavior: Is it sustainable?

One of the most surprising things is how well the benefits of mHC scale. DeepSeek ran experiments along three different dimensions.

- Compute scaling: They trained models with 3B, 9B, and 27B parameters on proportionally scaled data. The performance benefit holds and even increases slightly at higher compute budgets. This is notable because many architectural tricks that work at small scale stop working when scaled up.
- Token scaling: They tracked performance throughout the training of a 3B model on 1 trillion tokens. The loss improvement is stable from very early training through convergence, which indicates that the benefits of mHC are not limited to the early phase of training.
- Propagation analysis: Remember the 3000x signal amplification of vanilla HC? With mHC, the maximum gain magnitude dropped to about 1.6, an improvement of three orders of magnitude. Even when more than 60 layers were composed, the forward and backward signal gains remained well controlled.

Performance benchmarks

DeepSeek evaluated mHC on models ranging from 3 billion to 27 billion parameters, and the improvement in stability was particularly noticeable.

- The training loss was smooth, with no sudden spikes throughout the run.
- Gradient norms stayed within a consistent range, in contrast to HC, which behaved erratically.
- Most importantly, performance did not just hold steady: it improved across multiple benchmarks.

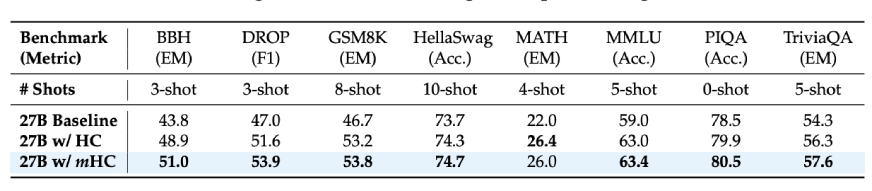

Consider the downstream task results for the 27B model:

- BBH (reasoning): 51.0% (vs. baseline 43.8%)
- DROP (reading comprehension): 53.9% (vs. baseline 47.0%)
- GSM8K (math problems): 53.8% (vs. baseline 46.7%)
- MMLU (knowledge): 63.4% (vs. baseline 59.0%)
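The size of each gap can be computed directly from the figures listed above. The snippet only restates those numbers; the variable names are arbitrary.

```python
# (mHC score, baseline score) in percent, as listed for the 27B model.
results = {
    "BBH": (51.0, 43.8),
    "DROP": (53.9, 47.0),
    "GSM8K": (53.8, 46.7),
    "MMLU": (63.4, 59.0),
}

# Absolute gain in percentage points, rounded to one decimal place.
gains = {name: round(mhc - base, 1) for name, (mhc, base) in results.items()}

for name, gain in gains.items():
    print(f"{name}: +{gain} points")
```

The gains come out at 7.2, 6.9, 7.1, and 4.4 points respectively.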

These are not small improvements: the gains are about 7 points on BBH, DROP, and GSM8K and 4.4 points on MMLU. Moreover, the improvements held both for larger models and over longer training runs, the two directions in which deep learning models are typically scaled.


Conclusion

If you are working on or training large language models, mHC is definitely worth considering. This is one of the rare papers that identifies a real problem, presents a mathematically sound solution, and then shows that it works at scale.

The main findings are:

- Increasing the residual stream width improves performance.
- However, the naive approach introduces instability.
- Restricting the interactions to doubly stochastic matrices preserves the identity mapping property.
- If done properly, the overhead is hardly noticeable.
- The benefit carries over to models with tens of billions of parameters.

Furthermore, mHC reminds us that architectural design remains a critical factor. Simply throwing more compute and data at the problem will not work forever. Sometimes you just need to take a step back, understand why things fail at scale, and fix the cause properly.

To be honest, this is the kind of research I like most. Rather than small tweaks, we need fundamental changes that make the whole field a little more robust.
