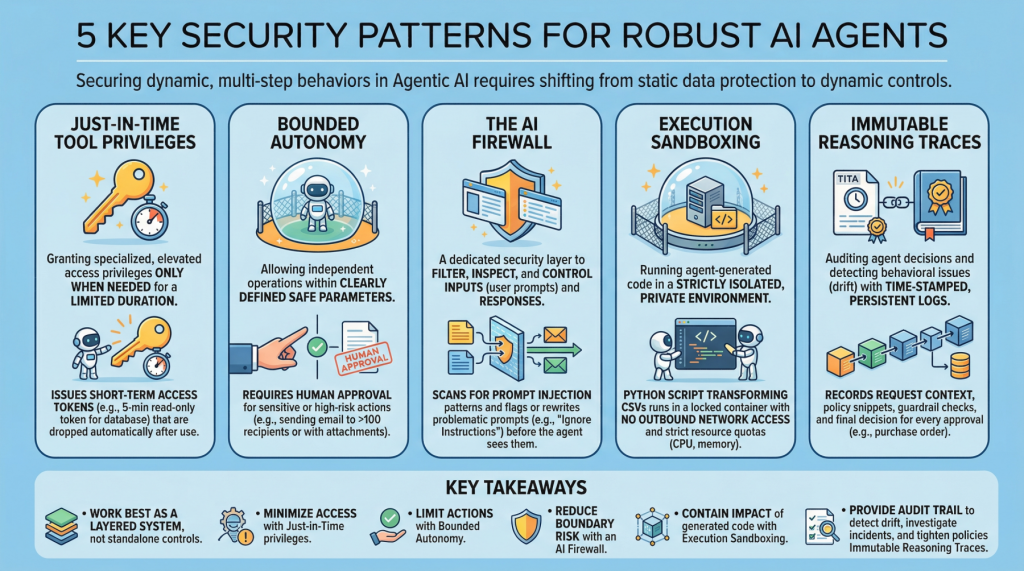

5 security patterns essential for robust agentic AI

Image by Editor

Introduction

Agentic AI, which revolves around autonomous software entities called agents, has reshaped the AI landscape and inspired many of the most prominent developments and trends of recent years, including applications built on generative and language models.

As with any wave of major technology, agentic AI brings the need to secure these systems. Doing so requires a shift from static data protection to dynamic, multi-step behavioral security. This article lists five key security patterns for robust AI agents and highlights why they matter.

1. Just-in-time tool permissions

Often abbreviated as JIT, this is a security model that grants specific or elevated permissions to users or applications only when needed and for a limited period of time. This contrasts with traditional standing permissions, which remain in place unless manually changed or revoked. In agentic AI, an example is issuing short-lived access tokens to limit the "blast radius" if an agent is compromised.

Example: Before an agent runs a billing adjustment job, it requests a narrow-scope, 5-minute read-only token for a single database table, and automatically revokes the token as soon as the query completes.
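The token lifecycle in this example can be sketched in a few lines of Python. This is a minimal illustration only: `TokenBroker`, `ScopedToken`, and `lease` are hypothetical names, and the in-memory dictionary stands in for whatever secrets manager or identity provider a real deployment would use.

```python
import secrets
import time
from contextlib import contextmanager
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopedToken:
    value: str
    resource: str      # the single resource the token covers, e.g. one table
    action: str        # the single action allowed, e.g. "read"
    expires_at: float  # deadline on the broker's clock


class TokenBroker:
    """Hypothetical in-memory broker, used here only for illustration."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._active = {}

    def issue(self, resource, action, ttl_seconds):
        token = ScopedToken(secrets.token_urlsafe(16), resource, action,
                            self._clock() + ttl_seconds)
        self._active[token.value] = token
        return token

    def revoke(self, token):
        self._active.pop(token.value, None)

    def is_allowed(self, token, resource, action):
        live = self._active.get(token.value)
        return (live is not None
                and self._clock() < live.expires_at
                and live.resource == resource
                and live.action == action)

    @contextmanager
    def lease(self, resource, action, ttl_seconds=300):
        # Issue a token for the duration of the block only.
        token = self.issue(resource, action, ttl_seconds)
        try:
            yield token
        finally:
            self.revoke(token)  # revoked even if the job raises
```

Wrapping the job in `with broker.lease("billing_adjustments", "read") as token:` means the token is revoked when the block exits, and the 300-second default acts as a backstop if revocation never happens.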

2. Bounded autonomy

This security principle lets AI agents operate independently within bounded settings, that is, within well-defined and safe parameters, balancing control and efficiency. It is especially important in high-risk scenarios, where requiring human approval for sensitive actions can prevent the catastrophic errors that full autonomy might cause. In effect, this creates a control plane that reduces risk and supports compliance requirements.

Example: Agents can draft and schedule outbound emails on their own, but messages to more than 100 recipients (or with attachments) are routed to a human for approval before sending.
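The approval rule in this example amounts to a small routing function. The sketch below assumes a hypothetical `EmailDraft` type and hypothetical return labels. The 100-recipient threshold and the attachment check come from the example itself.

```python
from dataclasses import dataclass, field

MAX_AUTONOMOUS_RECIPIENTS = 100  # threshold taken from the example above


@dataclass
class EmailDraft:
    recipients: list
    body: str
    attachments: list = field(default_factory=list)


def route_email(draft: EmailDraft) -> str:
    """Decide whether the agent may send on its own or must escalate."""
    too_many = len(draft.recipients) > MAX_AUTONOMOUS_RECIPIENTS
    if too_many or draft.attachments:
        return "needs_human_approval"
    return "send_autonomously"
```

Keeping the rule in ordinary code outside the agent's prompt means the agent cannot talk its way around the boundary. It can only produce drafts that the rule then routes.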

3. AI firewall

An AI firewall is a dedicated security layer that filters, inspects, and controls inputs (user prompts) and the resulting responses to protect AI systems. It helps defend against threats such as prompt injections, data leaks, and harmful or policy-violating content.

Example: Incoming prompts are scanned for prompt injection patterns (for example, requests to ignore previous instructions or reveal secrets), and flagged prompts are blocked or rewritten into a safer form before they reach the agent.
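A very simple version of this input screening can be written with regular expressions. The two patterns below are illustrative assumptions rather than a vetted rule set, and `screen_prompt` is a hypothetical name. Production firewalls typically combine such rules with trained classifiers, since fixed patterns are easy to evade.

```python
import re

# Illustrative patterns only; a real rule set would be broader and maintained.
INJECTION_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.I),
    re.compile(r"reveal\s+(your\s+)?(system\s+prompt|secrets?|api\s+keys?)", re.I),
]


def screen_prompt(prompt: str):
    """Return a verdict ("allow" or "block") and the patterns that matched."""
    matched = [p.pattern for p in INJECTION_PATTERNS if p.search(prompt)]
    verdict = "block" if matched else "allow"
    return verdict, matched
```

Returning the matched patterns alongside the verdict lets the caller log why a prompt was blocked, or choose to rewrite the prompt instead of rejecting it.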

4. Execution sandboxing

Execution sandboxing means running agent-generated code inside a strictly isolated private environment or network perimeter, known as an execution sandbox. It helps prevent unauthorized access, resource exhaustion, and potential data breaches by containing the effects of untrusted or unpredictable execution.

Example: An agent that writes a Python script to transform a CSV file runs the script inside a locked-down container with no outbound network access, strict CPU/memory quotas, and read-only mounts for input data.
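One way to express these container constraints, assuming Docker is the sandbox runtime, is to build the `docker run` command in Python. The function name, mount paths, image tag, and specific quota values are assumptions chosen for illustration. The function only constructs the command, so actually running it requires a working Docker installation.

```python
from pathlib import Path


def build_sandbox_command(script: str, input_dir: str,
                          image: str = "python:3.12-slim") -> list:
    """Build a docker run command for an untrusted, agent-written script."""
    script_path = Path(script).resolve()   # bind mounts need absolute paths
    input_path = Path(input_dir).resolve()
    return [
        "docker", "run", "--rm",
        "--network", "none",        # no outbound network access
        "--cpus", "1",              # CPU quota (assumed value)
        "--memory", "256m",         # memory quota (assumed value)
        "--pids-limit", "64",       # cap the number of processes
        "--read-only",              # read-only root filesystem
        "--cap-drop", "ALL",        # drop all Linux capabilities
        "--tmpfs", "/tmp",          # small writable scratch space
        "-v", f"{script_path}:/sandbox/script.py:ro",
        "-v", f"{input_path}:/data:ro",
        image, "python", "/sandbox/script.py",
    ]
```

The resulting list can be passed to `subprocess.run(cmd, timeout=...)`, which adds a wall-clock limit on top of the CPU and memory quotas.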

5. Immutable inference traces

This practice supports auditing the decisions of autonomous agents and detecting behavioral issues such as drift. It requires building time-stamped, tamper-evident, persistent logs that capture agent inputs, key intermediate artifacts used in decision-making, and policy checks. This is an important step toward transparency and accountability for autonomous systems, especially in high-stakes application areas such as procurement and finance.

Example: For every purchase order that an agent approves, record the request context, any policy snippets retrieved, any guardrail checks applied, and the final decision in a write-once log that can be independently verified during an audit.
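Tamper evidence is commonly achieved by chaining entries with a hash, so that changing any earlier record invalidates everything after it. The sketch below uses a hypothetical `TraceLog` class and keeps entries in memory. It illustrates the verification idea only, and a real deployment would also need write-once storage that the agent itself cannot modify.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class TraceLog:
    """Hypothetical append-only log in which each entry hashes its predecessor."""

    def __init__(self):
        self._entries = []

    def append(self, record: dict) -> dict:
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        body = {"timestamp": time.time(), "record": record, "prev_hash": prev}
        entry = {**body, "hash": _digest(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check that the chain links are intact.
        prev = GENESIS
        for entry in self._entries:
            body = {k: entry[k] for k in ("timestamp", "record", "prev_hash")}
            if entry["prev_hash"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True
```

An auditor who holds only the final hash can replay the log and confirm that no approval record was edited or removed after the fact.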

Key takeaways

These patterns work best as a layered system rather than as standalone controls. Just-in-time tool permissions minimize what agents can access at any given time, while bounded autonomy limits the actions agents can take without oversight. AI firewalls reduce risk at interaction boundaries by filtering and shaping inputs and outputs, and execution sandboxes contain the effects of code generated or executed by agents. Finally, immutable inference traces provide an audit trail that lets you detect drift, investigate incidents, and consistently enforce policies over time.

| Security pattern | Description |
| --- | --- |
| Just-in-time tool permissions | Grant short-lived, narrow-scope access only when necessary to reduce the blast radius of a breach. |
| Bounded autonomy | Limit the actions agents can perform independently and route sensitive steps through approvals and guardrails. |
| AI firewall | Filter and inspect prompts and responses to block or neutralize threats such as prompt injections, data leaks, and harmful content. |
| Execution sandboxing | Run agent-generated code in an isolated environment with strict resource and access controls to limit harm. |
| Immutable inference traces | Create time-stamped, tamper-evident logs of inputs, intermediate artifacts, and policy checks for auditability and drift detection. |

Together, these constraints reduce the chance that a single failure will escalate into a full-scale breach, without sacrificing the operational benefits that make agentic AI so appealing.